Introduction

At Axelerant, we follow a Sprint-based development model in all our managed projects. Before adopting the Xray test management tool, we managed tests in a couple of ways, such as Google spreadsheets and custom Jira fields like Test Case and Test Steps. However, these approaches didn’t provide the benefits of a full-fledged test management system, so we chose Xray after evaluating a few other tools available in the market.

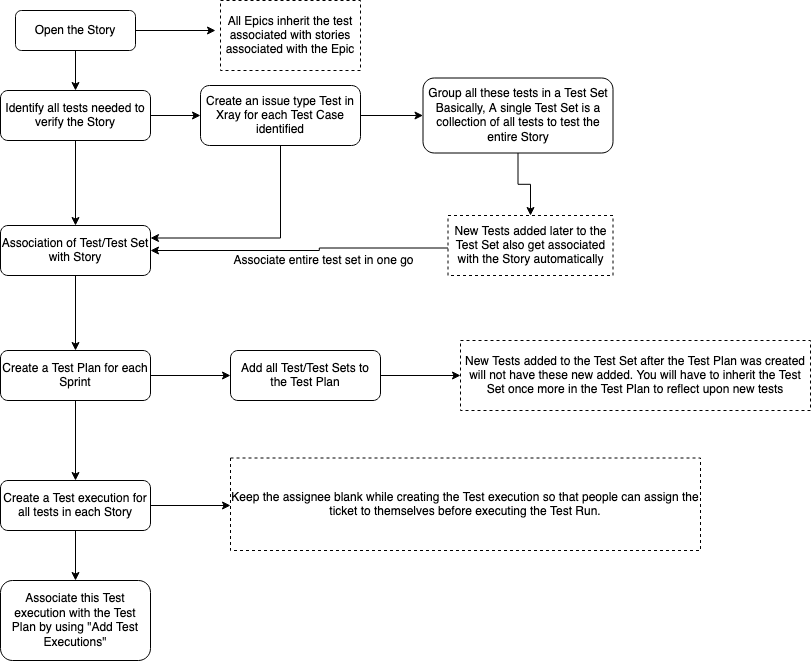

Let us now see how we use this tool in our Sprints at Axelerant. Assume that our Epics, detailed stories, and sub-tasks are in place, and that Sprint 1 is planned and well-groomed. Here are the steps that we follow in each Sprint.

Steps Followed in Each Sprint

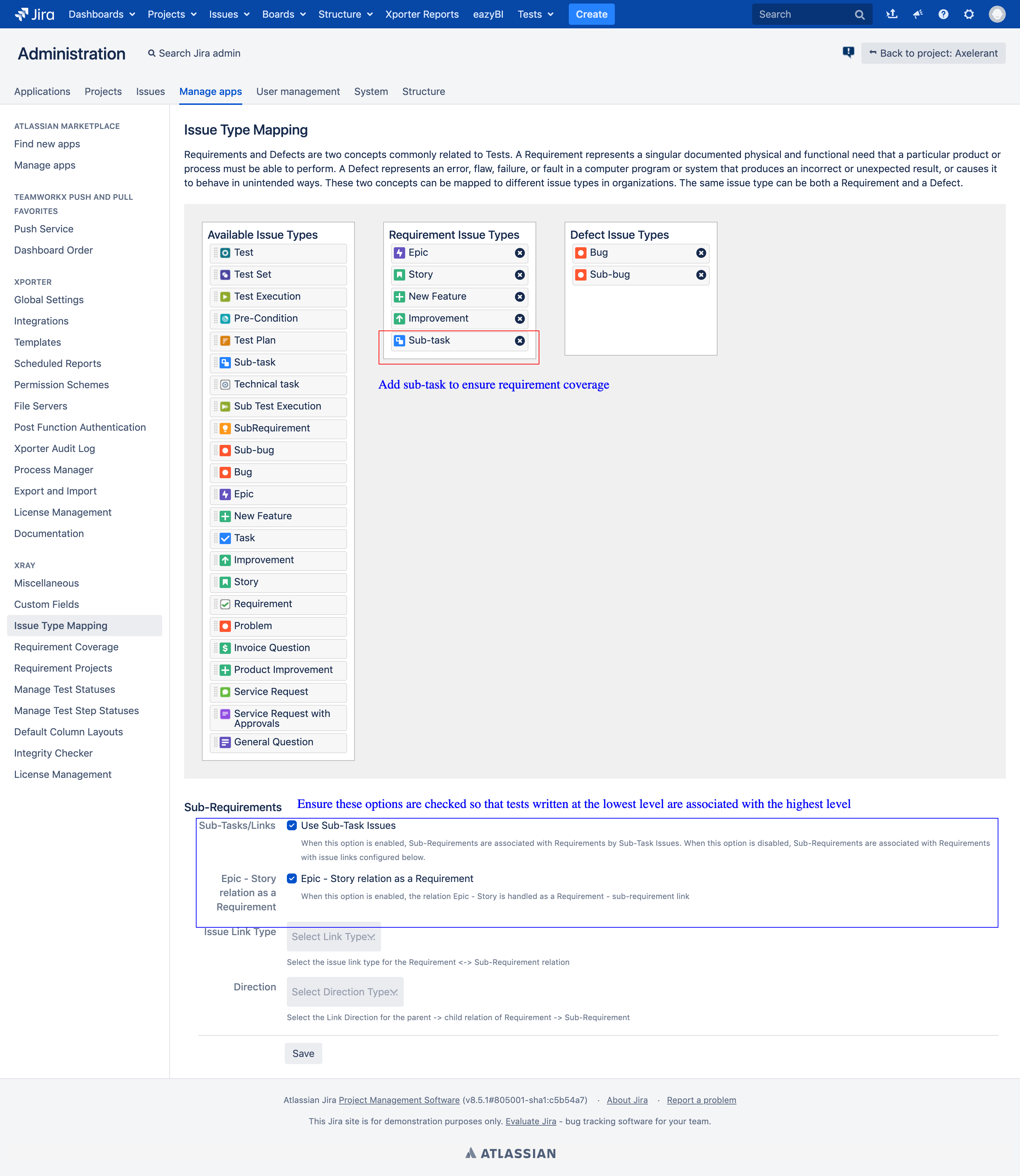

- The QA team picks up stories and writes tests against the Acceptance Criteria. If a story has sub-tasks, tests are defined at the sub-task level instead of at the Story level. Ensure that Sub-task is also added to the Requirement Issue Types column on the Issue Type Mapping configuration page, as marked by the red annotation in the image below.

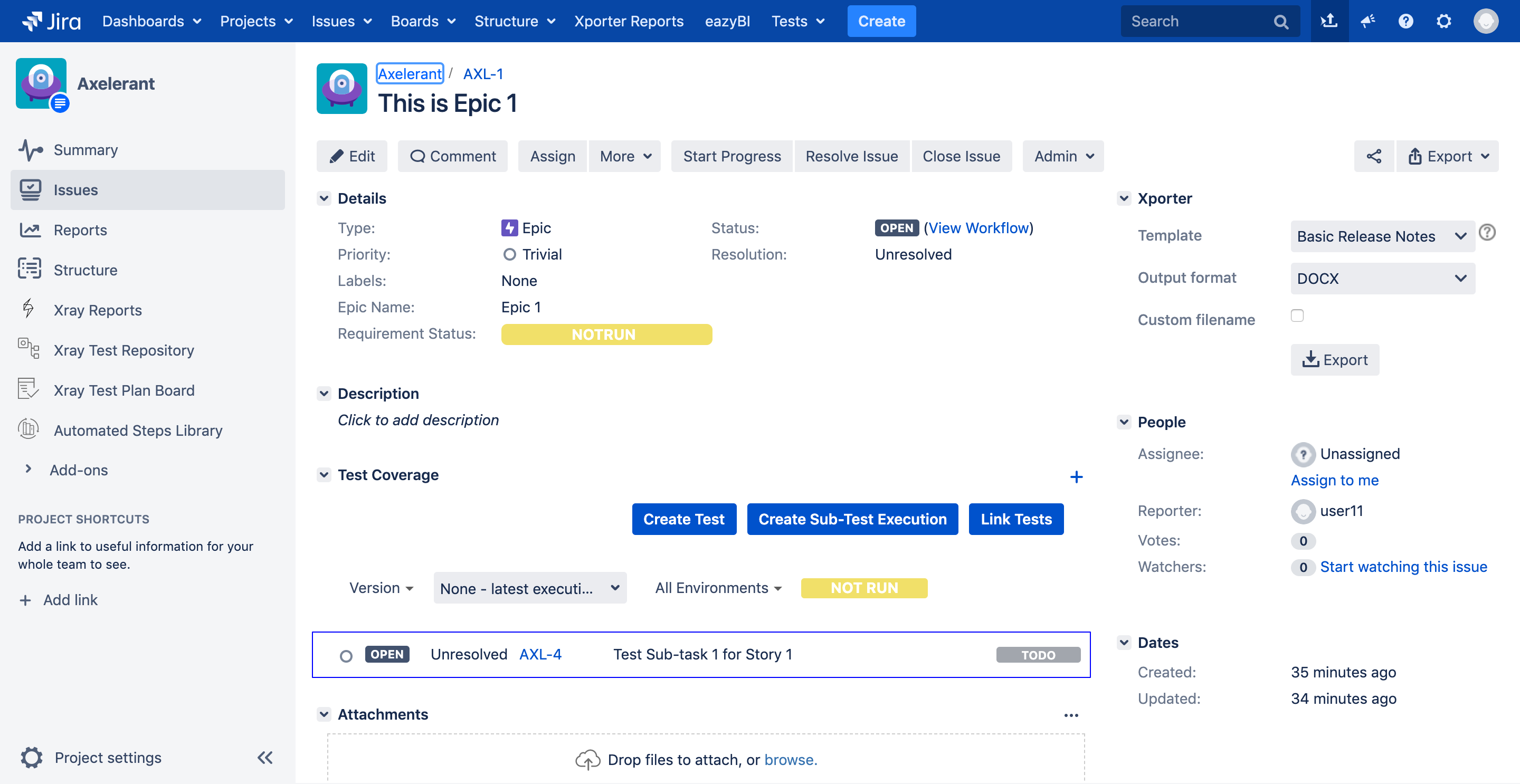

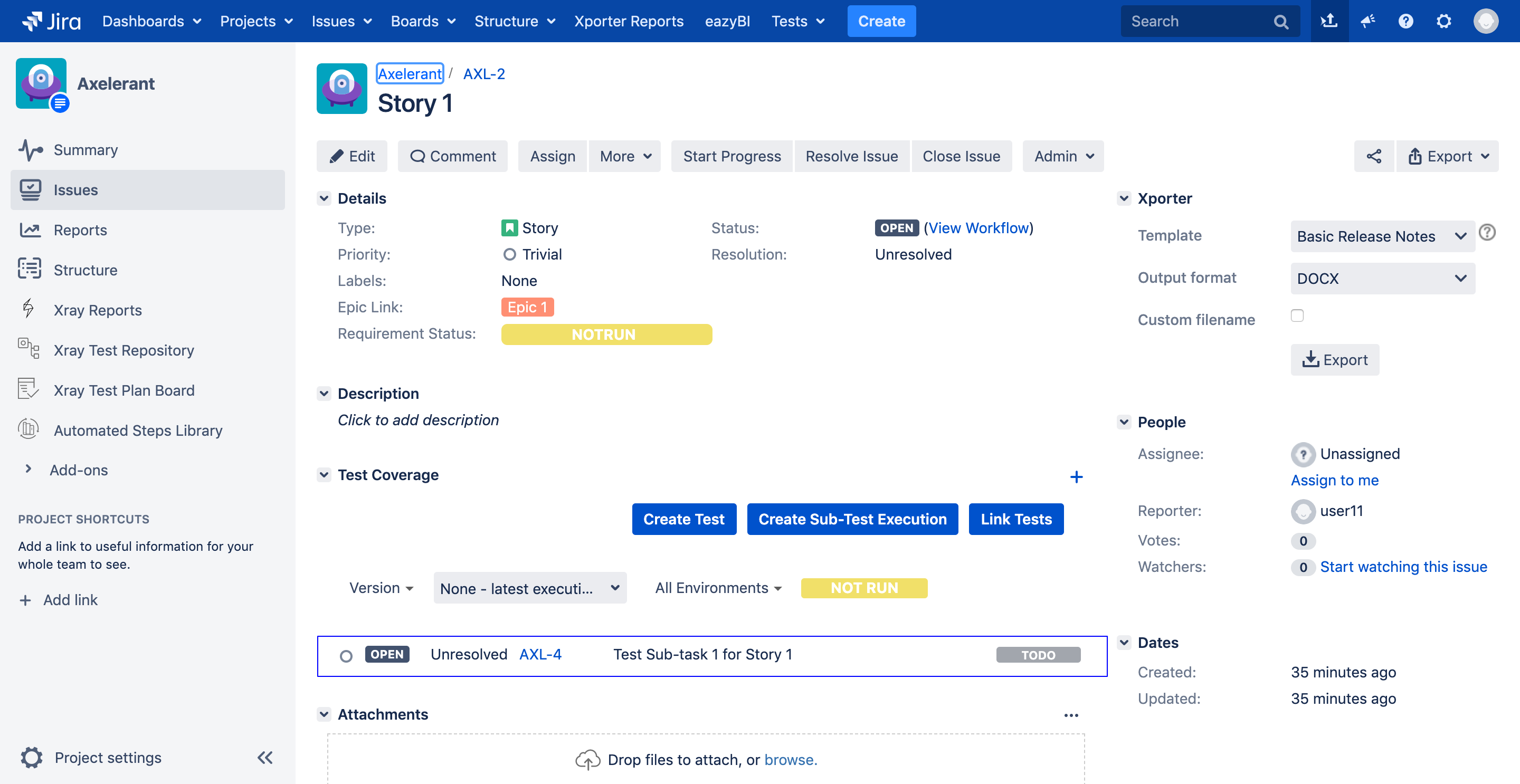

- When tests are written at the lowest level, they are associated with both the parent Story and the Epic of the sub-task. Hence, the requirement coverage status is automatically reflected in the parent issue types. The accompanying images show how a test created for a sub-task got associated with both the Epic and the Story.

- If the story has no sub-tasks, then continue writing tests at the story level.

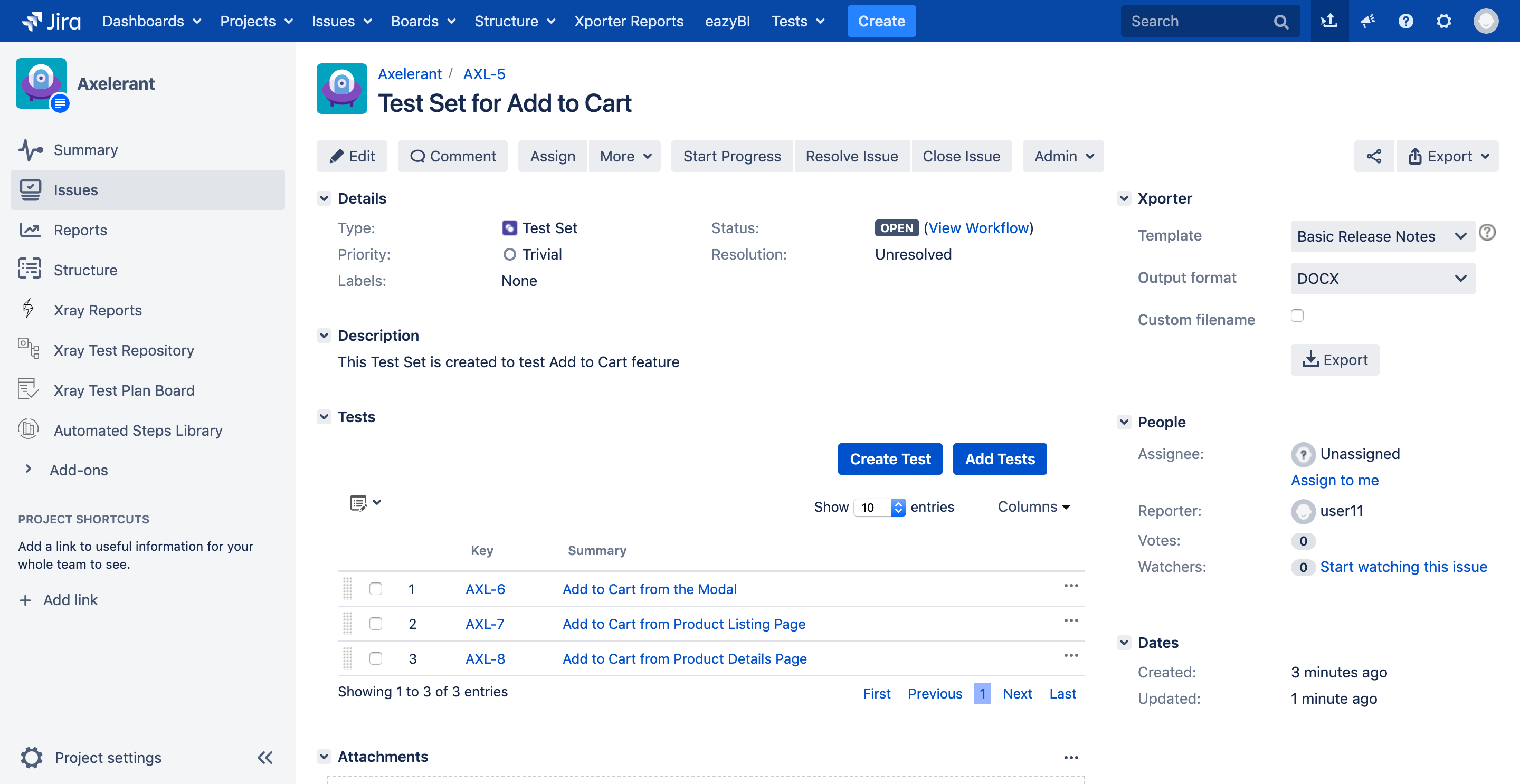

- After all the tests are written for a Story, identify the features and create a Test Set per feature. For example, Test Sets can be created for Checkout, Add to Cart, Payment methods, and so on. A sample Test Set would look something like this.

- Please note that creating Test Sets is an optional step. However, I would personally recommend it, both to identify regression tests effectively and to add tests to a Test Plan or a Test execution in bulk instead of one by one. Say, for instance, you have a Story to implement an Add to Cart feature. If you have a Test Set that contains all the tests related to Add to Cart functionality, you can add that Test Set to the Story, and all its tests will be associated with the Story. Please look at the screencast to understand how it can be done.

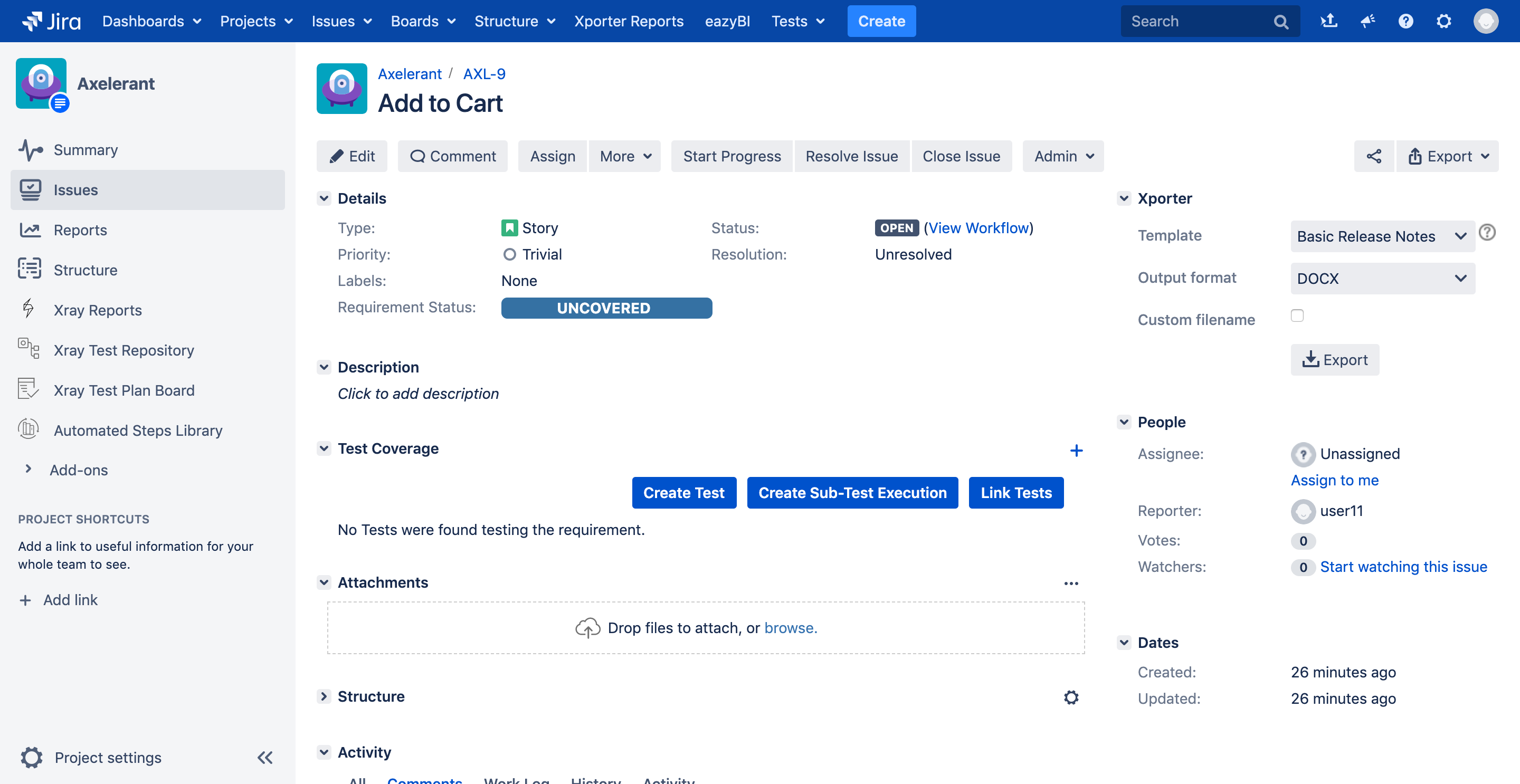

- After writing Tests/Test Sets, ensure they are associated with related stories using the Tests relation. Without establishing this relationship, we would not be able to calculate the Requirement coverage status for Epics/Stories/Sub-tasks. If the Tests/Test Sets are not associated with the Requirement issue types, then the Requirement Status field shows UNCOVERED, which means there are no tests either written or associated yet.

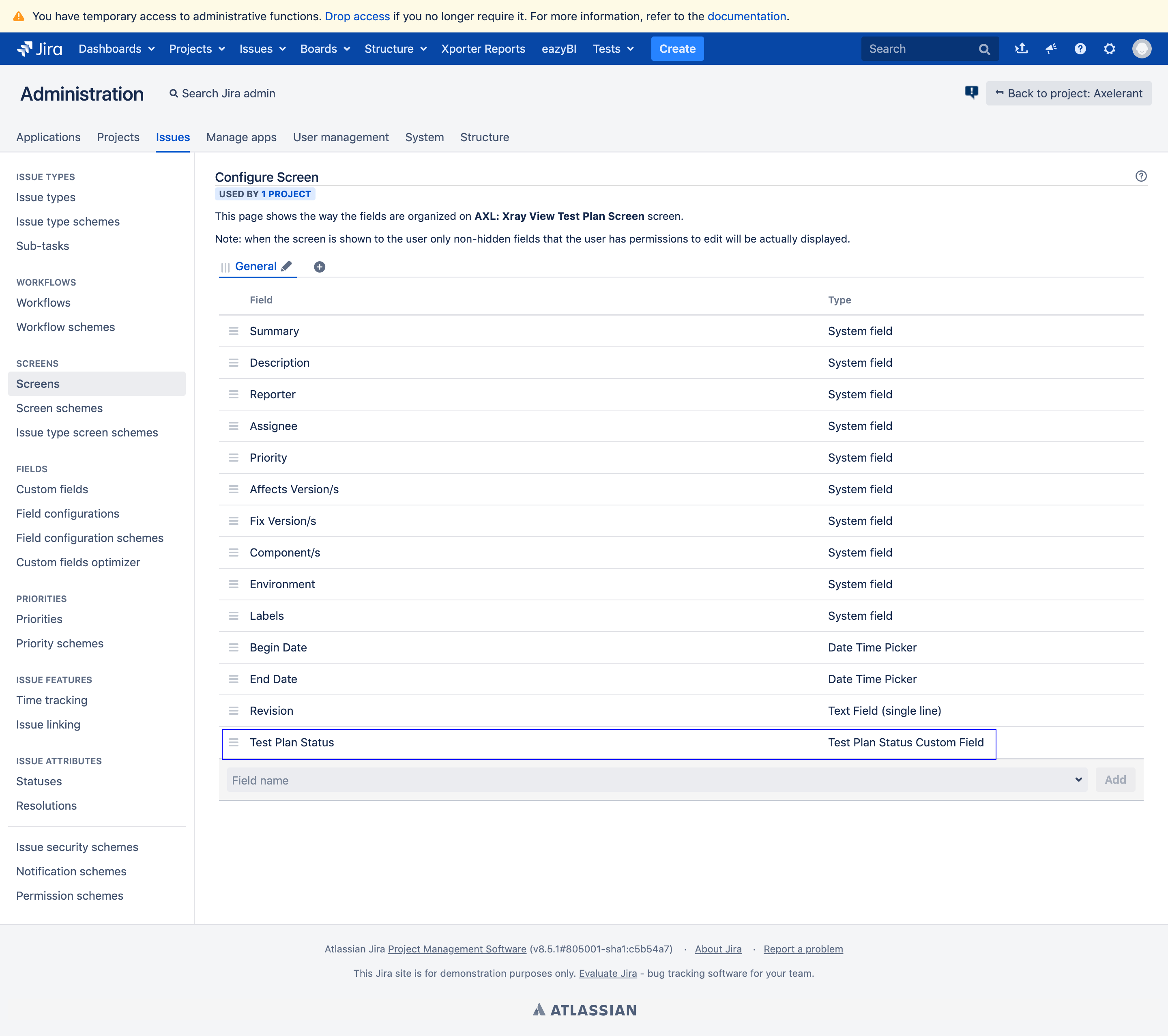

- The next step is to create a Test Plan per Sprint. Also, configure the Test Plan Status custom field on the Test Plan view page to see Sprint-level progress at a glance. This is what the configuration looks like.

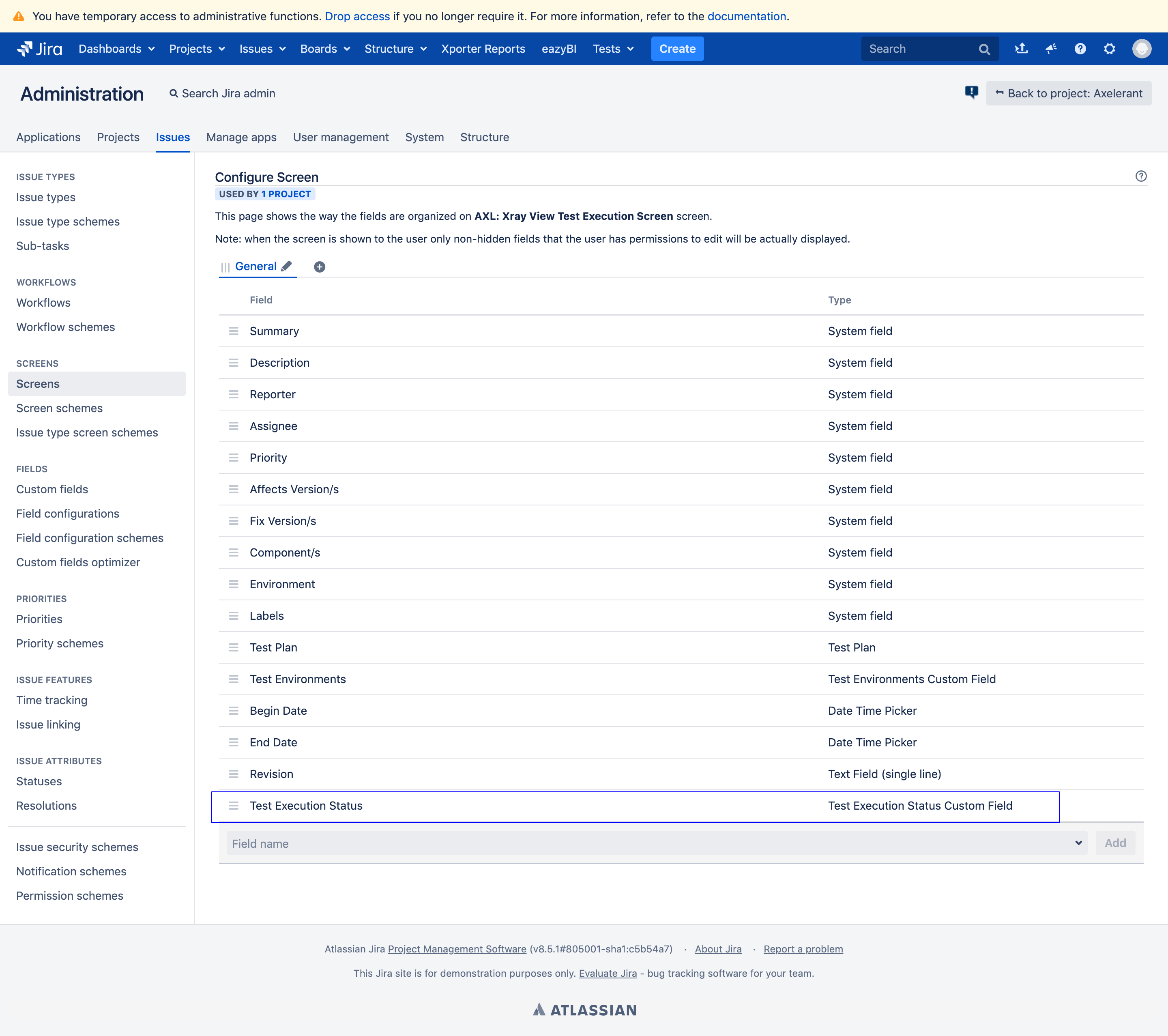

- Now, create a Test execution per Story in the current Sprint. Add all tests associated with the Story to the Test execution. Again, if you have created a Test Set, you can add it directly instead of adding individual tests. Let us configure one more custom field named Test Execution Status to track Test execution progress at the Story level. This is what the configuration looks like.

- Add each Test execution to the Test Plan created for the Sprint. For example, if your Sprint has five stories, your Test Plan should contain at least five Test executions. All the executions associated with this Test Plan contribute to the overall execution status, that is, the Test Plan Status.
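To make the last two steps concrete, here is a minimal Python sketch of how a Sprint-level Test Plan could summarize its per-Story Test executions. It is an illustrative model only and does not call Xray or Jira: the `TestExecution` and `TestPlan` classes, the issue keys, and the rule that a later execution overrides an earlier result for the same test are all assumptions made for this example.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class TestExecution:
    """Hypothetical stand-in for one Test execution created for a Story."""
    story_key: str
    # Maps a test key to its run status, e.g. "PASS", "FAIL" or "TODO".
    results: Dict[str, str] = field(default_factory=dict)


@dataclass
class TestPlan:
    """Hypothetical stand-in for the Test Plan created for a Sprint."""
    sprint: str
    executions: List[TestExecution] = field(default_factory=list)

    def status_counts(self) -> Counter:
        """Count the latest status of every test across all executions.

        Assumption of this sketch: executions are listed oldest first,
        so a later result for the same test replaces an earlier one.
        """
        latest: Dict[str, str] = {}
        for execution in self.executions:
            latest.update(execution.results)
        return Counter(latest.values())


# Example data: the keys below are made up for illustration.
plan = TestPlan(
    sprint="Sprint 1",
    executions=[
        TestExecution("SHOP-101", {"SHOP-T1": "PASS", "SHOP-T2": "FAIL"}),
        TestExecution("SHOP-102", {"SHOP-T3": "TODO"}),
    ],
)
counts = plan.status_counts()
print(dict(counts))
```

With one execution per Story, the counts give the same kind of at-a-glance summary that the Test Plan Status field provides for the Sprint.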

To explain the entire process in a nutshell, please look at the image below.

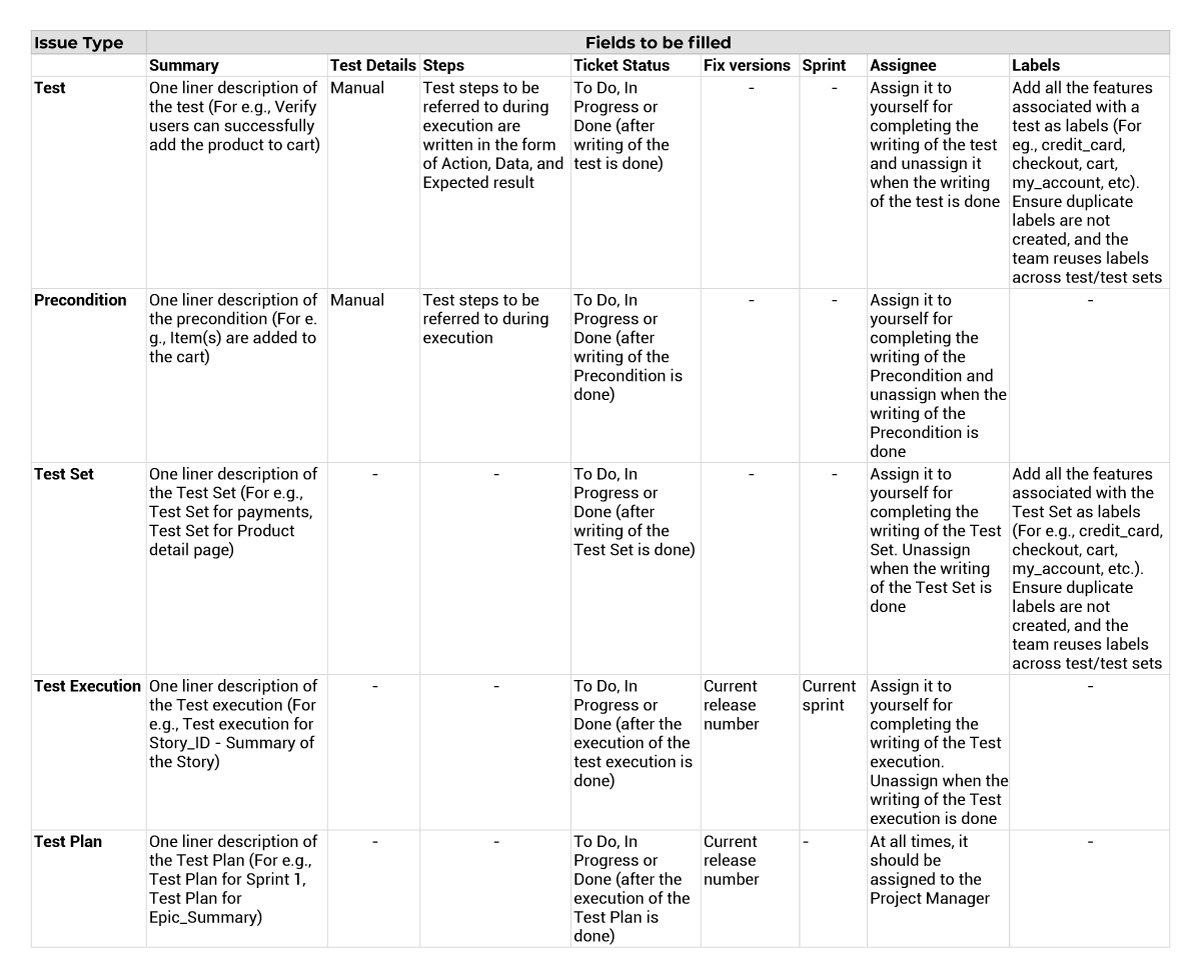
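The coverage roll-up described in the steps above can also be sketched in a few lines of Python. This is a simplified model rather than Xray's actual calculation: the `Issue` class and the issue keys are hypothetical, and the sketch only distinguishes UNCOVERED from a generic "COVERED" label, whereas Xray's real coverage statuses also depend on execution results.

```python
class Issue:
    """Hypothetical requirement issue: an Epic, a Story, or a sub-task."""

    def __init__(self, key, parent=None):
        self.key = key
        self.parent = parent
        self.children = []
        self.tests = []  # keys of tests linked directly to this issue
        if parent is not None:
            parent.children.append(self)

    def all_tests(self):
        """Collect tests linked to this issue and to everything below it."""
        found = list(self.tests)
        for child in self.children:
            found.extend(child.all_tests())
        return found

    def requirement_status(self):
        """UNCOVERED when no test is linked anywhere in the subtree.

        "COVERED" is a simplification used only in this sketch.
        """
        return "COVERED" if self.all_tests() else "UNCOVERED"


# Example hierarchy: Epic > Story > sub-task, plus a Story without tests.
epic = Issue("SHOP-1")
story = Issue("SHOP-10", parent=epic)
subtask = Issue("SHOP-11", parent=story)
other_story = Issue("SHOP-20", parent=epic)

# A test written at the lowest level, against the sub-task.
subtask.tests.append("SHOP-T1")

for issue in (epic, story, subtask, other_story):
    print(issue.key, issue.requirement_status())
```

In this model, the single test linked to the sub-task is enough to mark the sub-task, its Story, and the Epic as covered, while the Story with no linked tests stays UNCOVERED.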

Also, the table below lists the minimum fields to fill in for each Xray Issue Type for this structure to work effectively:

In the next blog, we will see how we utilized this structure to generate a meaningful and effective Jira Dashboard to track testing progress in the project.

Shweta Sharma, Director of Quality Engineering Services

When Shweta isn't at work, she's either on a family road trip across the country or she's dancing with her kids—it's a great combination.
