The Problem: Insight Arrives Too Late

Most organizations today do not lack data. They operate in environments where events are generated continuously across transactions, customer interactions, operational systems, and digital platforms. What they lack is the ability to respond to those events as they unfold. Leaders do not need to be convinced that reacting hours or days later is ineffective; they see the consequences every day.

Fraud is identified after transactions settle. Abuse is discovered only after users are already affected. Customer behavior is analyzed after intent has passed. Operational issues are reviewed after SLA breaches. In each case, the pattern was evident as events unfolded, but the system responded too late to be effective.

The issue is not whether real-time detection is valuable. It is whether existing systems are capable of acting fast enough. Most are not. Detection logic typically sits downstream, tied to batch cycles, dashboards, investigations, or deployment-heavy pipelines. These systems explain outcomes well, but they intervene too late.

This accepted delay between signal and action directly translates into lost revenue, increased risk exposure, and reduced trust. That delay is the real problem.

That problem is decision latency.

And until it is addressed at the systems level, real-time pattern detection will remain a technical aspiration rather than an operational capability.

What Real-Time Pattern Detection Implies

Real-time pattern detection is not about faster reports or more frequently refreshed dashboards. It implies the ability to recognize meaningful behavior as events unfold and to respond before the next event occurs.

Most organizations already capture events as they happen: transactions, clicks, logins, system signals. The failure occurs after ingestion. Signals are interpreted only once data has settled into aggregates or reports, at which point decisions are retrospective by default.

Real-time pattern detection closes this gap. It identifies patterns such as sudden spikes, coordinated behavior, deviations from expected baselines, and correlations with contextual data while the behavior is still unfolding, rather than after it has stabilized.

How This Problem Shows Up Across Industries

The impact of delayed pattern detection becomes clearest when viewed through its direct business outcomes.

- Financial Services And Fintech: Data value has an immediate half-life. Detecting fraud a day later only explains the loss. The difference between stopping a transaction in milliseconds and flagging it after settlement is the difference between prevention and post-mortem.

- Gaming And Digital Communities: Coordinated abuse often emerges within minutes. Knowing that a group of accounts behaved suspiciously yesterday does little to protect today’s players. Detection must occur during play, before the behavior spreads and trust erodes.

- Retail And E-commerce: Customer intent decays fast. Knowing that a customer added an item to their cart or viewed a product yesterday is far less valuable than recognizing their intent while they are still in-session. Real-time pattern detection enables personalization, pricing, and offers to adapt in the moment, not after the opportunity has passed.

- Logistics And Supply Chain: Operational signals lose value quickly. Identifying a route deviation after a shipment is already delayed only explains the failure. Detecting the pattern early creates a narrow window to reroute or intervene.

- Marketing And Adtech: Spend decisions happen continuously. Identifying fraud or inefficiency after budgets are exhausted is too late. Real-time pattern detection allows anomalies to be caught while campaigns are still running, not after reporting closes.

Across industries, the pattern is consistent. When insight arrives late, value has already leaked. Real-time pattern detection shifts decisions from explanation to intervention.

Engineering A Dynamic Real-Time Pattern Detection Engine

Across multiple engagements, our data engineering team observed the same pattern: events were flowing in real time, but decisions were consistently delayed.

Detection logic lagged behind fast‑moving behavior. Even small rule changes took too long to deploy. Stateful pipelines were brittle to change, forcing teams to choose between speed and stability. Systems were good at explaining what happened, but struggled to intervene in real time.

To address this, our data engineering team set out to rethink how real‑time detection systems are built. The goal was clear: transform static, code‑heavy stream processing into a dynamic, configuration‑driven intelligence engine that could evolve as fast as the behavior it was meant to detect.

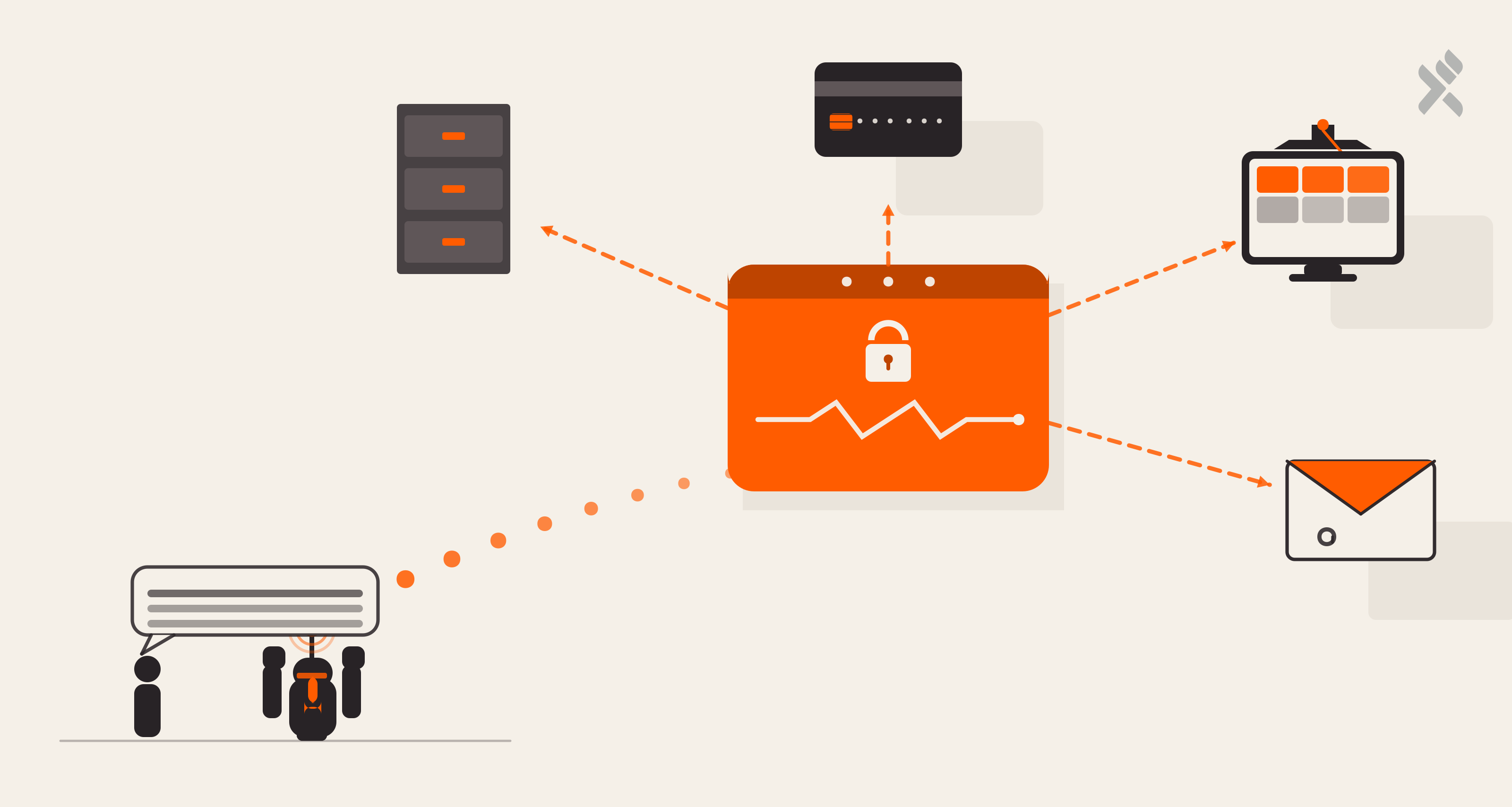

Rather than embedding logic directly into streaming jobs, the approach treated detection rules as data. Logic could be updated independently of the running system, without redeployments or state loss. This shift enabled support for more complex patterns, rapid adaptation to change, and detection of coordination among newly created or low-reputation accounts at millisecond-level latency.
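To make "rules as data" concrete, here is a minimal sketch in plain Python. It is not the team's implementation: the `ThresholdRule` schema, the `RuleRegistry` class, and the `version` field are all hypothetical names introduced for illustration. The point is only that a rule change arrives as a message and takes effect on the next event, with no restart.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class ThresholdRule:
    """A detection rule expressed as data rather than code (hypothetical schema)."""
    rule_id: str
    field: str        # event field to inspect
    threshold: float  # fire when the field value exceeds this
    version: int      # assumed: monotonically increasing per rule


class RuleRegistry:
    """Holds the active rules; updates arrive as JSON messages."""

    def __init__(self):
        self._rules = {}

    def apply_update(self, message: str) -> None:
        rule = ThresholdRule(**json.loads(message))
        current = self._rules.get(rule.rule_id)
        # Ignore stale or replayed updates so a late message cannot roll a rule back.
        if current is None or rule.version > current.version:
            self._rules[rule.rule_id] = rule

    def evaluate(self, event: dict) -> list:
        """Return the ids of all rules the event violates."""
        return [
            r.rule_id
            for r in self._rules.values()
            if event.get(r.field, 0) > r.threshold
        ]


registry = RuleRegistry()
registry.apply_update(
    '{"rule_id": "high_amount", "field": "amount", "threshold": 1000, "version": 1}'
)
event = {"account": "a1", "amount": 750}
before = registry.evaluate(event)

# Tightening the threshold is just another message; nothing is redeployed.
registry.apply_update(
    '{"rule_id": "high_amount", "field": "amount", "threshold": 500, "version": 2}'
)
after = registry.evaluate(event)
print(before, after)  # [] ['high_amount']
```

In a stream processor, the registry would live inside the running job and be fed from a dedicated rules stream. Flink's broadcast state is one common way to do this, though the sketch above does not depend on it.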

This work led to a focused proof of concept, designed to validate whether such an approach could hold up under real‑world conditions.

The PoC: Detecting Coordinated Behavior As It Happens

The Axelerant team chose a specific but broadly applicable scenario to validate the approach: detecting coordinated, inauthentic behavior in real time.

The scenario is often referred to as AstroTurfing. It describes situations in which newly created or low-reputation accounts coordinate to artificially amplify a topic or signal. While this example is common on social and content platforms, the underlying pattern appears in many domains, from fraud rings in financial systems to coordinated abuse in gaming environments.

AstroTurfing itself was not the goal. It was a convenient way to test whether a system could:

- Operate at millisecond-level latency

- Combine velocity and correlation patterns

- Adapt detection logic without redeploying stateful jobs

The proof of concept was implemented using Kafka as the event backbone and Apache Flink (via PyFlink) as the real-time processing engine. Activity events flowed through Kafka topics, while detection rules were published separately as configuration data. Flink evaluated these rules in memory, maintaining state to detect bursts of activity and correlate them with contextual signals such as account reputation.

The system identified high-velocity activity first, then correlated those events with contextual data. When both conditions were met, a detection signal was emitted immediately, while the behavior was still unfolding.
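The two-step logic described above (velocity first, then correlation) can be sketched in plain Python. This is an illustration under stated assumptions, not the PyFlink job itself: the `CoordinationDetector` class, the event fields, and every threshold constant are hypothetical, and events are assumed to arrive in timestamp order.

```python
from collections import defaultdict, deque

WINDOW_SECONDS = 10      # assumed sliding-window length
VELOCITY_THRESHOLD = 3   # assumed: events per topic within the window
LOW_REPUTATION = 0.3     # assumed: scores below this count as low reputation
MIN_LOW_REP_SHARE = 0.6  # assumed: share of low-reputation events needed


class CoordinationDetector:
    def __init__(self, reputation):
        self.reputation = reputation       # account id -> score in [0, 1]
        self.windows = defaultdict(deque)  # topic -> deque of (ts, account)

    def process(self, event):
        """Consume one event; return a detection dict or None."""
        topic, ts = event["topic"], event["ts"]
        window = self.windows[topic]
        window.append((ts, event["account"]))
        # Evict events that have fallen out of the sliding window.
        while window and window[0][0] <= ts - WINDOW_SECONDS:
            window.popleft()

        # Step 1: velocity. Is this topic receiving a burst of activity?
        if len(window) < VELOCITY_THRESHOLD:
            return None

        # Step 2: correlation. Is the burst driven by low-reputation accounts?
        # Unknown accounts default to 0.0, i.e. they are treated as brand new.
        low = sum(
            1 for _, acct in window
            if self.reputation.get(acct, 0.0) < LOW_REPUTATION
        )
        share = low / len(window)
        if share < MIN_LOW_REP_SHARE:
            return None
        return {"topic": topic, "events": len(window), "low_rep_share": share}


detector = CoordinationDetector(
    {"vet1": 0.9, "vet2": 0.8, "vet3": 0.95, "new1": 0.1, "new2": 0.05}
)
events = [
    {"topic": "#organic", "account": "vet1", "ts": 0},
    {"topic": "#organic", "account": "vet2", "ts": 1},
    {"topic": "#organic", "account": "vet3", "ts": 2},  # burst, but trusted accounts
    {"topic": "#boost", "account": "new1", "ts": 3},
    {"topic": "#boost", "account": "new2", "ts": 4},
    {"topic": "#boost", "account": "new3", "ts": 5},    # burst from low-reputation accounts
]
detections = []
for e in events:
    result = detector.process(e)
    if result is not None:
        detections.append(result)
print(detections)  # [{'topic': '#boost', 'events': 3, 'low_rep_share': 1.0}]
```

The `#organic` burst satisfies the velocity condition but not the correlation condition, so only `#boost` is flagged. The signal is emitted on the very event that completes the pattern, which is the behavior the PoC set out to validate.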

The PoC showed that real-time pattern detection at sub-second scale is achievable, that multiple pattern types can be composed safely, and that detection logic can evolve without stopping the system.

What Comes Next: From Architecture To Adaptive Intelligence

In upcoming posts, we will go deeper into the engineering side of real-time pattern detection.

We will break down how threshold patterns are implemented with dynamic rule updates, how velocity-based detection works with state and windows, and how correlation patterns combine multiple streams and contextual signals in real time.

But this is not a purely technical deep dive. Each of these components addresses a structural constraint that traditionally embeds latency into enterprise systems.

Threshold patterns, when dynamically configurable, eliminate the need for redeployments every time risk tolerances change. Velocity-based detection, powered by state and windowing, allows systems to reason about behavior over time instead of reacting to isolated events. Correlation patterns that merge multiple streams with contextual data shift detection from single-signal alerting to multi-dimensional intelligence.

Individually, these capabilities are powerful. Together, they form a cohesive detection framework designed to operate in motion, not in hindsight.

This series will unpack how these patterns are engineered safely at scale, how rule evolution can occur without destabilizing stateful pipelines, and how real-time systems can remain both adaptive and resilient under production load. Solving decision latency is not about adding another layer of analytics. It is about rethinking how systems reason, evolve, and intervene as events unfold.

When detection logic becomes dynamic, state becomes strategic, and correlation becomes contextual, organizations move beyond retrospective explanation. They begin to act with precision in the present.

That is the practical foundation for operating on data while it is still alive rather than studying it after value has already decayed.

Prateek Jain, Director of Digital Solutions & AI Strategy

Offline, if he's not spending time with his daughter, he's either on the field playing cricket or in a chair with a good book.

Qais Qadri, Senior Software Engineer

At his core, Qais values honesty and patience. He works with clarity and intention. He leads when needed. He supports without hesitation. Life, for him, is simple—good friends, long drives, meaningful books, and constant self-improvement.
