The most common mistake in data architecture conversations is treating Snowflake and Salesforce Data Cloud as alternatives. They solve different problems. Here's the integration layer that makes both worth having.

The Question That Surfaces in Every Multi-Cloud Engagement

At some point in every Salesforce transformation that involves significant data infrastructure, someone asks a version of this question: "We already have Snowflake — do we still need Data Cloud?"

The framing implies a trade-off. It isn't one.

Snowflake and Salesforce Data Cloud are not alternative solutions to the same problem. They serve fundamentally different architectural purposes, operate at different latencies, and produce different kinds of value. Choosing one over the other isn't a data architecture decision — it's a misunderstanding of what each platform actually does.

During a multi-cloud Salesforce engagement with a global sports and lifestyle brand, this exact question had to be answered explicitly. The client was already in the process of migrating from an S3 data lake to Snowflake as their analytical foundation — a significant infrastructure investment managed by a separate data partner. Simultaneously, the Salesforce implementation scope included Data Cloud as the CDP and activation layer.

The architectural principle we established early: Snowflake is the analytical depth layer. Data Cloud is the real-time activation engine. They're not interchangeable. They're complementary. Getting the integration between them right is what makes both worth having.

What Snowflake Does — and What It Doesn't

Snowflake is a cloud data warehouse. Its core job is storing large volumes of structured and semi-structured data in a way that supports complex analytical queries at scale. It excels at:

Historical depth. Snowflake can hold years of behavioral events, transactional records, product interactions, and operational data at a granularity that no CRM or CDP can match. A query against billions of rows of behavioral history — segmenting customers by 24-month engagement patterns, calculating lifetime value across product lines, identifying cohort-level churn curves — runs well in Snowflake. It does not run in real time in a CDP.

Complex analytics and data science. Data scientists building propensity models, churn prediction algorithms, or CLV scoring models work in Snowflake. The compute environment, the SQL interface, the integration with Python ML frameworks — all of this is native to the warehouse. Data Cloud can consume the outputs of these models. It cannot replace the environment in which they are built.

Data governance and auditability. Snowflake's access controls, data sharing, time-travel capabilities, and query history make it the right layer for governed, auditable data — the kind required for financial reporting, regulatory compliance, or cross-team data sharing agreements.

Cross-source join capability. Joining customer behavioral data from a web analytics platform, transaction records from a commerce system, LMS completion events, and CRM interactions in a single query requires a data warehouse. Snowflake handles this at scale. Data Cloud handles it at the profile level, not the analytical query level.

What Snowflake does not do: it does not push data to downstream systems in real time. It does not maintain a living, continuously updated customer profile. It does not have native connectors to Marketing Cloud journeys or Sales Cloud workflows. And it does not resolve identity across source systems: it stores data, but it does not stitch it.

What Data Cloud Does — and Why That's Different

Salesforce Data Cloud — the CDP layer — is not a data warehouse. It is an activation engine built on a real-time identity graph. Its core jobs are:

Identity resolution in motion. Data Cloud continuously ingests events from connected source systems and resolves them to unified customer profiles using deterministic and probabilistic matching. When a customer completes a course, that event arrives in Data Cloud within minutes and updates their unified profile. When they make a purchase, their commerce history is reflected in their profile before the next Marketing Cloud send fires. This is latency-sensitive work that a batch-loaded warehouse cannot do.

Segment computation and activation. Data Cloud computes segments — "members who completed certification in the last 30 days and have never booked travel" — against live unified profiles, not against a static data extract. Those segments push directly to Marketing Cloud, Sales Cloud, or advertising platforms without an intermediate ETL step.

Calculated insights at the profile level. Engagement scores, churn risk indicators, next-best-action signals — these are computed in Data Cloud against each individual's unified profile and surfaced to downstream systems in real time. An account manager opening a CRM record sees a churn risk score that reflects the customer's behavior from the last 48 hours, not the last data warehouse export.

Triggering downstream workflows. A certification completion event in Data Cloud can trigger a Marketing Cloud journey entry, a Sales Cloud task creation, and an Experience Cloud notification — simultaneously, within minutes of the event occurring. This event-driven architecture is what makes real-time personalization technically possible.

What Data Cloud does not do: it does not hold years of behavioral history at analytical depth. It does not run complex cohort queries across billions of events. It does not serve as the environment where data scientists build models. And it is not the right place to store governed, auditable raw data.
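To make the identity resolution idea concrete, here is a minimal sketch of the deterministic half of the problem: grouping source records that share an exact identifier value. It is not how Data Cloud implements matching internally. The record IDs, identifier types, and the `resolve_identities` function are hypothetical names chosen for illustration, and probabilistic matching is left out entirely.

```python
def resolve_identities(records):
    """Group source records that share any identifier value.

    Each record is (record_id, {identifier_type: value}).
    Returns a dict mapping record_id -> unified profile id.
    """
    parent = {rid: rid for rid, _ in records}

    def find(x):
        # Follow parent links to the root, shortening the path as we go.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def union(a, b):
        ra, rb = find(a), find(b)
        if ra != rb:
            # Keep the lexicographically smaller root so results are stable.
            if rb < ra:
                ra, rb = rb, ra
            parent[rb] = ra

    first_seen = {}  # (identifier_type, normalized value) -> first record id
    for rid, identifiers in records:
        for id_type, value in identifiers.items():
            key = (id_type, value.strip().lower())
            if key in first_seen:
                union(rid, first_seen[key])
            else:
                first_seen[key] = rid

    return {rid: find(rid) for rid, _ in records}


# Hypothetical records from three source systems.
records = [
    ("crm:003A", {"email": "Dana@example.com"}),
    ("lms:L-77", {"email": "dana@example.com", "device_id": "dev-9"}),
    ("commerce:C-12", {"device_id": "dev-9"}),
    ("crm:003B", {"email": "lee@example.com"}),
]
profiles = resolve_identities(records)
```

In this sample, the CRM and LMS records link through a shared email, and the commerce record links to the LMS record through a shared device ID, so all three end up under one unified profile while the second CRM contact stays separate.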

The Integration Architecture

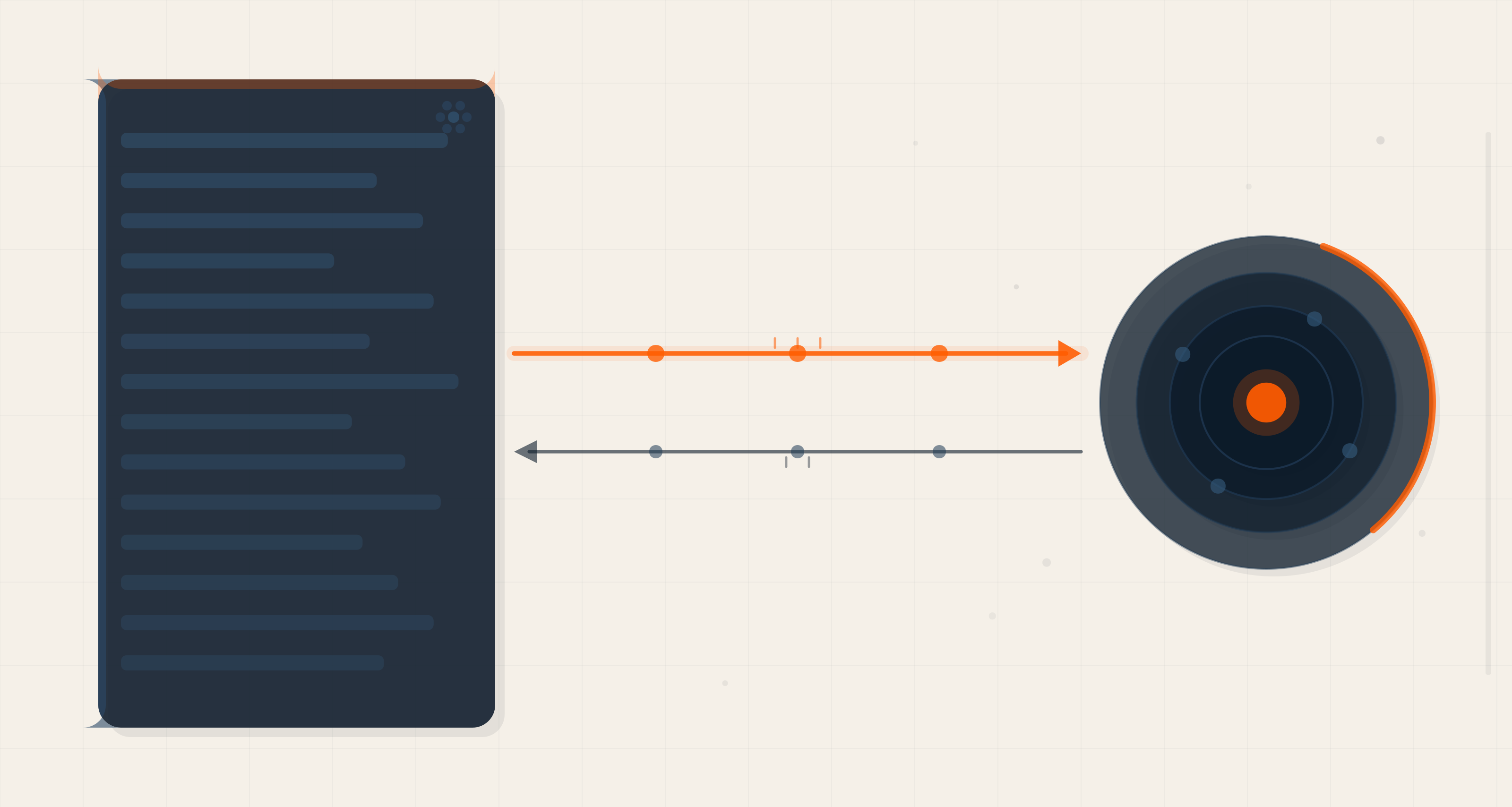

Understanding the distinction leads directly to the integration design: Snowflake and Data Cloud need to exchange data in both directions, at different cadences, for different purposes.

Direction 1: Snowflake → Data Cloud (Historical Seeding and Model Outputs)

The primary flow from Snowflake to Data Cloud serves two purposes:

Historical profile seeding. When Data Cloud is first deployed, unified profiles need to be populated with historical data: years of behavioral events, transaction history, and engagement records that predate the CDP implementation. This data lives in Snowflake. A one-time or periodic bulk transfer seeds the Data Cloud identity graph with this history, giving newly unified profiles the context they need to support segmentation and scoring from day one rather than requiring months of live data accumulation.

Predictive model outputs. Models built in Snowflake — churn scores, CLV estimates, propensity scores — can be written back to Snowflake as calculated fields and then pushed to Data Cloud as profile attributes. This means the sophistication of warehouse-scale data science shows up in real-time activation decisions. A Marketing Cloud journey can reference a churn score computed against 18 months of behavioral history, even though Data Cloud itself only holds a real-time profile window.

The technical pattern: scheduled or event-triggered exports from Snowflake to cloud object storage (S3 or GCS), which Data Cloud then ingests via a data stream connector. For model outputs specifically, the export can be incremental, carrying only the scores that changed since the last run, to minimize transfer volume.
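The incremental export for model outputs can be sketched as a simple diff against the last exported snapshot. This is an illustration under stated assumptions, not the pipeline used in the engagement: the column names, the `tolerance` threshold, and the in-memory dictionaries standing in for Snowflake tables are all hypothetical.

```python
import csv
import io


def changed_scores(current, last_exported, tolerance=0.01):
    """Return rows whose score is new or moved by more than `tolerance`."""
    rows = []
    for customer_id, score in sorted(current.items()):
        previous = last_exported.get(customer_id)
        if previous is None or abs(score - previous) > tolerance:
            rows.append({"customer_id": customer_id, "churn_score": score})
    return rows


def to_csv(rows):
    """Serialize rows to CSV text, the kind of file an ingestion job could pick up."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["customer_id", "churn_score"])
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Hypothetical snapshots: the previous export and the latest model run.
last_exported = {"c-001": 0.42, "c-002": 0.10}
current = {"c-001": 0.42, "c-002": 0.35, "c-003": 0.80}

delta = changed_scores(current, last_exported)
payload = to_csv(delta)
```

Only the customer whose score moved and the customer who is new appear in the export. The unchanged score is skipped, which is the point of exporting incrementally.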

Direction 2: Data Cloud → Snowflake (Enriched Profiles and Event Streams)

Data Cloud should also be writing back to Snowflake, specifically:

Unified identity mappings. The identity resolution work Data Cloud performs — linking a CRM contact ID to an LMS learner ID, a commerce customer ID, and a mobile device ID into a single golden profile — is valuable analytical data. Exporting those mappings to Snowflake means downstream analytical queries can join across source systems using resolved identity rather than raw identifiers. This is a significant analytical capability upgrade.

Engagement event streams. Data Cloud captures behavioral events as they flow through the activation layer — journey entries, email sends, purchase events triggered by CDP segments. Writing these events back to Snowflake creates an auditable record of activation-layer activity that can be included in full-funnel analytics and attribution models.

Segment membership history. Which customers were in which segments, at what time, is valuable historical data. Segment snapshots from Data Cloud written to Snowflake enable cohort analysis against activation-layer segmentation — understanding, for example, whether customers who were in the "churn risk" segment six months ago and received the reactivation journey are still active today.
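As a rough illustration of why exported identity mappings matter analytically, the sketch below joins LMS and commerce data through a resolved-identity table. It uses Python's built-in `sqlite3` as a stand-in for Snowflake so that it runs anywhere. All table names, column names, and sample values are assumptions made for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    -- Hypothetical export of Data Cloud identity mappings.
    CREATE TABLE identity_map (source_system TEXT, source_id TEXT, unified_id TEXT);
    CREATE TABLE lms_completions (learner_id TEXT, course TEXT);
    CREATE TABLE commerce_orders (customer_id TEXT, amount REAL);

    INSERT INTO identity_map VALUES
        ('lms', 'L-77', 'u-1'), ('commerce', 'C-12', 'u-1'),
        ('lms', 'L-88', 'u-2'), ('commerce', 'C-34', 'u-2');
    INSERT INTO lms_completions VALUES ('L-77', 'Coaching 101'), ('L-88', 'Coaching 101');
    INSERT INTO commerce_orders VALUES ('C-12', 120.0), ('C-12', 30.0);
    """
)

# Aggregate each source separately, then join on the resolved identity.
# Aggregating first avoids row fan-out when a customer has many rows per source.
rows = conn.execute(
    """
    WITH completions AS (
        SELECT m.unified_id, COUNT(*) AS courses_completed
        FROM lms_completions c
        JOIN identity_map m
          ON m.source_system = 'lms' AND m.source_id = c.learner_id
        GROUP BY m.unified_id
    ),
    spend AS (
        SELECT m.unified_id, SUM(o.amount) AS total_spend
        FROM commerce_orders o
        JOIN identity_map m
          ON m.source_system = 'commerce' AND m.source_id = o.customer_id
        GROUP BY m.unified_id
    )
    SELECT completions.unified_id,
           completions.courses_completed,
           COALESCE(spend.total_spend, 0) AS total_spend
    FROM completions
    LEFT JOIN spend ON spend.unified_id = completions.unified_id
    ORDER BY completions.unified_id
    """
).fetchall()
```

Without the mapping table, the learner ID and the commerce customer ID share no join key. With it, a question like "how much do certified members spend" becomes a straightforward query.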

The Sequencing Dependency

One of the most important architectural decisions in this client engagement was the explicit sequencing of the Snowflake and Data Cloud work streams.

The data infrastructure partner was handling the Snowflake migration, moving from an S3 data lake to a structured data warehouse, while the Salesforce implementation was under way. The two work streams overlapped on the calendar, but they were not independent. There was a sequencing dependency between them:

CDP activation quality depends on the quality of the data it ingests. Data Cloud's identity resolution and calculated insights are only as reliable as the behavioral history and dimensional data that seeds them. If Snowflake is still being migrated — if the data hasn't been cleaned, modeled, and made queryable — the historical seeding step cannot happen. Data Cloud profiles will be thin, segmentation will be imprecise, and model outputs will be unreliable.

The practical implication for implementation planning: don't scope your Data Cloud activation milestones as if Snowflake is already done when it isn't. The activation timeline has to account for the warehouse readiness milestone. A CDP that launches before its historical data source is ready produces a degraded experience from day one — and first impressions of activation quality are hard to reset.

In this engagement, the explicit callout in the sequencing recommendation was: Snowflake implementation (handled by the data infrastructure partner) is a prerequisite for full CDP activation, not a parallel workstream that can slip without consequence.
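One lightweight way to keep this dependency visible in planning is to write the milestones down as a dependency graph and derive the order from it. The sketch below uses Python's standard-library `graphlib`. The milestone names are hypothetical and were not taken from the engagement plan.

```python
from graphlib import TopologicalSorter

# Each milestone maps to the set of milestones that must finish before it.
# Names are illustrative placeholders.
dependencies = {
    "snowflake_migration": set(),
    "warehouse_data_modeled": {"snowflake_migration"},
    "data_cloud_provisioned": set(),
    "historical_seeding": {"warehouse_data_modeled", "data_cloud_provisioned"},
    "full_cdp_activation": {"historical_seeding"},
}

# A valid execution order that respects every prerequisite.
order = list(TopologicalSorter(dependencies).static_order())


def blocked_by(milestone, done):
    """Return the unmet prerequisites of `milestone`, given completed ones."""
    return sorted(dependencies[milestone] - set(done))


# Data Cloud is provisioned, but the warehouse is not yet modeled.
unmet = blocked_by("historical_seeding", ["data_cloud_provisioned"])
```

Here `unmet` lists the warehouse modeling milestone, which mirrors the point above: Data Cloud being provisioned is not enough to start seeding if the warehouse is not ready.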

The GA4 Consideration

One data source that appeared in the architecture review and deserves specific attention is GA4 — Google Analytics 4.

GA4's reporting APIs return aggregated report data, not the raw event-level history that profile seeding needs. The way to capture event-level GA4 data is to enable BigQuery export, which is not retroactive: it starts accumulating data from the moment it's configured. Once BigQuery export is enabled, GA4 event data flows daily into BigQuery, from where it can be moved to Snowflake via a pipeline (BigQuery → S3 → Snowpipe → Snowflake is one common pattern).

The implication: if GA4 behavioral data is a meaningful input to Data Cloud segmentation or identity resolution — and for most B2C brands with significant web traffic, it should be — the BigQuery export needs to be configured well before the CDP implementation begins. If it's not configured until CDP launch, you have no historical web behavioral data to seed profiles with. You are starting from zero on a data source that could have months of history if the pipeline had been set up earlier.

This is one of those architectural decisions that costs nothing to make correctly and is expensive to correct retroactively. Configure BigQuery export. Build the Snowflake pipeline. Let the data accumulate. By the time CDP implementation begins, you have a meaningful behavioral dataset to work with.
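One step such a pipeline usually needs is flattening GA4's nested event parameters into columns before the data is useful for joins. The sketch below shows that step on a single hand-written row. The field names follow the GA4 BigQuery export schema as commonly documented (`event_params` as a list of key and typed-value pairs, `event_timestamp` in microseconds), but treat them as assumptions to verify against the current schema. The sample values are invented.

```python
from datetime import datetime, timezone


def flatten_ga4_event(row):
    """Flatten one GA4 export row (as a dict) into a single-level dict."""
    flat = {
        "user_pseudo_id": row["user_pseudo_id"],
        "event_name": row["event_name"],
        # event_timestamp is assumed to be microseconds since the Unix epoch.
        "event_time": datetime.fromtimestamp(
            row["event_timestamp"] / 1_000_000, tz=timezone.utc
        ).isoformat(),
    }
    for param in row.get("event_params", []):
        value = param["value"]
        # Take the first typed value field that is populated.
        for field in ("string_value", "int_value", "float_value", "double_value"):
            if value.get(field) is not None:
                flat[f"param_{param['key']}"] = value[field]
                break
    return flat


# Invented sample row shaped like a GA4 export record.
sample_row = {
    "user_pseudo_id": "1234.5678",
    "event_name": "page_view",
    "event_timestamp": 1_700_000_000_000_000,
    "event_params": [
        {"key": "page_location", "value": {"string_value": "https://example.com/shop"}},
        {"key": "engagement_time_msec", "value": {"int_value": 5300}},
    ],
}
flat = flatten_ga4_event(sample_row)
```

The flattened record carries `user_pseudo_id` alongside the event attributes, which is the identifier a later identity resolution step would need to link web behavior to a known customer.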

The Governance Question

One aspect of the Snowflake–Data Cloud architecture that is frequently underestimated is data governance across the boundary between the two systems.

Data in Snowflake is governed by whatever access controls, data contracts, and retention policies are in place in the warehouse. When that data crosses into Data Cloud, different governance rules apply — Salesforce's data classification system, consent management framework, and retention settings.

This creates three specific governance requirements:

Consent alignment. If a customer has opted out of marketing communications, that preference needs to be respected in both Snowflake analytics and Data Cloud activation. The consent status that lives in the CRM or a consent management platform needs to propagate to both systems. A customer suppressed in Salesforce who is still included in a Snowflake export that seeds a Data Cloud segment can end up receiving a communication they opted out of. Consent propagation across the full data architecture is non-optional.

PII handling. Not all data that can live in Snowflake should live in Data Cloud. Raw PII — full names, phone numbers, payment details — needs to be evaluated against what Data Cloud actually needs for identity resolution and activation. The principle is minimum necessary data: use hashed identifiers where possible, and keep sensitive PII in Snowflake's governed environment rather than duplicating it into the activation layer.

Retention alignment. Snowflake can hold data indefinitely, and its Time Travel feature additionally keeps prior versions of changed or deleted data queryable for a configurable window, so deleted records can remain recoverable for that period. Data Cloud has configurable retention windows that affect profile history. These retention policies need to be defined consistently with the business's regulatory requirements, particularly for customers in regions with data minimization obligations, and enforced at both layers.
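The consent and PII requirements above can be sketched as a filter applied to any export before it leaves the warehouse. This is a minimal illustration, not a compliance implementation. The field names, the `prepare_for_activation` helper, and the choice of SHA-256 over a normalized email are assumptions, and the right hashing and normalization rules depend on what the receiving system expects.

```python
import hashlib


def hash_email(email):
    """SHA-256 of the trimmed, lowercased email, hex-encoded."""
    normalized = email.strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def prepare_for_activation(rows, opted_out_ids, allowed_fields):
    """Drop opted-out customers, keep only allowed fields, hash the email."""
    prepared = []
    for row in rows:
        if row["customer_id"] in opted_out_ids:
            continue  # Consent alignment: suppressed customers are never exported.
        # Minimum necessary data: copy only explicitly allowed fields.
        out = {key: row[key] for key in allowed_fields if key in row}
        out["email_sha256"] = hash_email(row["email"])
        prepared.append(out)
    return prepared


# Invented warehouse rows; raw email and phone should not reach the activation layer.
warehouse_rows = [
    {"customer_id": "c-001", "email": " Dana@Example.com ", "phone": "555-0100", "churn_score": 0.35},
    {"customer_id": "c-002", "email": "lee@example.com", "phone": "555-0101", "churn_score": 0.80},
]
export = prepare_for_activation(
    warehouse_rows,
    opted_out_ids={"c-002"},
    allowed_fields=["customer_id", "churn_score"],
)
```

Using an allowlist rather than a blocklist means a new sensitive column added to the warehouse table stays out of the export until someone deliberately includes it.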

The Principle: Two Layers, One Architecture

The organizations that get the most value from both Snowflake and Salesforce Data Cloud are the ones that treat them as layers of a single architecture rather than competing investments in separate architectures.

Snowflake provides the depth — the ability to store and analyze years of behavioral history at the granularity needed for complex data science. Data Cloud provides the currency — the ability to maintain living, real-time profiles and push activation decisions into downstream systems within minutes of an event occurring.

Neither layer is complete without the other. Snowflake without Data Cloud is an analytical asset that doesn't drive real-time customer experience. Data Cloud without Snowflake is an activation engine without historical depth, producing profiles that are current but thin, and models that are fast but underinformed.

The integration between them — bidirectional, well-governed, sequenced correctly — is what converts two investments into a compounding architecture.

Read Next

The Data Cloud layer that Snowflake feeds is only as valuable as the identity resolution it's built on. If customer identities are fragmented across source systems before the data reaches Data Cloud, the analytics in Snowflake and the activation in Data Cloud will both operate on incomplete pictures.

→ The Identity Problem Your CRM Cannot Solve (And Why a CDP Is the Only Answer)

Axelerant works with enterprises on Salesforce Data Cloud implementation, data architecture strategy, and multi-cloud transformation. If your team is navigating the Snowflake–Salesforce integration design, we'd be glad to talk through how we approach it.

Bassam Ismail, Director of Digital Engineering

Away from work, he likes cooking with his wife, reading comic strips, or playing around with programming languages for fun.
