Introduction

This story does not start with a strategy. It starts with me getting tired of a problem I had with myself.

Going through Slack takes up a meaningful chunk of my day. There are too many threads, too many small commitments I made yesterday and forgot to circle back on, too many quiet windows where a thirty-second nudge from me would have saved a week of follow-up later. I am not bad at any of this. I am busy. The work compounds.

So I built something for myself. Not for the company. For me.

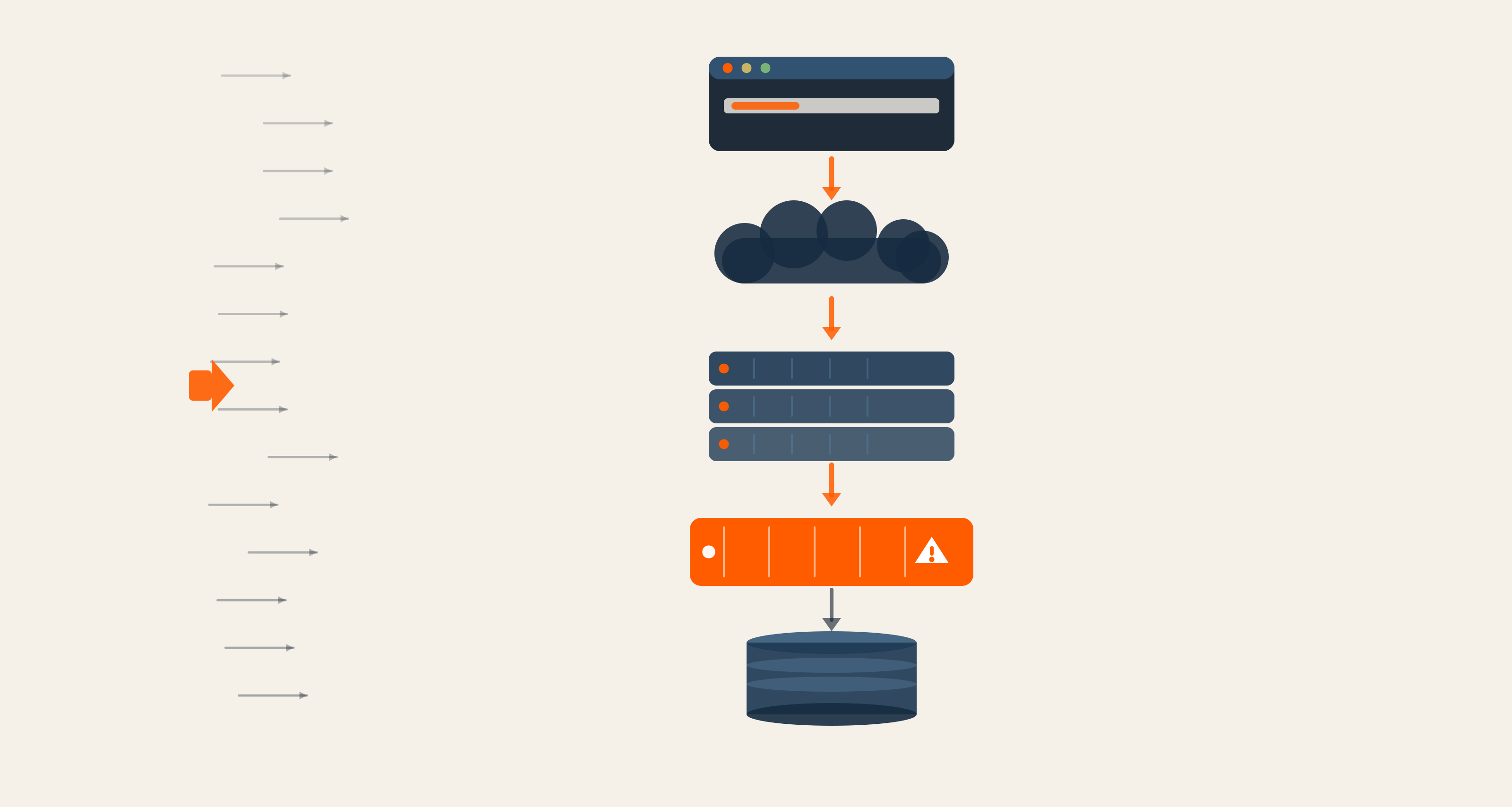

I connected Claude to my personal knowledge-base project, a tool I run on top of qmd, plus my work tools (Slack, Jira, and a few others). I gave the model a narrow brief. Scan for situations where action is expected of me, but I have not taken it, or where someone else's input is needed but has not come through. Decide whether to act or to flag it for me. Act, within a list of constraints I wrote down myself.

Three steps. Scan. Decide. Act.

The rest of the team did not know any of this until one morning when I posted a screenshot of the agent's daily activity log into a working group channel. I had been on vacation for a week. The agent had been quietly working in my absence. Two slow opportunity threads had been gently nudged forward. A third had been flagged for me to review when I was back. The team's reaction was not what I expected.

The first reaction was not "wow, cool." It was a long pause, and then "wait, hold on a second."

That pause is the entire reason I am writing this.

What I Actually Built

It is worth being precise about what this is and what it is not.

It is not AXEL. AXEL is our internal AI assistant, embedded across team workflows, owned and operated as a company asset. What I engineered is something different. It is a personal agent on my personal stack. It runs for one person. It reports to one person. It commits nothing. It deletes nothing. It nudges, and it logs.

The architecture is small and clear. My knowledge-base project, built on qmd, gives the model a searchable graph of everything I have ingested into it over time. The work integrations give it read-and-act access to Slack and Jira. A short policy file written in plain English defines the bounds. Scan a fixed set of channels and threads. Apply a fixed set of conditions. Take a fixed set of actions. Anything outside those bounds, escalate to me.
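To make the shape of that loop concrete, here is a minimal Python sketch. It is an illustration only: the real policy is a plain-English file, and every name here (`Policy`, `Thread`, `awaiting_reply`, `involves_customer`, the `#opportunities` channel) is a hypothetical stand-in rather than the actual conditions or integrations.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    NO_ACTION = "no_action"
    NUDGE = "nudge"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class Policy:
    channels: frozenset         # the fixed set of channels to scan
    allowed_actions: frozenset  # the fixed set of actions the agent may take


@dataclass(frozen=True)
class Thread:
    channel: str
    awaiting_reply: bool     # hypothetical condition: someone is waiting on a response
    involves_customer: bool  # hypothetical condition: an external party is on the thread


def scan(threads, policy):
    """Keep only threads in channels the policy names."""
    return [t for t in threads if t.channel in policy.channels]


def decide(thread, policy):
    """Apply the fixed conditions; anything outside the bounds escalates."""
    if not thread.awaiting_reply:
        return Verdict.NO_ACTION
    if thread.involves_customer:
        return Verdict.ESCALATE
    if Verdict.NUDGE in policy.allowed_actions:
        return Verdict.NUDGE
    return Verdict.ESCALATE


policy = Policy(
    channels=frozenset({"#opportunities"}),
    allowed_actions=frozenset({Verdict.NUDGE}),
)
threads = [
    Thread("#opportunities", awaiting_reply=True, involves_customer=False),
    Thread("#opportunities", awaiting_reply=True, involves_customer=True),
    Thread("#random", awaiting_reply=True, involves_customer=False),
]
verdicts = [decide(t, policy) for t in scan(threads, policy)]
```

The point of the sketch is the default: `decide` never invents an action. If a case does not match an explicitly allowed path, it falls through to escalation.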

The output is a daily digest message I get every morning. A real sample from one day had three sections: threads where no action was needed, with reasoning; threads where a nudge had been sent, with the nudge text included; and threads flagged for me to handle directly, because the reasoning chain produced a "this needs a human" verdict.
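A digest with that three-part structure could be assembled roughly as follows. This is a sketch under assumptions: the entry fields (`outcome`, `thread`, `reasoning`, `nudge_text`), the section titles, and the sample entries are all made up for illustration and are not taken from the real log.

```python
from collections import defaultdict

# Section order mirrors the three parts of the digest described above.
SECTIONS = [
    ("no_action", "No action needed"),
    ("nudged", "Nudges sent"),
    ("flagged", "Flagged for me"),
]


def build_digest(entries):
    """Group log entries by outcome and render one plain-text message."""
    grouped = defaultdict(list)
    for entry in entries:
        grouped[entry["outcome"]].append(entry)

    lines = []
    for key, title in SECTIONS:
        lines.append(f"{title} ({len(grouped[key])})")
        for entry in grouped[key]:
            # Every entry carries its reasoning so a human can audit it.
            lines.append(f"- {entry['thread']}: {entry['reasoning']}")
            if key == "nudged":
                lines.append(f"  sent: {entry['nudge_text']}")
        lines.append("")
    return "\n".join(lines).rstrip()


# Hypothetical sample entries.
entries = [
    {"outcome": "no_action", "thread": "thread-a", "reasoning": "reply already posted"},
    {"outcome": "nudged", "thread": "thread-b", "reasoning": "quiet past the threshold",
     "nudge_text": "Any update on this?"},
    {"outcome": "flagged", "thread": "thread-c", "reasoning": "this needs a human"},
]
digest = build_digest(entries)
```

Keeping the reasoning and the exact nudge text next to each entry is what lets someone else read the digest and disagree with a specific decision.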

The agent does not respond to questions on my behalf. It does not write code. It does not push commits. It moves slow conversations a small step forward, and it tells me exactly what it did.

That last part, the daily log, is the design decision that made the rest possible.

Why The Team Got Nervous

When the screenshot landed in the channel, the immediate response was not enthusiastic. It was scrutiny.

- Why did it pick those threads?
- Why not the others?
- What if it picks the wrong one tomorrow?
- What does the policy actually say?
- What happens if a model update changes how it interprets a thread?
- What if it nudges a customer on a thread it should not have?

These are the right questions. The fact that the team asked them out loud, at the same time, in the same channel, told me something I want to write down.

A model that solves a hard problem today gets framed as a win. A model that confidently does the wrong thing tomorrow gets framed as the model's fault. Both framings are wrong. The right framing is that the agent is only as useful as the system around it. If the policy is fuzzy, the agent is fuzzy. If the kill switch is missing, the agent is dangerous. If nobody can read the daily log and understand why a decision was made, the agent is opaque.

I was able to answer most of those questions because I had done the work first. The policy was written down. The reasoning trace was visible in the daily log. The list of allowed actions was explicit. The kill switch was a single config flip.
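As an illustration of what "a single config flip" plus an explicit action list can mean in practice, here is a small Python sketch. The config keys (`enabled`, `allowed_actions`) and the `send` callback are assumptions for the example, not the actual configuration or any real Slack or Jira API.

```python
def act(action, thread_id, config, send, log):
    """Run one action only if the agent is enabled and the action is allowed.

    Every path appends to the log, so a skipped action is as visible as a sent one.
    """
    record = {"thread": thread_id, "action": action}

    # Kill switch: checked first, before anything else can happen.
    if not config.get("enabled", False):
        log.append({**record, "status": "skipped: agent disabled"})
        return False

    # Explicit allow-list: unknown actions are never attempted.
    if action not in config.get("allowed_actions", ()):
        log.append({**record, "status": "escalated: action not allowed"})
        return False

    send(thread_id, action)
    log.append({**record, "status": "done"})
    return True


# Usage with a stand-in sender that just records what would have been sent.
sent, log = [], []
config = {"enabled": True, "allowed_actions": ["nudge"]}

def record_send(thread_id, action):
    sent.append((thread_id, action))

act("nudge", "T1", config, record_send, log)   # sent
act("delete", "T2", config, record_send, log)  # not on the allow-list
config["enabled"] = False                      # the single flip
act("nudge", "T3", config, record_send, log)   # skipped
```

Note that a missing `enabled` key is treated as off, so a broken or partial config fails closed rather than open.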

If I had not done that work, the agent would have been turned off the same day the screenshot landed. Probably for good. And honestly, that would have been the right call.

The Boring Part That Made This Possible

The screenshot is the visible part. It is also the smallest part of the work.

The much larger part came before. I spent time deciding what "going cold" means before I ever connected the model to Slack. I spent more time deciding what an "appropriate nudge" looks like, in language a human reading the agent's logs could verify. I spent time defining what counts as a follow-up and what counts as a fresh question that requires me to answer. Those two phrases sound obvious until you ask three people to define them and get three answers.
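One way to see why the definitions matter is to try writing "going cold" as a predicate. The version below is purely illustrative: the 48-hour threshold and the `resolved` flag are assumptions chosen for the example, not the definition I actually settled on.

```python
from datetime import datetime, timedelta, timezone

# Assumed threshold for the example; the real value is a policy decision.
COLD_AFTER = timedelta(hours=48)


def is_going_cold(last_message_at, resolved, now, cold_after=COLD_AFTER):
    """A thread is 'going cold' if it is unresolved and has been quiet too long."""
    if resolved:
        return False
    return (now - last_message_at) >= cold_after


now = datetime(2024, 1, 10, 9, 0, tzinfo=timezone.utc)
quiet_three_days = is_going_cold(now - timedelta(hours=72), resolved=False, now=now)
quiet_half_day = is_going_cold(now - timedelta(hours=12), resolved=False, now=now)
```

Even this tiny function forces choices a human can argue with: whether weekends count, who decides a thread is resolved, and whether 48 hours is right for every channel.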

I also wrote down what the agent is allowed to do, in language another engineer could read and disagree with. I wrote down the rollback path. I wrote down what would have to be true for the agent to be turned off entirely, and how I would notice that it should be.

None of this is glamorous. All of it made the team's pause a calm one rather than a panicked one.

This is what I keep telling myself about agents in general. The agent is the visible part. The system around the agent is the actual product. Skip the system, and you do not have an agent. You have a confident bot making noise in the wrong direction.

I have seen that movie elsewhere. It does not end well.

What This Says About Where We Go Next

Watching this experiment land has changed how I am thinking about agents inside the company, and how the team is too.

We are not in a hurry to ship "AXEL nudges your Slack threads" as a team-wide feature. AXEL is a different system shape. It serves teams, not individuals. The governance bar for any agent that touches multiple people's work is much higher than for an agent that touches one person's work. We will get there. We will get there by building the same kind of policy, logging, and kill switch I wrote, just at a different scale.

In the meantime, I am encouraging more people on the team to try the pattern for themselves: scan, decide, act, with a daily log and a policy a human can read, inside the same kind of guardrails I wrote first.

What interests me is not that I built an agent. It is that the team's reaction to seeing it work was the right reaction. Skepticism. Questions. A demand for trace and policy. That collective instinct protects the rest of the company from shipping the wrong thing too quickly. I am glad I work somewhere where the first response to a working agent is "wait, hold on a second."

If you are thinking about giving an agent room to act on your behalf, we are happy to think through the governance with you. The boring stuff is the actual product.

Swarad Mokal, Technical Program Manager

Big time Manchester United fan, avid gamer, web series binge watcher, and handy auto mechanic.
