Introduction

A real story about how AI shortened a diagnosis from twelve months of avoidance to one afternoon of looking, and what made that possible.

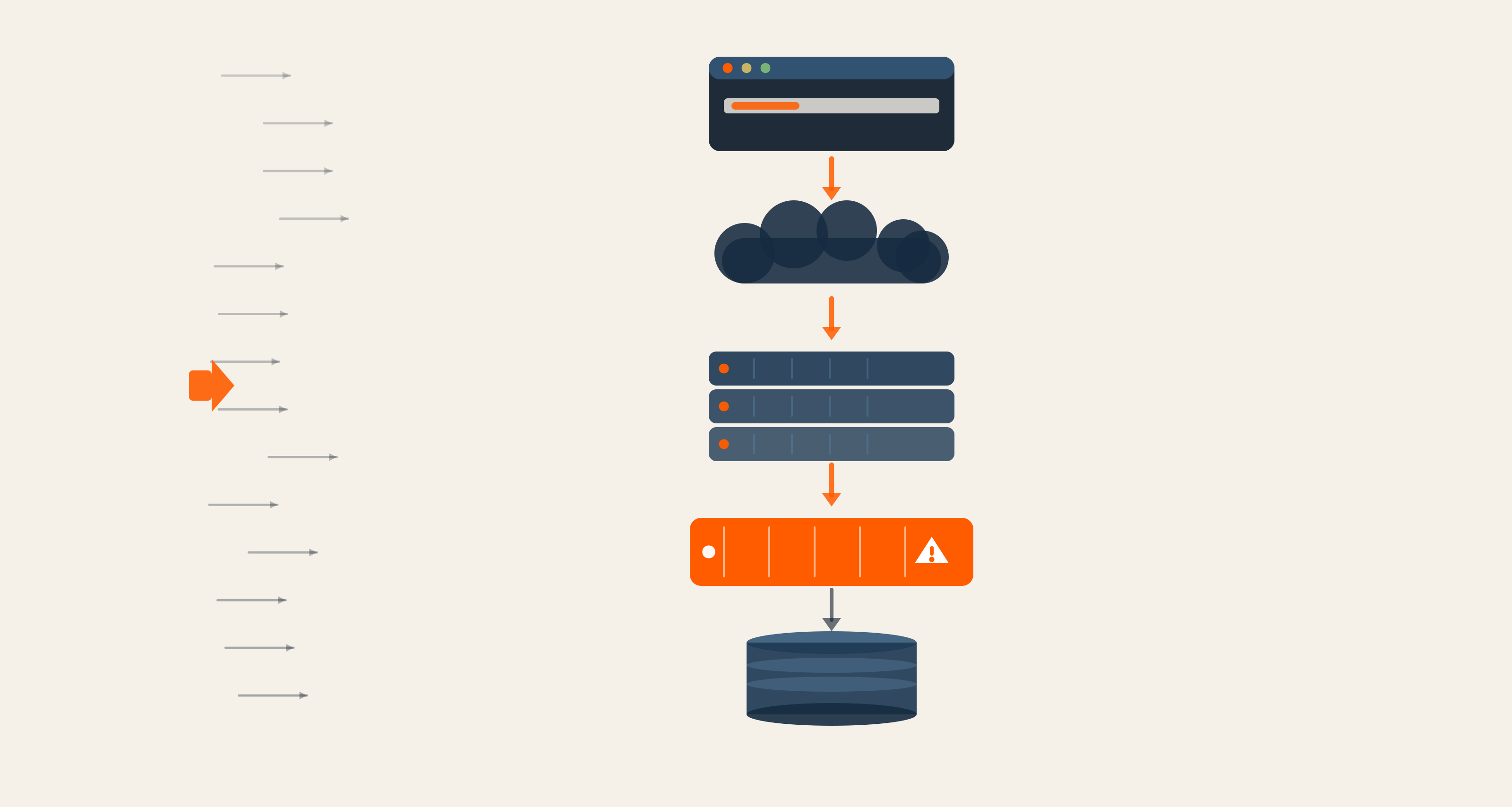

It was a five-layer caching stack that nobody had looked at properly in over a year.

Cloudflare. Varnish. Page cache. Dynamic cache. Drupal core. Every layer added by someone with a perfectly good reason at the time. None of them ever revisited together.

The site was hitting origin 466,000 times a day. That number was supposed to be much smaller. Everyone assumed at least one of the layers above was doing its job. Five separate teams had touched these layers over the last twelve months. Nobody owned the stack as a whole.

When we finally sat down to investigate, the diagnosis took an afternoon.

By the end of week two, origin requests had dropped to 21,000 a day. A 95% reduction. The traffic itself had not changed at all.

This is a post about that diagnosis. More specifically, it is a post about what AI actually contributed and what it did not, because every part of the fix was done by humans. The only thing AI did was make the problem impossible to keep avoiding.

What We Walked Into

There was no single broken thing. That was the problem.

The site had been performing acceptably on the surface metrics. P95 was within tolerance. Cache hit rates, when you looked at them per layer in isolation, looked fine. Cloudflare reported a healthy hit ratio. Varnish reported a healthy hit ratio. Drupal's internal page cache reported a healthy hit ratio.

But aggregate origin traffic was high enough that the team had been quietly upsizing infrastructure to handle it. The cost line had been moving up steadily for months. Nobody had escalated it because the platform was not failing. It was just expensive in a way that did not look obvious from any single dashboard.

This is the worst kind of operational debt. There is no incident. There is no postmortem. There is just a slowly compounding bill that everyone has learned to look past.

Here is what was actually happening, only nobody had pieced it together yet. Cloudflare was caching aggressively. Varnish behind it was caching aggressively. The Drupal page cache was caching aggressively. Each layer was working. Each layer was ignoring the same problem.

A bug deep in Drupal core was emitting a Vary: Cookie header on responses that had no cookie dependency. That single header meant every cache layer above it had to key responses on the cookie value, so each authenticated session got its own cache entry. Hit rates looked fine because most variants did get reused within a session. But every new session triggered a fresh chain of cache misses all the way to the origin.
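To make the mechanism concrete, here is a minimal Python sketch of how a shared cache might build its lookup key when a response carries a Vary header. The cache_key function, the /news path, and the SESSabc cookie values are all hypothetical. Real caches such as Cloudflare or Varnish have their own key logic, so treat this as an illustration under simplifying assumptions, not a description of any one layer.

```python
from typing import Mapping


def cache_key(method: str, url: str,
              request_headers: Mapping[str, str], vary: str) -> tuple:
    """Build a simplified cache key: method, URL, plus the value of every
    request header named in the response's Vary header."""
    req = {k.lower(): v for k, v in request_headers.items()}
    names = sorted(n.strip().lower() for n in vary.split(",") if n.strip())
    varied = tuple((n, req.get(n, "")) for n in names)
    return (method.upper(), url, varied)


# Two hypothetical sessions requesting the same page.
session_a = {"Cookie": "SESSabc=111"}
session_b = {"Cookie": "SESSabc=222"}

with_vary = {cache_key("GET", "/news", h, "Cookie")
             for h in (session_a, session_b)}
without_vary = {cache_key("GET", "/news", h, "")
                for h in (session_a, session_b)}

# With Vary: Cookie each session gets its own entry; without it they share one.
print(len(with_vary), len(without_vary))  # prints: 2 1
```

The page is identical for both sessions, yet the first case stores it twice. Multiply that by every new session and every layer, and the per-layer hit ratio can still look healthy while the origin keeps getting asked for the same content.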

The bug had been there for over a year. It had been there long enough that the request volume to the origin had become someone's normal.

How A Diagnosis That Should Have Taken A Sprint Took An Afternoon

The reason this kind of issue stays hidden is not that it is hard to find. It is that finding it requires holding five layers of cache behavior in your head simultaneously and asking why they collectively are not doing what each reports doing individually.

That is exactly the kind of problem AI is good at.

We sat down with Claude and walked it through every layer. The headers each one was emitting. The TTLs each was honoring. The conditions each was using to decide whether to serve a cached response. We did this in a single conversation. The model did not know the answer. Nobody did. But it could hold the entire picture at once and ask the questions that a human, distracted by everything else on their desk, would not.

Within an hour, the conversation had narrowed to a hypothesis: there was a Vary header coming from somewhere unexpected. Within another hour, we had isolated it to a function in Drupal core that almost no one touches because almost no one reads it. By the end of the afternoon, we had a patch.
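The kind of per-layer summary we mean can be sketched in a few lines of Python. This is an assumption-laden illustration, not the tooling we used: the shared_ttl, vary_fields, and summarize helpers are hypothetical, and the sample headers are invented for the example, not captured from the site.

```python
import re
from typing import Mapping, Optional


def shared_ttl(cache_control: str) -> Optional[int]:
    """Return the TTL in seconds a shared cache would use, or None if absent.

    s-maxage is checked first because shared caches prefer it over max-age.
    """
    for directive in ("s-maxage", "max-age"):
        match = re.search(rf"(?:^|[\s,]){directive}=(\d+)", cache_control.lower())
        if match:
            return int(match.group(1))
    return None


def vary_fields(vary: str) -> list[str]:
    """Split a Vary header into sorted, lowercased field names."""
    return sorted(f.strip().lower() for f in vary.split(",") if f.strip())


def summarize(layers: Mapping[str, Mapping[str, str]]) -> dict[str, dict]:
    """Reduce each layer's response headers to the facts that affect caching."""
    summary = {}
    for name, headers in layers.items():
        lowered = {k.lower(): v for k, v in headers.items()}
        summary[name] = {
            "ttl": shared_ttl(lowered.get("cache-control", "")),
            "vary": vary_fields(lowered.get("vary", "")),
        }
    return summary


# Hypothetical headers, one dict per layer, as you might copy them from curl.
observed = {
    "edge": {"Cache-Control": "public, max-age=300, s-maxage=3600",
             "Vary": "Accept-Encoding, Cookie"},
    "origin": {"Cache-Control": "public, max-age=300", "Vary": "Cookie"},
}

for layer, info in summarize(observed).items():
    print(layer, info)
```

Laying the layers side by side like this is what makes an unexpected Vary field stand out. Viewed one dashboard at a time, it never does.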

The patch was small. Removing the offending header restored normal cache behavior at every layer above it. The fix shipped the next day. Within forty-eight hours, origin traffic had collapsed.
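The actual patch lives in Drupal core and is not reproduced here. As a rough Python sketch of the idea only, the hypothetical helpers below drop Cookie from a Vary value unless the response genuinely depends on the cookie. The depends_on_cookie flag is an assumption standing in for whatever the real code uses to decide that.

```python
def drop_vary_field(vary: str, field: str) -> str:
    """Return the Vary value with one field name removed (case-insensitive)."""
    kept = [part.strip() for part in vary.split(",")
            if part.strip() and part.strip().lower() != field.lower()]
    return ", ".join(kept)


def corrected_vary(vary: str, depends_on_cookie: bool) -> str:
    """Keep Cookie in Vary only when the response really differs per cookie."""
    return vary if depends_on_cookie else drop_vary_field(vary, "Cookie")


print(corrected_vary("Accept-Encoding, Cookie", depends_on_cookie=False))
# prints: Accept-Encoding
print(corrected_vary("Accept-Encoding, Cookie", depends_on_cookie=True))
# prints: Accept-Encoding, Cookie
```

The second case matters as much as the first. Responses that really are personalized still need to vary on the cookie, which is why the change had to be validated against authenticated flows.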

It is tempting to call this an AI win. It is more accurate to call it an AI-assisted attention win.

The patch had to be reviewed. The behavior change had to be validated against authenticated user flows, so we did not break personalization downstream. Cache invalidation had to be coordinated across layers so we did not serve stale content. None of that work was done by a model. All of it was done by a human team that had been waiting for a reason to look at this carefully.

What Actually Changed

The numbers on this one are easy to state and easy to verify.

- Before: 466,000 origin requests per day, with the count slowly rising as traffic grew. Behind that, infrastructure that had been quietly scaled up over the year to absorb the load.

- After: 21,000 origin requests per day. A 95% drop. Cache hit ratios at the edge improved. Time to first byte improved for cold-session requests because the chain no longer went all the way back. Infrastructure costs are now a line item the team is comfortable defending.
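For anyone who wants to check the headline figure, the arithmetic fits in a few lines of Python. The percent_reduction helper is just an illustrative name; the two request counts are the ones stated above.

```python
def percent_reduction(before: int, after: int) -> float:
    """Percentage drop from the before count to the after count."""
    return (before - after) / before * 100


before, after = 466_000, 21_000
print(f"{percent_reduction(before, after):.1f}% fewer origin requests")  # 95.5%
print(f"{before / after:.1f}x fewer requests reaching origin")           # 22.2x
```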

The work also surfaced something more useful than the number. We learned which of the five layers were actually adding value once the bug was fixed. Two of them turned out to be doing very little. A future cleanup round will likely further simplify the stack.

This is a second-order effect that does not appear on any single dashboard. Once you fix the thing that has been masking the truth, you find out what is actually load-bearing.

What This Says About Where AI Helps

We are wary of stories that frame AI as the protagonist. They tend to age badly. A model that solved a hard problem today often gets the credit for a solution that the team had already half-figured out, and does not get blamed when it confidently proposes the wrong fix tomorrow.

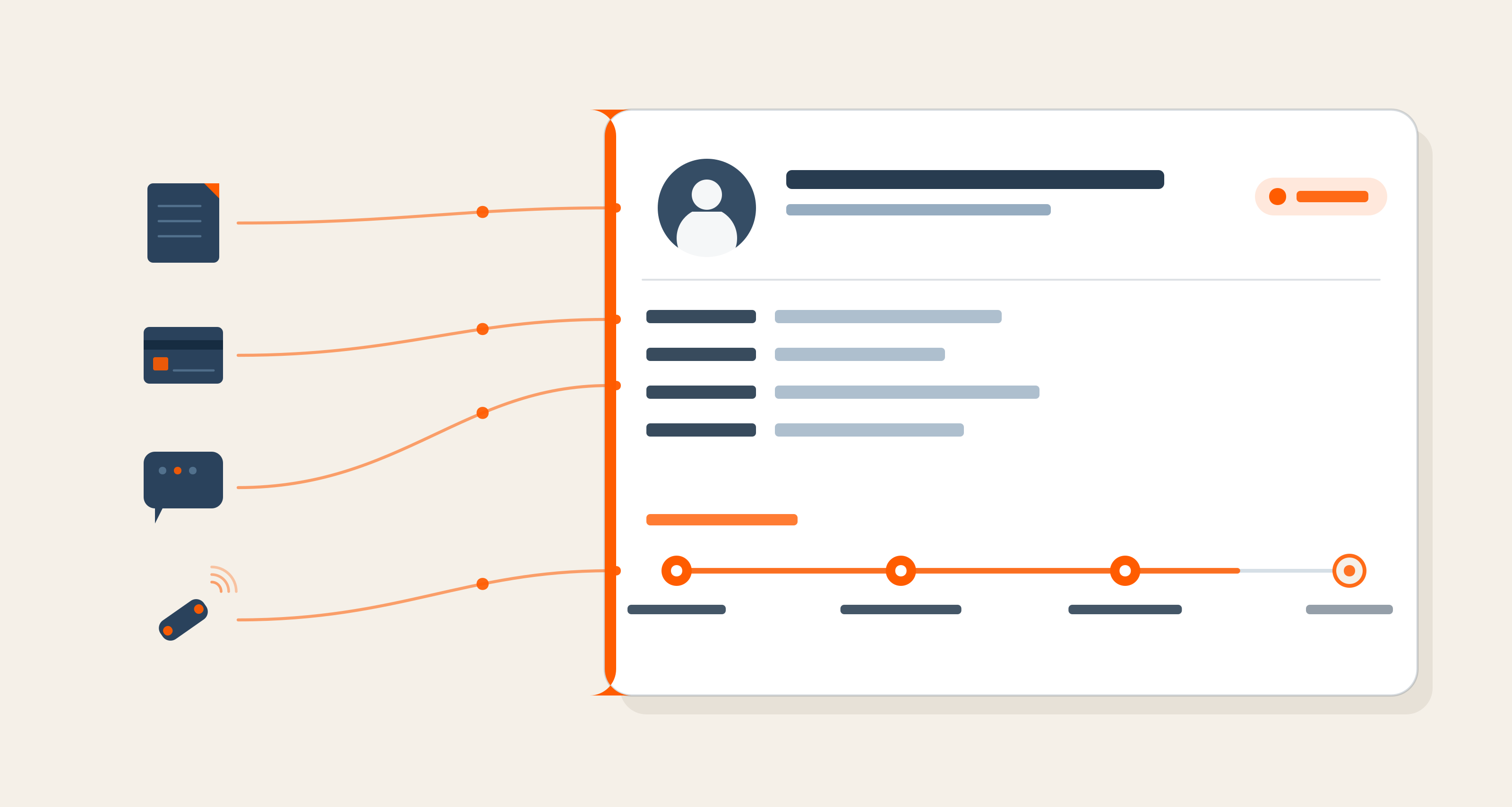

What we believe instead, after watching this and other similar diagnoses play out: AI is most useful when the boring engineering work has already been done. In this case, that meant a team that had carefully instrumented every cache layer over the years. Logs were collectable. Headers were inspectable. Cache behavior was reproducible. None of that is glamorous. None of it gets a write-up. But without it, no model in the world would have found the bug, because there would have been nothing reliable for it to look at.

The layers had to be visible before they could be diagnosed. The diagnosis happened to be fast. The visibility took years.

If your stack does not have that visibility yet, it is worth investing in before you reach for a model. The model accelerates the part of the work that comes after observability is solid. It does not make up for its absence.

What's Next

We are now turning the same attention to a few other long-standing performance debts on this platform. None of them involve caching. All of them have the same shape: layers of well-intentioned changes that have not been audited against each other in some time and are currently masking each other's behavior.

The diagnosis pattern is the same. Hold all the layers in view. Ask why the whole behaves differently than the parts. Validate with the team that knows the system.

If you are looking at a cost line that has crept up on you the same way ours did, we'd be happy to think it through with you. Most of the time, the answer is sitting somewhere in your stack. You just need a way to see it all at once.

Kalaiselvan Swamy, Technical Program Manager

Spiritual at heart, Kalai never forgets that life is a gift. He is also a Hollywood movie buff and an ambivert. When not at work, you will find him spending time with his son.
