Introduction

Part 2 of the series: "Building a Production EdTech Platform on Lovable"

In Part 1 of this series, we built the foundation: a full-stack EdTech platform scaffolded entirely through AI-assisted development. This post picks up where that left off.

Every visitor should have instant access to a knowledgeable advisor, someone who knows every program, every price, every outcome, and can guide them toward the right choice. Available 24/7, without hiring a sales team.

Two AI agents were built:

- A text chatbot, embedded on every page, powered by a self-refreshing knowledge base

- A voice agent, a natural-sounding AI consultant you can actually talk to, with real-time transcription and automatic CRM sync

Both were built entirely in Lovable: frontend components, backend edge functions, database tables, and external API integrations. Using AI to build AI turned out to be the most natural thing in the world.

Building AI Features Through AI Conversations

Before diving into the architecture, it's worth reflecting on what it means to build an AI sales assistant using an AI development platform.

The chatbot's streaming SSE implementation, the voice agent's WebRTC integration, the regex-based lead detection, and the non-blocking CRM sync were all described in natural language and implemented by Lovable. The prompts weren't "write me a ReadableStream parser." They were: "The chat response should appear word by word like ChatGPT, streaming from the edge function."

Lovable understood the intent and produced the correct SSE parsing logic, the progressive state updates, and the auto-scroll behavior. For the voice agent, the prompt was: "After the call ends, use AI to extract any contact details the person mentioned during the conversation, then push them to the CRM." Lovable wrote the Gemini Flash Lite extraction prompt, the JSON parsing, and the CRM API call.

This is where AI-assisted development feels like a superpower: building complex AI systems by describing what they should do, not how they should work.

The Conversational AI System At A Glance

The chatbot isn't a simple prompt-and-respond wrapper. It's a multi-layer system:

- Knowledge-grounded: Every response draws from scraped website content stored in the database

- Lead-aware: Contact information is detected in real time and pushed to the CRM

- Session-persistent: Every conversation is stored with the visitor's identity and program interest

- Streaming: Responses arrive token-by-token via Server-Sent Events for a ChatGPT-like experience

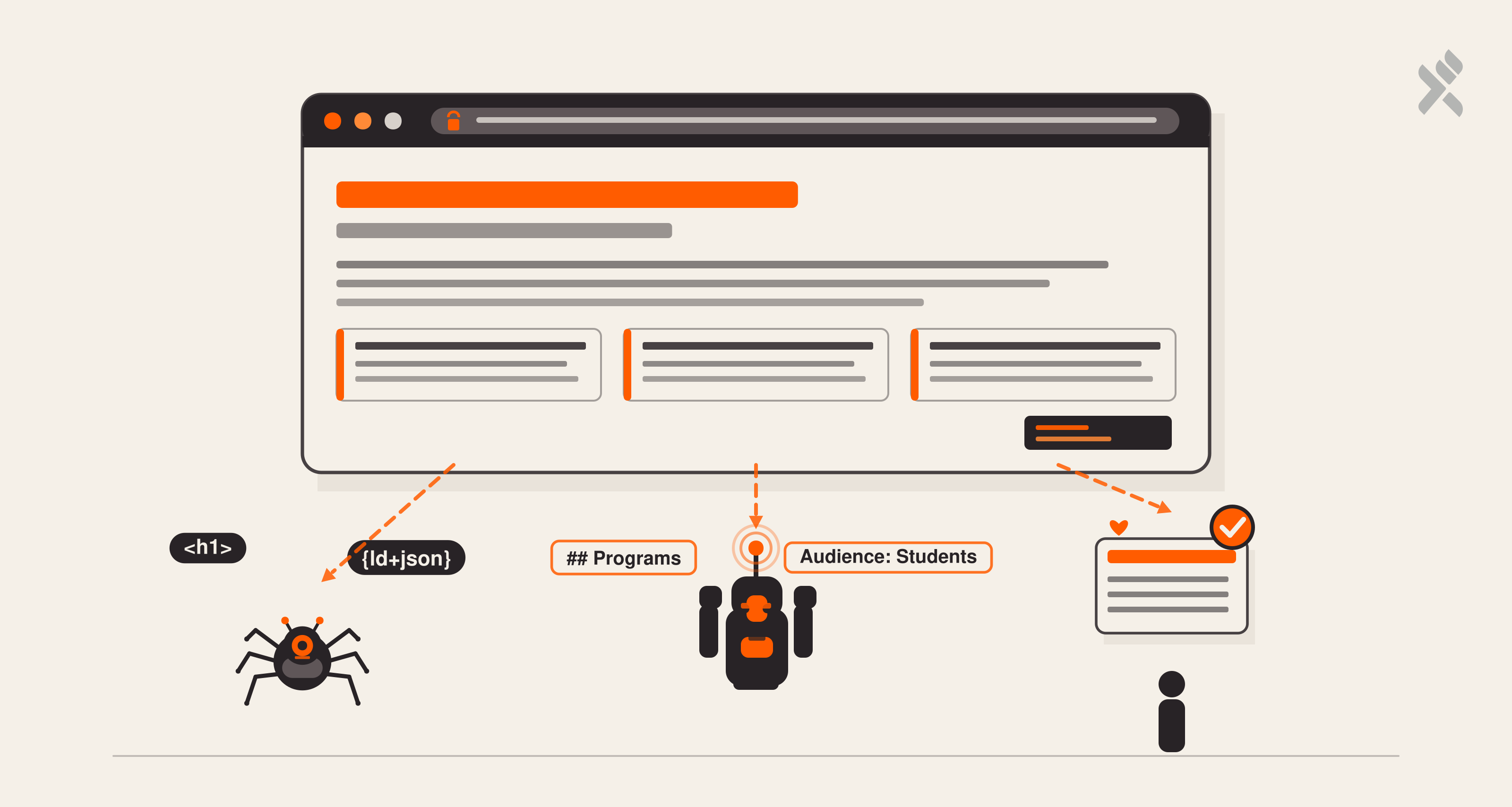

Layer 1: The Self-Refreshing Knowledge Base

Most AI chatbots are built on static prompts. This one stays current automatically.

How It Works

A knowledge refresh pipeline was built: an edge function that scrapes the website, extracts the content as markdown, and stores it in a database table. The chatbot loads this content into its system prompt on every request.

The pipeline:

- 13 pages are defined in a scrape list (homepage, all program pages, audience pages, library, contact)

- Each page is sent to Firecrawl, a web scraping API that returns clean markdown with onlyMainContent: true

- The markdown is truncated to 8,000 characters per page (enough for meaningful context, small enough for the LLM context window)

- Each result is upserted into the website_knowledge table, keyed by URL

Published Website (13 pages)

→ Firecrawl API (parallel scraping)

→ Markdown extraction + truncation

→ website_knowledge table (upsert by URL)
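The pipeline above can be sketched roughly as follows. This is a minimal illustration, not the deployed function: the row shape (`url`, `content`, `scraped_at`), the helper names, and the choice to skip a failed page rather than abort the run are all assumptions. The Firecrawl call and the database upsert are passed in as functions so the sketch stays independent of either client.

```typescript
// Hypothetical row shape for the website_knowledge table (column names are assumptions).
interface KnowledgeRow {
  url: string;
  content: string;
  scraped_at: string;
}

const MAX_CHARS = 8000; // per-page cap described in the pipeline steps

// Cut a page's markdown down to the per-page character budget.
function truncateMarkdown(markdown: string, maxChars: number = MAX_CHARS): string {
  return markdown.length > maxChars ? markdown.slice(0, maxChars) : markdown;
}

// Build the row that gets upserted, keyed by URL.
function toKnowledgeRow(url: string, markdown: string, now: Date = new Date()): KnowledgeRow {
  return { url, content: truncateMarkdown(markdown), scraped_at: now.toISOString() };
}

// Injected dependencies: `scrape` would wrap the Firecrawl request,
// `upsert` would wrap the database write. Both are placeholders here.
type Scraper = (url: string) => Promise<string>;
type Upserter = (row: KnowledgeRow) => Promise<void>;

// Scrape all pages in parallel. A page that fails is skipped (assumption),
// so one bad URL does not block the other pages from refreshing.
async function refreshKnowledge(urls: string[], scrape: Scraper, upsert: Upserter): Promise<number> {
  const results = await Promise.allSettled(
    urls.map(async (url) => upsert(toKnowledgeRow(url, await scrape(url)))),
  );
  return results.filter((r) => r.status === "fulfilled").length;
}
```

`refreshKnowledge` returns the number of pages that refreshed successfully, which is a convenient value to log after each run.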

Why This Matters

When a program page is updated (pricing changes, a new outcome is added, or the curriculum is modified), the chatbot picks it up automatically on the next refresh. No prompt editing, no redeployment, no manual knowledge curation.

The Tradeoff

This is not a vector-based RAG. There's no semantic search across embeddings. Instead, all knowledge rows are loaded into the system prompt, a brute-force approach that works because:

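- The total content fits within the model's context window (~100K tokens for Gemini): 13 pages × 8,000 characters is at most 104,000 characters, roughly 26K tokens at a rule-of-thumb 4 characters per token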

- There are 13 pages, not 13,000

- Every page is relevant to potential questions

For a larger site, embeddings and similarity search would be needed. For an EdTech platform with a dozen programs, full-context injection is simpler, faster, and more reliable.

Building this with Lovable was a single conversation: "Build an edge function that scrapes these 13 URLs using Firecrawl, extracts markdown, truncates to 8000 chars, and upserts into a website_knowledge table." The function was generated, tested, and deployed, all within the platform.

Layer 2: The Chat Edge Function

The core of the system is a single edge function that handles every chat interaction.

The System Prompt

The system prompt is constructed dynamically on every request:

BASE_PROMPT (behavior rules + lead qualification flow)

+

KNOWLEDGE_TEXT (all rows from website_knowledge, formatted as markdown sections)

=

SYSTEM_PROMPT (sent to the LLM)

The base prompt defines:

- Tone: Warm, concise, 2-3 sentences unless detail is needed

- Behavior: Ask qualifying questions (role, experience, goal), recommend programs, encourage contact sharing

- Boundaries: Never invent details not in the knowledge base; redirect to the team for unknowns

- Formatting: Minimal markdown, bold for program names, short bullet lists for comparisons
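The assembly step (base prompt plus knowledge text) can be sketched as below. The `KnowledgePage` shape, the section headings, and the one-line stand-in for the base prompt are assumptions for illustration; the real base prompt carries the full tone, behavior, boundary, and formatting rules listed above.

```typescript
// Hypothetical shape of a row loaded from website_knowledge.
interface KnowledgePage {
  url: string;
  content: string;
}

// Stand-in only: the real base prompt is much longer.
const BASE_PROMPT = "You are a warm, concise program advisor. Never invent details.";

// Format every knowledge row as its own markdown section and append it to the base prompt.
function buildSystemPrompt(basePrompt: string, pages: KnowledgePage[]): string {
  const knowledgeText = pages
    .map((page) => `## ${page.url}\n\n${page.content.trim()}`)
    .join("\n\n");
  return `${basePrompt}\n\n# Website knowledge\n\n${knowledgeText}`;
}
```

Because the prompt is rebuilt on every request, a refreshed knowledge row takes effect on the very next chat message.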

Lead Capture (Non-Blocking)

When a visitor shares their email or phone during the conversation, the edge function detects it and fires a non-blocking CRM sync:
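A minimal sketch of that fire-and-forget pattern, assuming a hypothetical `pushToCrm` function that returns a promise and a hypothetical `Lead` shape:

```typescript
// Hypothetical lead shape; the real CRM payload may carry more fields.
interface Lead {
  name?: string;
  email?: string;
  phone?: string;
}

// Placeholder for whatever function performs the CRM API call. It must return a promise.
type CrmPush = (lead: Lead) => Promise<void>;

// Start the CRM push without awaiting it. A failure is logged and swallowed,
// so it can never delay or break the chat response.
function syncLeadInBackground(
  lead: Lead,
  pushToCrm: CrmPush,
  log: (message: string) => void = console.error,
): void {
  if (!lead.email && !lead.phone) return; // nothing worth syncing yet
  pushToCrm(lead).catch((err) => log(`CRM sync failed: ${String(err)}`));
}
```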

Note the .catch() with no await. The CRM push happens in the background; it doesn't block the AI response. If it fails, the chat continues normally. The visitor never knows.

Program Interest Detection

Every message is scanned for program-related keywords:
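One way to sketch this is a simple keyword map. The keywords below are made up for illustration, and "Data Science Bootcamp" is a hypothetical program name; the real list would mirror the site's programs.

```typescript
// Hypothetical keyword map: program name -> phrases that signal interest in it.
const PROGRAM_KEYWORDS: Record<string, string[]> = {
  "AI Internship": ["internship"],
  "Data Science Bootcamp": ["data science", "bootcamp"],
};

// Return the first program whose keywords appear in the message, or null if none match.
// Plain substring matching is crude but cheap enough to run on every message.
function detectProgramInterest(message: string): string | null {
  const text = message.toLowerCase();
  for (const [program, keywords] of Object.entries(PROGRAM_KEYWORDS)) {
    if (keywords.some((keyword) => text.includes(keyword))) return program;
  }
  return null;
}
```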

The detected program interest is stored alongside the chat session, so the admin dashboard shows not just who chatted, but what they were interested in.

Session Persistence

Every conversation is stored in the chat_sessions table via upsert on session_id:

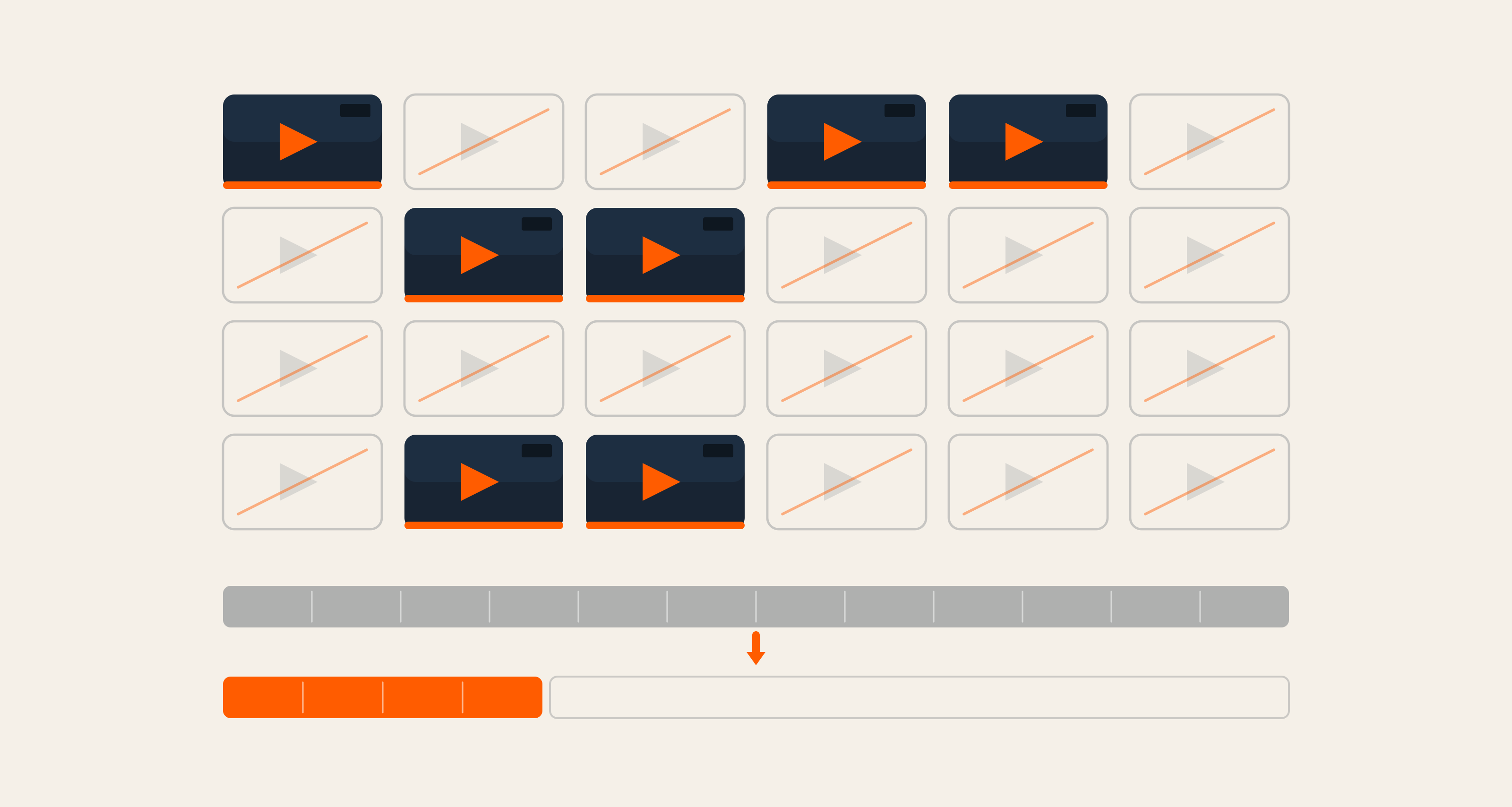
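The upsert semantics can be illustrated with an in-memory stand-in for the table. The column names and the rule of keeping earlier contact details when a later request omits them are assumptions, not the actual schema.

```typescript
interface ChatMessage {
  role: "user" | "assistant";
  content: string;
}

// Hypothetical row shape for chat_sessions.
interface ChatSession {
  session_id: string;
  messages: ChatMessage[];
  visitor_email: string | null;
  program_interest: string | null;
}

// Insert the row if the session is new; otherwise overwrite it,
// keeping previously captured values that the new row does not carry.
function upsertSession(table: Map<string, ChatSession>, incoming: ChatSession): ChatSession {
  const existing = table.get(incoming.session_id);
  const merged: ChatSession = {
    ...incoming,
    visitor_email: incoming.visitor_email ?? existing?.visitor_email ?? null,
    program_interest: incoming.program_interest ?? existing?.program_interest ?? null,
  };
  table.set(merged.session_id, merged);
  return merged;
}
```

Against a real database the same effect comes from an upsert with `session_id` as the conflict key, so one row per conversation is updated in place as messages accumulate.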

Streaming Response

The AI response is streamed back via Server-Sent Events. The edge function passes the stream: true flag to the AI gateway and pipes the response body directly back to the client:
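A rough sketch of both halves, using the standard `Response` API available in edge runtimes. The OpenAI-style request shape, the `model` parameter, and the 502 fallback are assumptions; only the `stream: true` flag and the direct pass-through of the body come from the description above.

```typescript
interface GatewayMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

// Build the request body for the AI gateway (shape is an assumption).
function buildGatewayBody(model: string, systemPrompt: string, history: GatewayMessage[]) {
  return {
    model,
    stream: true, // ask the gateway for token-by-token SSE output
    messages: [{ role: "system" as const, content: systemPrompt }, ...history],
  };
}

// Hand the gateway's body stream straight to the client without buffering it.
function proxySseStream(upstream: Response): Response {
  if (!upstream.ok || upstream.body === null) {
    return new Response(JSON.stringify({ error: "AI gateway error" }), {
      status: 502,
      headers: { "Content-Type": "application/json" },
    });
  }
  return new Response(upstream.body, {
    headers: { "Content-Type": "text/event-stream", "Cache-Control": "no-cache" },
  });
}
```

Since the body is never read inside the function, the first token reaches the browser as soon as the gateway emits it.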

Layer 3: The React Frontend

Two chat interfaces were built:

1. Floating Widget (ChatWidget)

A small, always-present chat bubble in the bottom-right corner with message history, markdown rendering, auto-scroll, loading indicator, and input field.

2. Full-Screen Chat (FullScreenChat)

A ChatGPT-style full-page interface with suggested questions, expandable textarea, AI avatar, and keyboard shortcuts.

Client-Side Lead Detection

Both interfaces run regex-based contact detection on every user message:
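A minimal sketch of such detection, with deliberately simple patterns. The regexes, the "my name is" trigger phrase, and the `ContactInfo` shape are assumptions; production patterns would need tuning for local phone formats and other phrasings.

```typescript
interface ContactInfo {
  name?: string;
  email?: string;
  phone?: string;
}

const EMAIL_RE = /[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/;
const PHONE_RE = /\+?\d[\d\s().-]{7,}\d/; // at least 9 digits/separators, optional leading +
const NAME_RE = /\bmy name is\s+([A-Za-z][A-Za-z'-]*)/i; // first name only

// Extract whatever contact details a single message contains.
function detectContact(message: string): ContactInfo {
  const found: ContactInfo = {};
  const email = message.match(EMAIL_RE);
  if (email) found.email = email[0].toLowerCase();
  const phone = message.match(PHONE_RE);
  if (phone) found.phone = phone[0].replace(/[^\d+]/g, ""); // keep digits and +
  const name = message.match(NAME_RE);
  if (name && name[1]) found.name = name[1];
  return found;
}

// Merge new findings into what is already known; later values win.
function mergeContact(known: ContactInfo, found: ContactInfo): ContactInfo {
  return { ...known, ...found };
}
```

Calling `mergeContact` after every message is what lets details shared several messages apart end up in a single payload.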

Contact info is accumulated across messages: if a user shares their name in message 3 and their email in message 7, both are captured and sent to the backend together.

SSE Stream Parsing

The streaming response is parsed manually in the browser:
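A sketch of a line-buffered parser for this job. It assumes OpenAI-style chunks (`choices[0].delta.content`) and a `[DONE]` sentinel, which may differ from the gateway's actual format; the key idea is that only complete, newline-terminated lines are ever parsed.

```typescript
// Stateful parser: feed() takes raw text chunks and returns the tokens
// found in complete lines. Any trailing partial line stays in the buffer.
function createSseParser() {
  let buffer = "";
  return {
    feed(chunk: string): string[] {
      buffer += chunk;
      const tokens: string[] = [];
      let newline: number;
      while ((newline = buffer.indexOf("\n")) !== -1) {
        const line = buffer.slice(0, newline).replace(/\r$/, "");
        buffer = buffer.slice(newline + 1);
        if (!line.startsWith("data:")) continue; // blank lines, comments, other fields
        const payload = line.slice(5).trim();
        if (payload === "[DONE]") continue; // end-of-stream sentinel (assumption)
        try {
          const token = JSON.parse(payload)?.choices?.[0]?.delta?.content;
          if (typeof token === "string") tokens.push(token);
        } catch {
          // A complete line that is not valid JSON is skipped rather than crashing the UI.
        }
      }
      return tokens;
    },
  };
}
```

In the browser, the chunks would come from `response.body.getReader()` and be decoded with `TextDecoder` using `{ stream: true }`, with each returned token appended to the message being rendered.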

An AI development note: The SSE stream parser was one of the more complex pieces to get right. The initial implementation had a bug where partial JSON chunks would break the parser. The fix was described to Lovable as: "The buffer sometimes contains incomplete JSON lines, we need to only parse complete lines ending with newline." Lovable rewrote the parser with proper line-boundary detection. This kind of iterative debugging (describe the symptom, get a fix) is the core loop of AI-assisted development.

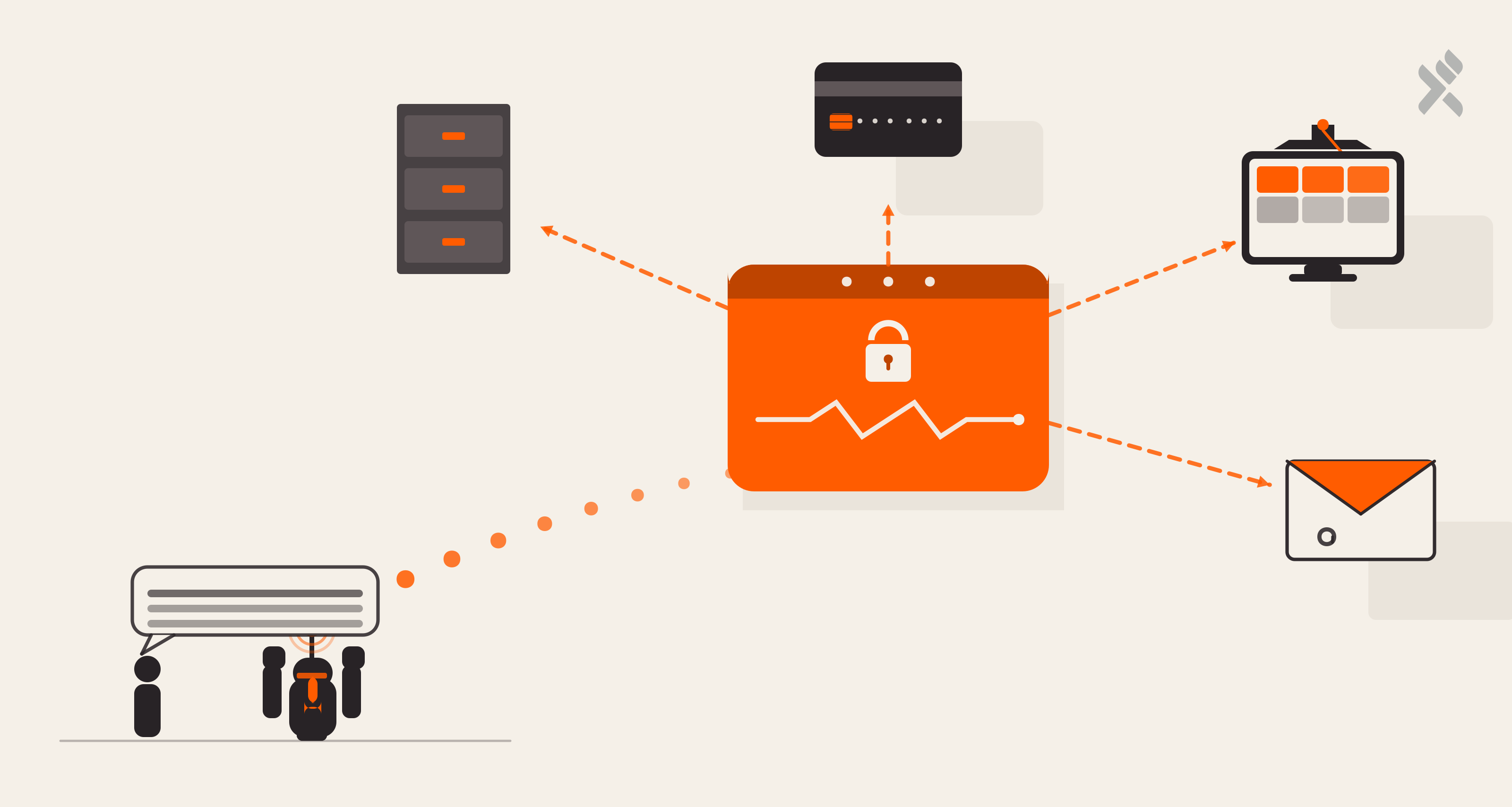

Layer 4: The Voice Agent

The voice agent uses ElevenLabs Conversational AI, a WebRTC-based voice agent that sounds natural and responds in real time.

The Flow

- User clicks the call button → A pre-call form collects name and phone number

- Microphone permission → Browser asks for audio access

- WebRTC session starts → ElevenLabs agent connects via the @elevenlabs/react SDK

- Real-time conversation → Bidirectional audio stream with live transcription

- Contextual awareness → If the user called from a specific program page, the agent focuses on that program

- Call ends → Transcript is sent to the backend
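The contextual-awareness step in the flow above can be sketched as a small helper that turns the pre-call form and the current page into a context object. The `/programs/<slug>` route layout, the field names, and the `"general"` fallback are all hypothetical; how the object is handed to the voice SDK is not shown here.

```typescript
interface PreCallForm {
  name: string;
  phone: string;
}

// Hypothetical context object passed along when the call session starts.
interface CallContext {
  visitor_name: string;
  visitor_phone: string;
  program: string;
}

// Assumed route layout: program pages live under /programs/<slug>.
function programFromPath(pathname: string): string | null {
  const match = pathname.match(/^\/programs\/([a-z0-9-]+)\/?$/);
  return match?.[1] ?? null;
}

// Combine the form values with the page the call was started from.
function buildCallContext(form: PreCallForm, pathname: string): CallContext {
  return {
    visitor_name: form.name.trim(),
    visitor_phone: form.phone.trim(),
    program: programFromPath(pathname) ?? "general",
  };
}
```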

Post-Call Processing

When the call disconnects, the save-voice-call-log edge function runs three steps:

- AI-Powered Contact Extraction: uses Gemini Flash Lite to extract name/email/phone from the transcript

- Database Storage: saves the full transcript, duration, visitor info, and program context

- CRM Lead Creation: creates or updates a CRM lead with the transcript as a note

For voice-only contacts (name but no email/phone), an internal placeholder email (voice-{session_id}@voice-call.internal) ensures the CRM record exists.
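Two pieces of this step lend themselves to a short sketch: parsing the model's extraction reply defensively, and building the placeholder email. The expected JSON keys (`name`, `email`, `phone`) and the helper names are assumptions; the placeholder format is the one given above.

```typescript
interface ExtractedContact {
  name: string | null;
  email: string | null;
  phone: string | null;
}

// The model may wrap its JSON in extra text, so pull out the outermost {...}
// and fall back to an empty result when nothing parses.
function parseExtractedContact(modelReply: string): ExtractedContact {
  const empty: ExtractedContact = { name: null, email: null, phone: null };
  const jsonText = modelReply.match(/\{[\s\S]*\}/)?.[0];
  if (!jsonText) return empty;
  try {
    const raw = JSON.parse(jsonText) as Record<string, unknown>;
    const pick = (key: string): string | null => {
      const value = raw[key];
      return typeof value === "string" && value.trim() !== "" ? value.trim() : null;
    };
    return { name: pick("name"), email: pick("email"), phone: pick("phone") };
  } catch {
    return empty;
  }
}

// Voice-only contact: a name was heard, but no email or phone.
function needsPlaceholder(contact: ExtractedContact): boolean {
  return contact.name !== null && contact.email === null && contact.phone === null;
}

// Internal placeholder address so the CRM record can still be created.
function placeholderEmail(sessionId: string): string {
  return `voice-${sessionId}@voice-call.internal`;
}
```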

Reflections: What Building AI With AI Taught Us

Six things stood out: not as theory, but as decisions made under pressure that held up.

- Full-context injection beats RAG for small knowledge bases. Thirteen pages fit comfortably inside Gemini's 100K-token context window. There was no need for an embedding pipeline, a vector store, or a similarity threshold to tune. For a site where every page is potentially relevant to every question, loading it all was simpler, faster, and more reliable than retrieval. RAG makes sense at 13,000 pages. At 13, it's overhead.

- Non-blocking CRM sync is non-negotiable. The .catch() without an await is doing more work than it looks. If the CRM call blocks the response stream, the user notices: a stutter, a delay, a moment where the AI feels slow. Firing it in the background and letting the conversation continue means a failed CRM push becomes a backend log entry, not a broken experience.

- Client-side regex and server-side LLM extraction are better together, not competing. Regex catches structured contact info instantly and cheaply on every message. The LLM extracts it from unstructured speech ("you can reach me on my Gmail") after the call ends. Neither alone is sufficient. Together, they cover the full surface area of how people share contact information.

- Telling the agent where it is transforms what it can do. Passing the current program page as context isn't a small UX improvement; it changes the nature of the interaction. An agent that knows you're reading about the AI Internship doesn't need to ask what you're interested in. It can skip the preamble and get to the answer. Contextual grounding is the difference between an assistant that feels intelligent and one that feels scripted.

- Stream everything. Buffered responses feel like the system is thinking. Streamed responses feel like they're communicating. The perceived intelligence of an AI system is partly a function of its timing, and token-by-token delivery, even for short responses, consistently reads as more capable than a delayed full response.

- Lovable is especially fluent in the patterns AI systems actually use. SSE parsing, LLM prompt construction, async CRM sync, WebRTC integration: these aren't edge cases for Lovable, they're its native vocabulary. Describing the symptom of a broken SSE parser and getting back correct line-boundary detection logic in the next message is what AI-assisted development looks like when the tool is trained on the same stack you're building. The AI knew what you were trying to build before you finished explaining it.

The through-line across all six: the architecture decisions that felt pragmatic in the moment (skip the embedding pipeline, fire-and-forget the CRM, pass the page context, stream by default) turned out to be the right ones. Simplicity, when it's chosen deliberately, compounds.

If you're building something similar or wondering whether AI-assisted development could work for your platform, we'd love to hear about it.

Brahmpreet Singh, Senior Marketing Manager

Brahmpreet Singh is a marketing professional with over a decade of experience in SaaS and B2B content strategy. He enjoys blending research-driven insights with creative storytelling to support meaningful growth in organic traffic and lead generation. Brahmpreet is dedicated to building thoughtful, data-informed marketing strategies that resonate with audiences and drive long-term success.
