Introduction

Enterprise data systems are expected to be both stable and adaptive.

- Stable enough to process millions of events per second without failure.

- Adaptive enough to respond instantly when fraud patterns shift, abuse vectors mutate, or compliance rules change overnight.

In practice, most streaming architectures excel at the first and struggle deeply with the second.

In high-stakes environments such as detecting transaction fraud, spotting coordinated bot campaigns, or monitoring compliance, speed is the only currency that matters. Acting seconds late is often indistinguishable from acting too late.

Yet most streaming pipelines remain rigid. Changing a simple threshold still requires a full deployment cycle: commit, build, test, and redeploy. By the time new logic reaches production, the underlying behavior has already evolved.

Our data engineering team built a Streaming Rules Engine using PyFlink and Kafka to address this gap. The goal was to transform static, code-heavy stream processing into a dynamic, configuration-driven intelligence engine that executes complex detection patterns in real time with zero downtime.

The Challenge: The Deployment Tax On Intelligence

Most real-time detection logic today is hardcoded directly into streaming applications. This creates an implicit deployment tax on every decision the business wants to make.

- Operational Friction: A simple rule change, such as lowering a fraud threshold, requires an engineering release cycle.

- State Fragility: Redeploying stateful streaming jobs for minor configuration changes risks downtime and state inconsistencies.

- Limited Complexity: Implementing behavioral patterns like coordinated activity or contextual deviation requires complex, brittle logic that is difficult to evolve.

The result is a tradeoff organizations repeatedly face: stability versus speed. We needed a solution that delivered the raw performance of Flink while providing the agility of a dynamic configuration system. We needed to treat rules as data, not code.

The Solution: A Split-Stream Architecture

The core architectural decision was to separate events from logic.

We engineered a system where:

- The Data Stream carries raw events such as transactions, clicks, or posts

- The Control Stream carries detection logic as data

Both streams flow through Kafka and are consumed by Apache Flink, but they evolve independently. Events are processed continuously, while rules can be updated and applied instantly.

This separation removes the tight coupling between detection logic and deployment cycles, allowing the system to adapt without restarts.

1. Unified Pattern Primitives

Instead of building bespoke logic for each use case, we abstracted detection into three configurable rule types:

Threshold

Threshold rules perform instant, stateless evaluation on individual events.

- Example: value > 100

- Execution model: In-memory evaluation

- Implementation: Flink MapFunction without accessing the state backend

Thresholds are fast and predictable, making them suitable for first-pass filtering.
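To make the idea concrete, here is a minimal sketch of a threshold rule expressed as data and evaluated per event. It is plain Python rather than our production code: the ThresholdRule class, its field names, and the set of supported operators are assumptions chosen for illustration. In a Flink job, the matches call is the kind of logic that would sit inside a map step.

```python
import operator
from dataclasses import dataclass

# Comparison operators a rule may name. This set is an illustrative assumption.
OPERATORS = {
    ">": operator.gt,
    ">=": operator.ge,
    "<": operator.lt,
    "<=": operator.le,
    "==": operator.eq,
}


@dataclass(frozen=True)
class ThresholdRule:
    """A hypothetical threshold rule: compare one event field to a limit."""
    rule_id: str
    field: str
    op: str
    limit: float

    def matches(self, event: dict) -> bool:
        # Pure in-memory check: no state is read or written.
        value = event.get(self.field)
        if value is None:
            return False  # events missing the field never match
        return OPERATORS[self.op](value, self.limit)


rule = ThresholdRule(rule_id="high_value", field="value", op=">", limit=100)
events = [{"id": "e1", "value": 250}, {"id": "e2", "value": 40}, {"id": "e3"}]
flagged = [e["id"] for e in events if rule.matches(e)]
print(flagged)
```

Because the rule is an ordinary value rather than compiled logic, swapping the limit or the operator does not require touching the evaluation code.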

Velocity

Velocity rules detect spikes and trends over time.

- Example: 5 events in 10 seconds

- Execution model: Stateful sliding windows

- Implementation: KeyedProcessFunction using ListState for event history and ValueState for deduplication

This design ensures correct aggregation even with out-of-order events while maintaining low latency.
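The sketch below illustrates the velocity logic in plain Python, under stated assumptions. The VelocityDetector class is hypothetical: a per-key sorted list stands in for the event history state and a per-key set stands in for the deduplication state. It omits state expiry, which a real job would handle with timers or state TTL.

```python
import bisect
from collections import defaultdict


class VelocityDetector:
    """Hypothetical per-key sliding-window counter (e.g. 5 events in 10 seconds)."""

    def __init__(self, max_events: int, window_seconds: float):
        self.max_events = max_events
        self.window_seconds = window_seconds
        self._history = defaultdict(list)  # key -> sorted timestamps (event history)
        self._seen_ids = defaultdict(set)  # key -> event ids already processed

    def process(self, key: str, event_id: str, timestamp: float) -> bool:
        # Deduplicate so that a redelivered event is not counted twice.
        if event_id in self._seen_ids[key]:
            return False
        self._seen_ids[key].add(event_id)

        # Sorted insertion keeps the history correct for out-of-order arrivals.
        history = self._history[key]
        bisect.insort(history, timestamp)

        # Count events in the window (timestamp - window_seconds, timestamp].
        lo = bisect.bisect_right(history, timestamp - self.window_seconds)
        hi = bisect.bisect_right(history, timestamp)
        return hi - lo >= self.max_events


detector = VelocityDetector(max_events=5, window_seconds=10)
# Timestamps arrive out of order: 1, 4, 2, 3, 5.
arrivals = [("e1", 1.0), ("e2", 4.0), ("e3", 2.0), ("e4", 3.0), ("e5", 5.0)]
results = [detector.process("user_a", eid, ts) for eid, ts in arrivals]
print(results)
```

Only the fifth event trips the rule, and replaying an already-seen event id would not change the count.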

Correlation

Correlation rules evaluate events against contextual data.

- Example: transaction amount > 2x user’s daily average

- Execution model: Multi-stream joins

- Implementation: A KeyedProcessFunction with separate state buffers for primary events and context

Late-arriving context is handled by temporarily buffering primary events until evaluation is possible.
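The buffering behavior can be sketched as follows. This is a simplified, single-process illustration, not the production operator: the CorrelationJoin class, its method names, and the event fields are assumptions, and two dictionaries stand in for the separate state buffers for context and primary events.

```python
from collections import defaultdict


class CorrelationJoin:
    """Hypothetical join of primary events against per-user context."""

    def __init__(self, multiplier: float = 2.0):
        self.multiplier = multiplier
        self._context = {}                 # user -> daily average (context buffer)
        self._pending = defaultdict(list)  # user -> events waiting for context

    def _evaluate(self, event: dict, daily_average: float) -> dict:
        flagged = event["amount"] > self.multiplier * daily_average
        return {"event_id": event["id"], "user": event["user"], "flagged": flagged}

    def on_event(self, event: dict) -> list:
        average = self._context.get(event["user"])
        if average is None:
            # Context has not arrived yet: hold the event instead of guessing.
            self._pending[event["user"]].append(event)
            return []
        return [self._evaluate(event, average)]

    def on_context(self, user: str, daily_average: float) -> list:
        # Store the context, then evaluate anything that was waiting for it.
        self._context[user] = daily_average
        buffered = self._pending.pop(user, [])
        return [self._evaluate(e, daily_average) for e in buffered]


join = CorrelationJoin(multiplier=2.0)
early = join.on_event({"id": "t1", "user": "u1", "amount": 500})
released = join.on_context("u1", 200)
later = join.on_event({"id": "t2", "user": "u1", "amount": 300})
print(early, released, later)
```

A production version would also need a timeout for buffered events whose context never arrives; that policy is left out of this sketch.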

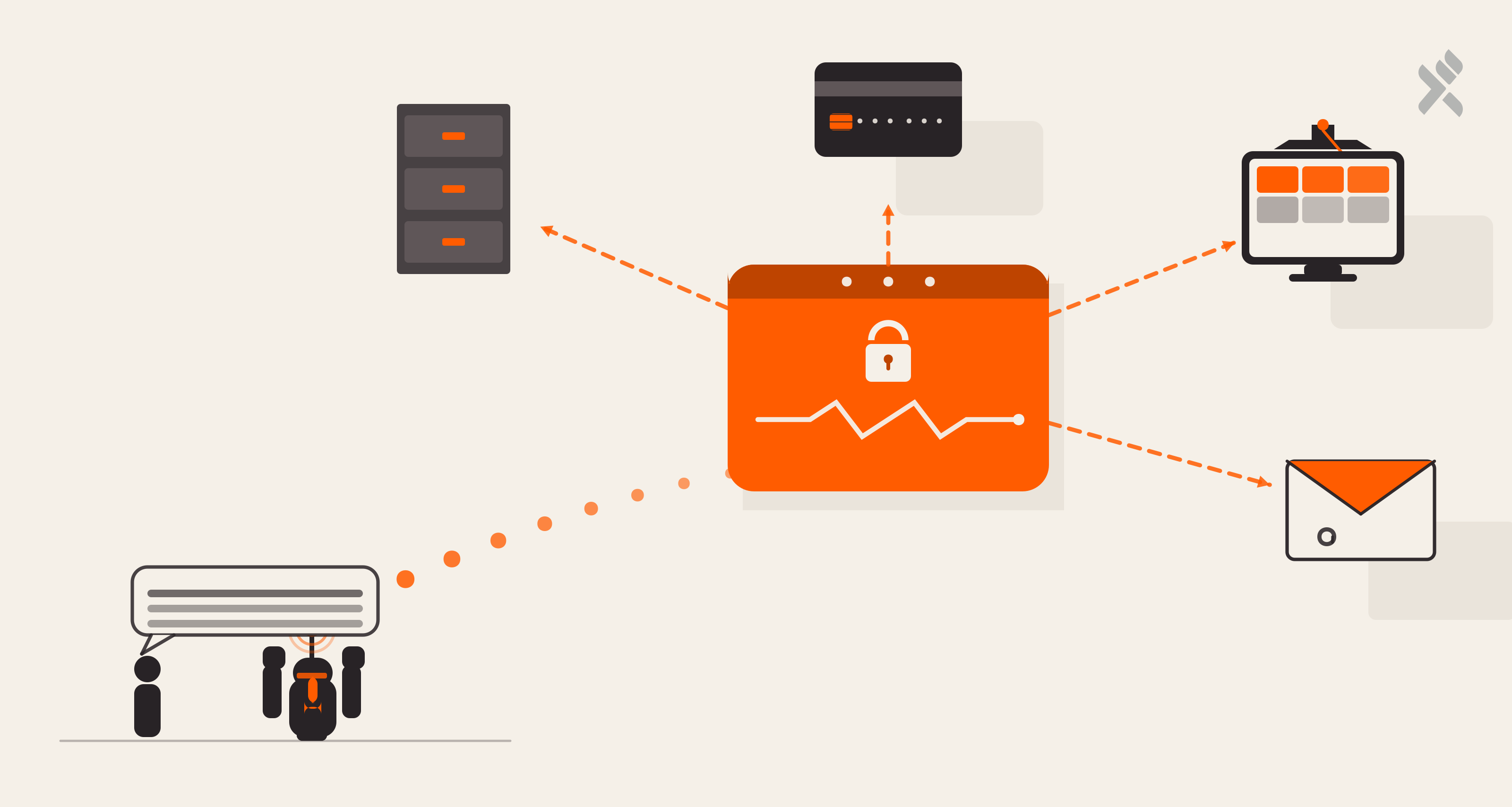

2. Broadcast State: Logic At The Speed Of Data

Instead of burying rules in config files, we publish them to a Kafka topic (rules.active).

- Hot Reload: When a rule is updated, it is broadcast immediately to all Flink operators via the Broadcast State pattern.

- Dynamic Adaptation: The engine ingests the new logic and applies it to the very next event; no restarts, no state loss.
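The hot-reload behavior can be illustrated with a small simulation. This is plain Python, not the PyFlink job itself: the RuleEngine class and the rule fields are assumptions, and a dictionary stands in for broadcast state. The two method names mirror the two callbacks of a Flink broadcast process function, one for control messages and one for data events.

```python
class RuleEngine:
    """Hypothetical stand-in for an operator that holds rules in broadcast state."""

    def __init__(self):
        self.rules = {}  # rule_id -> rule dict (plays the role of broadcast state)

    def process_broadcast_element(self, rule: dict) -> None:
        # Control stream: insert, replace, or remove a rule.
        if rule.get("enabled", True):
            self.rules[rule["rule_id"]] = rule
        else:
            self.rules.pop(rule["rule_id"], None)

    def process_element(self, event: dict) -> list:
        # Data stream: evaluate the event against whatever rules are active now.
        return [
            {"event_id": event["id"], "rule_id": r["rule_id"], "rule_version": r["version"]}
            for r in self.rules.values()
            if event["value"] > r["limit"]
        ]


engine = RuleEngine()
# One interleaved sequence of control and data messages (synthetic).
stream = [
    ("rule", {"rule_id": "high_value", "version": 1, "limit": 100}),
    ("event", {"id": "e1", "value": 80}),
    ("rule", {"rule_id": "high_value", "version": 2, "limit": 50}),
    ("event", {"id": "e2", "value": 80}),
]
alerts = []
for kind, payload in stream:
    if kind == "rule":
        engine.process_broadcast_element(payload)
    else:
        alerts.extend(engine.process_element(payload))
print(alerts)
```

The same value of 80 passes under version 1 and is flagged under version 2, with no restart in between, and the alert records which rule version caught it.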

Detecting Coordinated AstroTurf Campaigns

To validate the engine, we applied it to a coordinated behavior scenario commonly referred to as AstroTurfing.

The scenario involved a botnet attempting to artificially amplify a hashtag by flooding it with posts from newly created or low-reputation accounts. While the use case comes from social platforms, the underlying pattern applies equally to fraud rings and coordinated abuse in other domains.

The No-Code Configuration

We deployed a two-stage pipeline using a specific configuration. The first stage acts as a high-velocity filter, feeding the second stage for reputation correlation.
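For readers who want a feel for what such a configuration might look like, here is a purely hypothetical example. The rule ids, field names, and numeric values are invented for illustration and are not the configuration used in our test. The point is that each stage is a small JSON document that can be published to the rules topic.

```python
import json

# Hypothetical rule definitions; every field name and value here is illustrative.
RULES = [
    {
        "rule_id": "hashtag_velocity",
        "version": 1,
        "type": "velocity",
        "stage": 1,
        "key_by": "user_id",
        "max_events": 5,
        "window_seconds": 10,
    },
    {
        "rule_id": "low_reputation",
        "version": 1,
        "type": "correlation",
        "stage": 2,
        "input": "hashtag_velocity",
        "context_field": "reputation_score",
        "op": "<",
        "limit": 0.3,
    },
]


def pipeline_order(rules: list) -> list:
    """Return rule ids in the order their stages would run."""
    return [r["rule_id"] for r in sorted(rules, key=lambda r: r["stage"])]


# Each rule is serialized as one message for the control topic.
messages = [json.dumps(rule) for rule in RULES]
print(pipeline_order(RULES))
```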

Data Flow

During the test run, the engine ingested hundreds of mixed events from the event stream and asynchronous updates from the context stream.

Input 1: The Event Stream (events.raw)

Each event carries a traceable ID.

Input 2: The Context Stream (context.raw)

User profiles and reputation scores are also ingested asynchronously.

Execution & Results

When high velocity coincided with low reputation, the engine immediately emitted detection signals. Trusted users and low-volume noise were ignored.

Each alert preserved full lineage, including the triggering rule, rule version, and resolved context, enabling traceability and auditability.

In the test run, posts from trusted users (e.g., user_002, user_004) and low-volume posts from new users passed through without alerts. Only when both conditions held together, high velocity and low reputation, did the engine flag the specific events from suspected bot accounts such as user_003.
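The combined condition can be reproduced in a few lines of plain Python. Everything in this sketch is synthetic: the thresholds, the reputation scores, the timestamps, and the extra account user_005 are assumptions made for illustration, and posts are assumed to arrive in timestamp order per user.

```python
from collections import defaultdict, deque

WINDOW_SECONDS = 10   # assumed window length
MAX_POSTS = 3         # assumed velocity threshold
MIN_REPUTATION = 0.5  # assumed reputation cutoff

# Synthetic context: reputation score per account.
reputation = {"user_002": 0.9, "user_003": 0.1, "user_004": 0.8, "user_005": 0.2}
recent = defaultdict(deque)  # user -> timestamps of posts inside the window


def process_post(user: str, ts: float) -> bool:
    """Flag a post only when high velocity and low reputation coincide."""
    window = recent[user]
    window.append(ts)
    # Stage 1: keep only posts inside the sliding window, then count them.
    while window and window[0] <= ts - WINDOW_SECONDS:
        window.popleft()
    high_velocity = len(window) >= MAX_POSTS
    # Stage 2: correlate with reputation; unknown accounts are not flagged here.
    score = reputation.get(user)
    low_reputation = score is not None and score < MIN_REPUTATION
    return high_velocity and low_reputation


posts = [
    ("user_002", 1), ("user_003", 11), ("user_002", 2), ("user_003", 12),
    ("user_002", 3), ("user_004", 5), ("user_005", 6), ("user_003", 13),
]
flagged = [(user, ts) for user, ts in posts if process_post(user, ts)]
print(flagged)
```

In this synthetic run, user_002 posts quickly but is trusted, user_005 has low reputation but posts once, and only the third rapid post from user_003 is flagged.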

Output: Actionable Alert (events.processed)

Each detection preserves the lineage of the input event and the rule logic that caught it.

What’s Next

This work demonstrated that real-time pattern detection need not trade speed for stability. By treating detection logic as data and separating it from event processing, the system executed complex patterns with millisecond latency while remaining safe to modify and operating continuously.

In practice, this meant:

- Agility: Logic updates that previously took days were applied in milliseconds

- Reliability: Exactly-once processing using Flink’s distributed state backend

- Efficiency: A single engine replaced multiple bespoke pipelines

- Scalability: Horizontal scaling to millions of events per second with fine-grained state

This blog focused on the architectural approach and system behavior. Real-time intelligence should aim at shortening the distance between insight and action without compromising reliability. When detection logic can move at the speed of behavior, the system stops reacting to patterns and begins anticipating them.

In upcoming posts, we will go deeper into the engineering details behind this engine, including how threshold rules are hot-reloaded, how velocity detection is implemented with state and windows, and how correlation logic handles late data and multi-stream joins in production environments.

Prateek Jain, Director of Digital Solutions & AI Strategy

Offline, if he's not spending time with his daughter he's either on the field playing cricket or in a chair with a good book.

Qais Qadri, Senior Software Engineer

At his core, Qais values honesty and patience. He works with clarity and intention. He leads when needed. He supports without hesitation. Life, for him, is simple—good friends, long drives, meaningful books, and constant self-improvement.
