Introduction

Every enterprise marketing data ecosystem eventually reaches a breaking point.

It rarely announces itself dramatically. There is no outage or catastrophic failure. Instead, it shows up quietly: increased monitoring noise, fragile integrations, slow onboarding of new data initiatives, and engineering teams spending more time maintaining pipelines than building value.

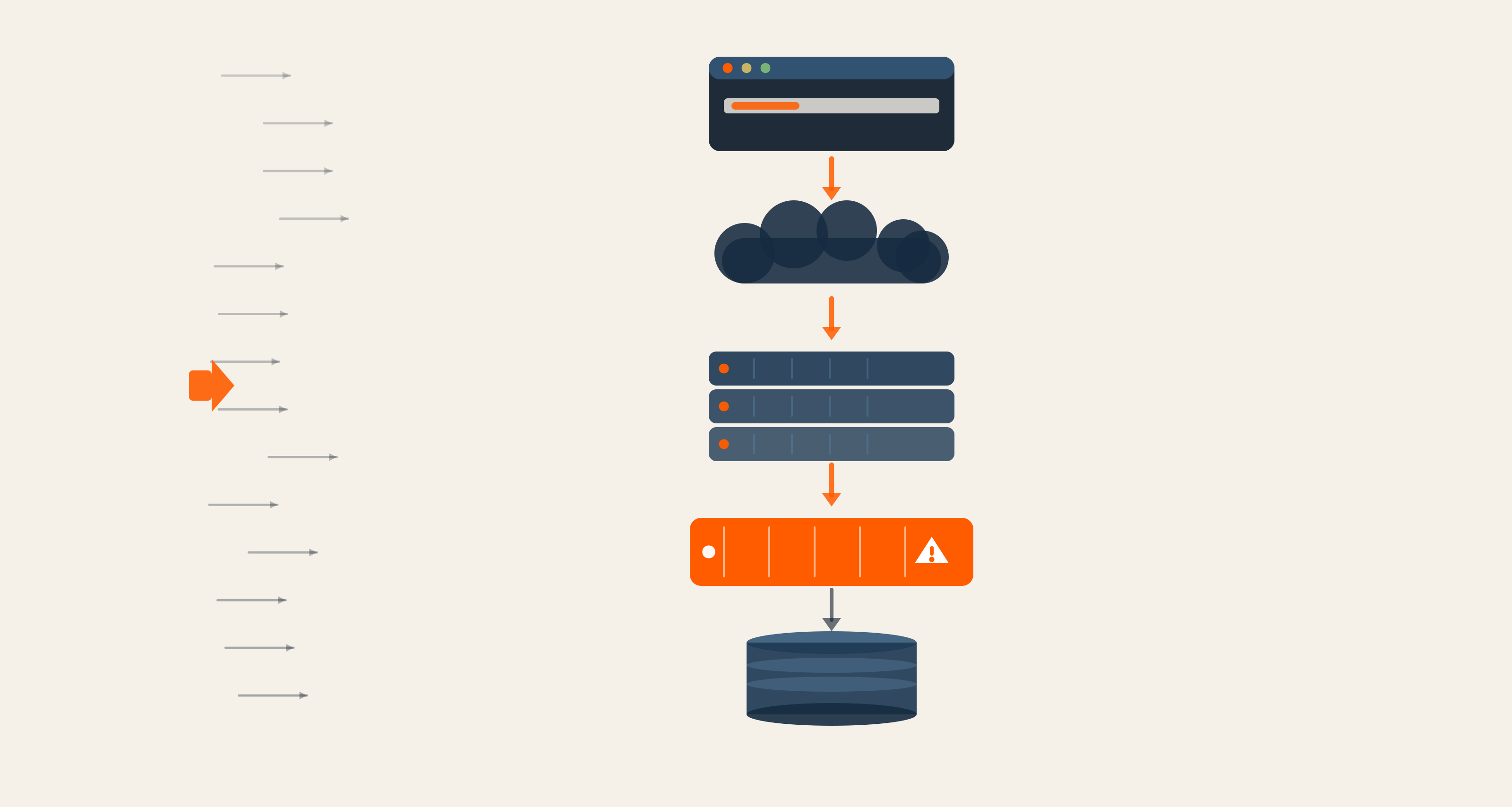

What began as a series of pragmatic engineering decisions (custom Python jobs, Kafka topics, containerized streaming services, and layered ETL tools) slowly hardens into an architecture that resists change.

At that point, modernization is no longer about adding tools. It is about removing them.

This is the story of how a global enterprise marketing ecosystem transitioned away from a custom streaming and batch infrastructure, retiring 25+ Python data jobs, decommissioning more than 150 Kafka topics processing millions of messages, dismantling OpenShift-based streaming services, and consolidating capabilities into core marketing platforms. Not as a shutdown exercise, but as a strategic engineering move to reclaim scalability, reduce operational drag, and prepare for future data and AI initiatives.

The Architectural Pattern: When Custom Integration Layers Outlive Their Strategic Value

Across large enterprises, marketing data ecosystems evolve organically.

A legacy enterprise warehouse, such as Starburst or SSA, feeds downstream marketing platforms. Custom Python scripts manage transformation logic. Kafka topics propagate events across systems. Containerized services run on OpenShift. Informatica workflows coexist with newer cloud-native ETL tools like Matillion and Hevo. Orchestration spans Apache Airflow, Luigi, and GitLab CI/CD. Warehousing is distributed across Snowflake, Google BigQuery, IBM DB2, and SQL Server.

Each component is defensible in isolation. Together, they form a layered integration mesh.

Over time, this mesh becomes harder to reason about. Monitoring is distributed across Slack alerts, Grafana dashboards, OpenShift pod logs, Kafka UI, email notifications, and internal visualization tools. Root cause analysis requires hopping between systems. Ownership blurs. Institutional memory becomes the real dependency.

The system still works, but only because experienced engineers continuously stabilize it.

This is the inflection point.

The Real Risk Of Staying Put

Custom streaming layers built on Kafka and OpenShift often start as strategic accelerators. They enable near real-time synchronization between marketing automation systems, CRM platforms, and data warehouses. They support use cases such as consent management, persona derivation, and contact normalization.

But as core marketing platforms such as Marketo, Adobe, and Sales Cloud mature, the need for bespoke integration layers diminishes.

What remains is operational overhead.

In one enterprise environment, the legacy footprint included:

- More than 25 Python-based ETL jobs bridging enterprise data platforms and marketing technologies

- A streaming service deployed on OpenShift

- Over 150 Kafka topics processing millions of messages

- Parallel workflows across Informatica Cloud, Matillion, Hevo, DBT, Pentaho, FME Workbench, Gathr, and Alteryx

- Warehousing across Snowflake, Google BigQuery, IBM DB2, and SQL Server

- Orchestration managed through Apache Airflow, Luigi, and GitLab CI/CD

Technically sophisticated. Operationally expensive.

Engineering capacity was increasingly consumed by maintenance: recycling pods in OpenShift, resetting Kafka topic timestamps using custom messages, debugging consumer lag, silencing redundant alerts, and diagnosing failures across distributed monitoring systems.

The architecture was not failing. It was absorbing innovation energy.

Decommissioning As An Engineering Discipline

Decommissioning is often treated as an afterthought. In reality, it is one of the highest expressions of engineering maturity.

Retiring 25+ Python jobs is not trivial when those jobs feed CRM systems, marketing automation platforms, compliance workflows, and reporting dashboards.

Shutting down 150+ Kafka topics is not a simple deletion exercise when those topics may have hidden downstream consumers or embedded assumptions in campaign automation.

The first principle applied in this environment was straightforward: observability before action.

Extensive monitoring was reinforced across batch services, streaming services, and Informatica workflows. Slack integrations were enhanced to push real-time alerts. Grafana dashboards were tuned for clarity. OpenShift pod logs were systematically reviewed. Kafka UI was used to inspect topic-level activity and consumer relationships. Internal tools such as the Nubium Schematic Plotter were debugged and improved to visualize data flow and service architecture.

Only once runtime behavior was clearly understood did phased decommissioning begin.

The Phased Exit Strategy

The modernization strategy did not rely on a single cutover date. It unfolded in deliberate stages.

First came dependency validation. Each Python job and Kafka topic was mapped against active consumers and business capabilities. In collaboration with platform teams, usage was verified. Redundant or obsolete workflows were identified.
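
The dependency validation step can be pictured as a small classification pass over an inventory of jobs and topics. The following is a minimal sketch under stated assumptions, not the team's actual tooling: the `Asset` record, the `classify` function, the three verdict labels, and every job, topic, and capability name are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Asset:
    """A legacy job or topic under review (all names here are hypothetical)."""
    name: str
    kind: str  # "python_job" or "kafka_topic"
    consumers: set[str] = field(default_factory=set)     # active downstream consumers
    capabilities: set[str] = field(default_factory=set)  # business capabilities served


def classify(asset: Asset, migrated: set[str]) -> str:
    """Return a decommissioning verdict for one asset."""
    if not asset.consumers and not asset.capabilities:
        return "obsolete"   # nothing reads it and nothing depends on it
    if not asset.consumers and asset.capabilities <= migrated:
        return "redundant"  # every capability already lives in a core platform
    return "blocked"        # still has consumers or unmigrated capabilities


# Illustrative inventory; a real one would be assembled with the platform teams.
inventory = [
    Asset("contact_cleanse_job", "python_job", {"crm_sync"}, {"contact_cleansing"}),
    Asset("persona.events.v1", "kafka_topic", set(), {"persona_derivation"}),
    Asset("legacy.debug.topic", "kafka_topic"),
]
migrated = {"persona_derivation"}  # capabilities already handled elsewhere (assumed)

report = {asset.name: classify(asset, migrated) for asset in inventory}
for name, verdict in report.items():
    print(f"{name}: {verdict}")
```

Only assets that come back as obsolete or redundant would move on to isolation; anything blocked stays in place until its consumers or capabilities are accounted for.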

Next came controlled isolation. Through GitLab CI/CD pipelines, legacy jobs were commented out rather than immediately deleted. Pipelines were rebuilt and version-tagged. This ensured reversibility. If unexpected downstream behavior emerged, services could be reinstated quickly.
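
The value of disabling rather than deleting is that a rollback is a single, well-defined step. The sketch below models that idea in plain Python and is not the pipeline configuration itself: an `enabled` flag stands in for a commented-out job, a snapshot stands in for a version tag, and the `JobRegistry` class, job names, and tag names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class JobRegistry:
    """Tracks which legacy jobs are enabled, with tagged snapshots for rollback."""
    enabled: dict[str, bool]
    history: list[tuple[str, dict[str, bool]]] = field(default_factory=list)

    def isolate(self, job: str, tag: str) -> None:
        """Disable a job but keep its definition; snapshot the prior state under a tag."""
        if job not in self.enabled:
            raise KeyError(job)
        self.history.append((tag, dict(self.enabled)))
        self.enabled[job] = False

    def rollback(self, tag: str) -> None:
        """Restore the state captured just before the tagged change."""
        for i in range(len(self.history) - 1, -1, -1):
            saved_tag, snapshot = self.history[i]
            if saved_tag == tag:
                self.enabled = dict(snapshot)
                del self.history[i:]  # drop the tagged change and anything after it
                return
        raise ValueError(f"unknown tag: {tag}")

    def active(self) -> list[str]:
        """Names of jobs that would still run."""
        return sorted(job for job, on in self.enabled.items() if on)


registry = JobRegistry({"consent_sync": True, "persona_derive": True})
registry.isolate("consent_sync", tag="iso-1")
registry.isolate("persona_derive", tag="iso-2")

# Suppose the second isolation caused unexpected downstream behavior: reinstate it.
registry.rollback("iso-2")
print(registry.active())
```

Because nothing is deleted at this stage, reinstating a service never requires reconstructing it from memory.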

Infrastructure scale-down followed. OpenShift pods supporting the streaming layer were gradually reduced. Alerts were silenced in sequence to prevent monitoring noise from obscuring real issues. Kafka topics were deprecated methodically. Where necessary, timestamp resets were executed using controlled custom messages to manage offsets and consumer state.
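
One way to keep a gradual scale-down honest is to gate each reduction on consumer lag, which for a partition is the log end offset minus the consumer group's committed offset. The sketch below illustrates that gate only. It is an assumption that lag was the signal used in this environment; the function names, the threshold, and the sample offsets are hypothetical, and a partition with no committed offset is treated as fully lagging.

```python
def total_lag(end_offsets: dict[int, int], committed: dict[int, int]) -> int:
    """Sum per-partition lag: log end offset minus the group's committed offset.

    Partitions with no committed offset count from 0 (an assumption of this sketch).
    """
    return sum(max(end - committed.get(p, 0), 0) for p, end in end_offsets.items())


def next_replicas(current: int, lag: int, max_lag: int, step: int = 1) -> int:
    """Reduce the replica count by `step` only while lag stays within `max_lag`."""
    if lag > max_lag:
        return current  # hold: remaining consumers are falling behind
    return max(current - step, 0)


# Illustrative offsets keyed by partition number.
end_offsets = {0: 1200, 1: 980}
committed = {0: 1200, 1: 975}

lag = total_lag(end_offsets, committed)
replicas = next_replicas(current=4, lag=lag, max_lag=10)
print(lag, replicas)
```

If lag climbs past the threshold after a reduction, the count holds steady until the remaining consumers catch up or the topic is confirmed idle.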

Throughout the process, workflow orchestration through Apache Airflow and Luigi ensured dependency management remained intact.

Finally, business capabilities previously handled by custom services, such as contact cleansing, consent logic, and persona derivation, were transitioned into integrated core platforms, including Marketo, Adobe, and Sales Cloud. This reduced duplication of transformation logic and centralized governance within systems designed for those functions.

The objective was not merely shutdown. It was rationalization.

Platform Rationalization And System Hygiene

Beyond streaming services and batch pipelines, additional integrations were reviewed. Legacy services such as PathFactory integration, Drift integration, and PeopleStream-Salesforce CRM connectors were decommissioned where appropriate. Alerts were recalibrated. Informatica dependencies were validated with platform stakeholders. OpenShift deployments were scaled down responsibly.

The result was a significantly simplified operational surface area: fewer runtime services, reduced alert fatigue, clearer ownership boundaries, and lower maintenance overhead.

Complexity in distributed systems often accumulates invisibly. Removing it restores clarity.

Engineering Maturity In Practice

This initiative was not about replacing Kafka with another tool. It was about evaluating whether a custom streaming architecture still delivered strategic value.

Engineering maturity shows up in several ways:

- The willingness to question historical decisions.

- The discipline to observe before modifying.

- The ability to phase changes without disrupting business continuity.

- The capacity to align infrastructure decisions with long-term platform strategy.

By retiring legacy services, the organization reduced the cognitive load placed on engineers. Instead of managing topic offsets and debugging pod restarts, teams could focus on building forward-looking capabilities within modern data platforms such as Snowflake and Google BigQuery, leveraging DBT transformations, and designing scalable pipelines with Matillion and Hevo.

Most importantly, engineering capacity was redirected toward data and AI initiatives within a unified data environment.

Preparing For Data And AI Readiness

One of the most underestimated impacts of legacy integration sprawl is its effect on future-readiness.

AI initiatives demand clean data contracts, consistent governance, predictable pipelines, and high observability. Custom streaming layers with fragmented monitoring and duplicated transformation logic introduce noise and risk into that equation.

By consolidating capabilities into core platforms and simplifying integration paths, the enterprise created a cleaner foundation for advanced analytics, AI model experimentation, and scalable data product development.

Modernization, in this context, was not just cost optimization.

It was architectural preparation.

Build Phase Reimagined

In digital engineering, the Build phase is often associated with constructing new systems, deploying new environments, or introducing new frameworks.

But sometimes the most strategic build decision is subtraction.

In this case, removing 25+ Python jobs, retiring 150+ Kafka topics, dismantling OpenShift-based streaming services, and consolidating workflows across Matillion, Informatica Cloud, DBT, and Airflow did more to strengthen the architecture than adding another tool ever could.

- The system became leaner

- Governance became clearer

- Engineering focus shifted forward

For technical leaders facing similar patterns, the lesson is not that custom streaming is inherently flawed. It is that every architectural layer must periodically justify its existence.

When it no longer does, disciplined decommissioning becomes the most powerful modernization strategy available.

And when executed with observability, phased control, and platform alignment, it transforms not only infrastructure but engineering culture itself.

If your data ecosystem is becoming harder to scale and maintain, it may be time to rethink what to remove. Contact our team to explore a more sustainable path forward.

Kartik Shukla, Client Engagement Manager II

Kartik’s favorite sports are badminton and cricket. In his free time, he binge-watches suspense thrillers, cooks with his wife, and spends time with the WordPress community. He also likes to make new friends and travel to new places.
