Introduction

In the previous post in this series, we introduced the detection engine architecture: why static, hardcoded rules create operational drag, and how treating logic as data opens the door to truly dynamic systems. Now we go deeper. This post focuses on the first and most foundational pattern, threshold detection, and on the mechanism that makes it useful in production: hot-reloaded rules via Flink's broadcast state.

Threshold Detection

Every real-time detection system begins with thresholds. They are the first line of defense against risk, abuse, and anomalous behavior. They are also the rules that change most frequently.

In most systems, threshold logic is hardcoded or loaded at startup. A small adjustment, a value change, a new condition, or a rule disable triggers a full deployment cycle. This turns the most basic form of detection into an operational bottleneck.

Before tackling more complex patterns like velocity or correlation, the system must first answer a simpler question: can detection logic change while the system is running, without downtime or state loss?

The Naïve Approach And Why It Fails

A common implementation embeds threshold rules directly into streaming code or static configuration files.

This approach breaks down quickly:

- Rules require redeployments to change

- Stateful jobs must restart even for trivial updates

- Operational risk increases with every change

- The engineering team becomes the gatekeeper for routine rule updates

As detection logic grows, this rigidity compounds. What should be a fast decision becomes a slow release process.

Architectural Approach For Logic To Travel Like Data

To remove this friction, detection logic must be treated as data.

Rules should:

- Arrive continuously, not at startup

- Be versioned and observable

- Apply immediately to new events

- Propagate consistently across all parallel tasks

This requirement leads directly to a dual-stream design: one stream for events, another for rules.

Context: What This Engine Does

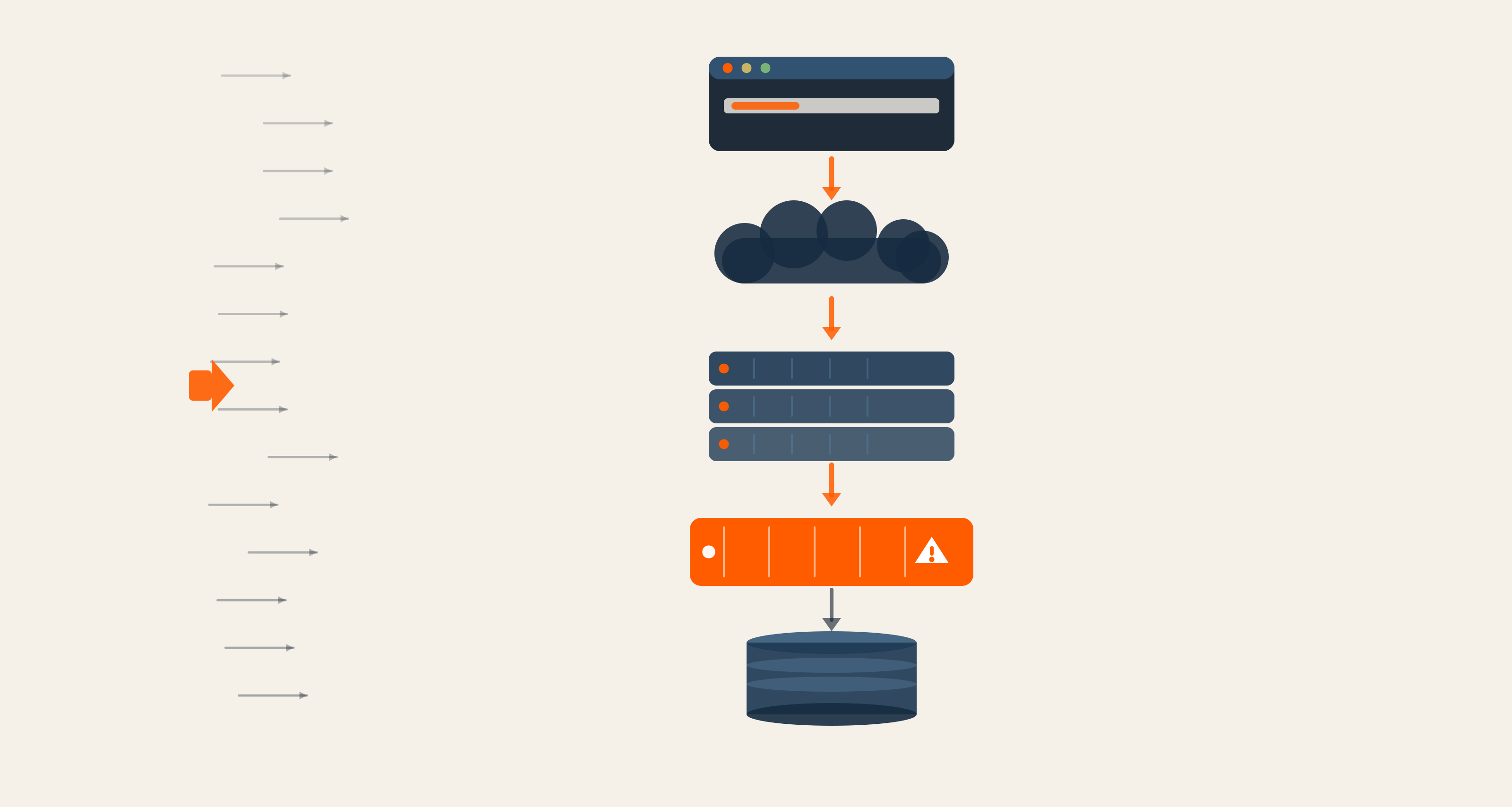

At a high level, the engine:

- Consumes events from Kafka (for example, events.raw)

- Evaluates them against active detection rules

- Emits enriched detections to a sink topic (for example, events.processed)

Rules themselves are streamed from Kafka (for example, rules.active) and hot-reloaded while the job is running.


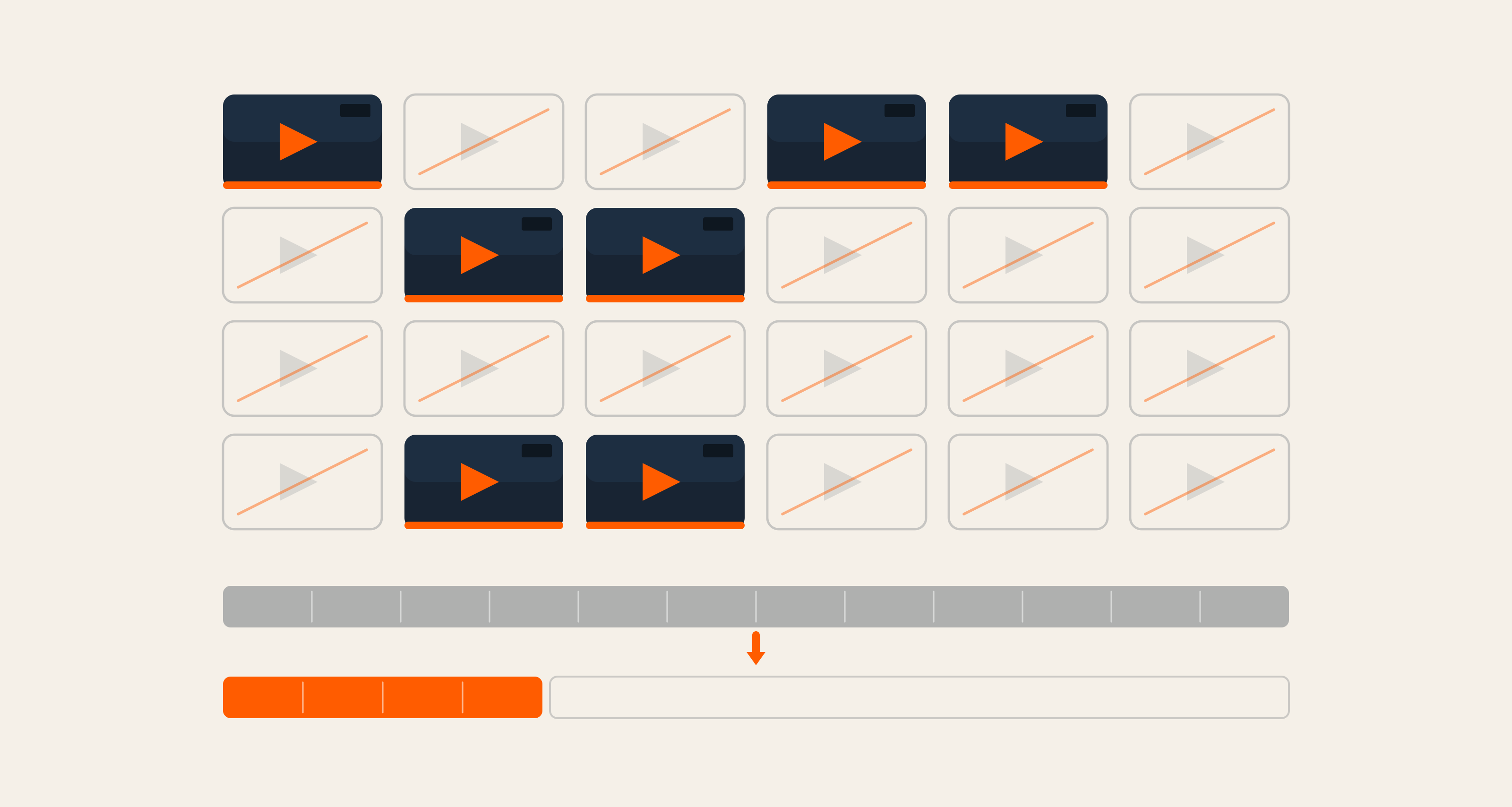

High-level flow (threshold path and beyond)

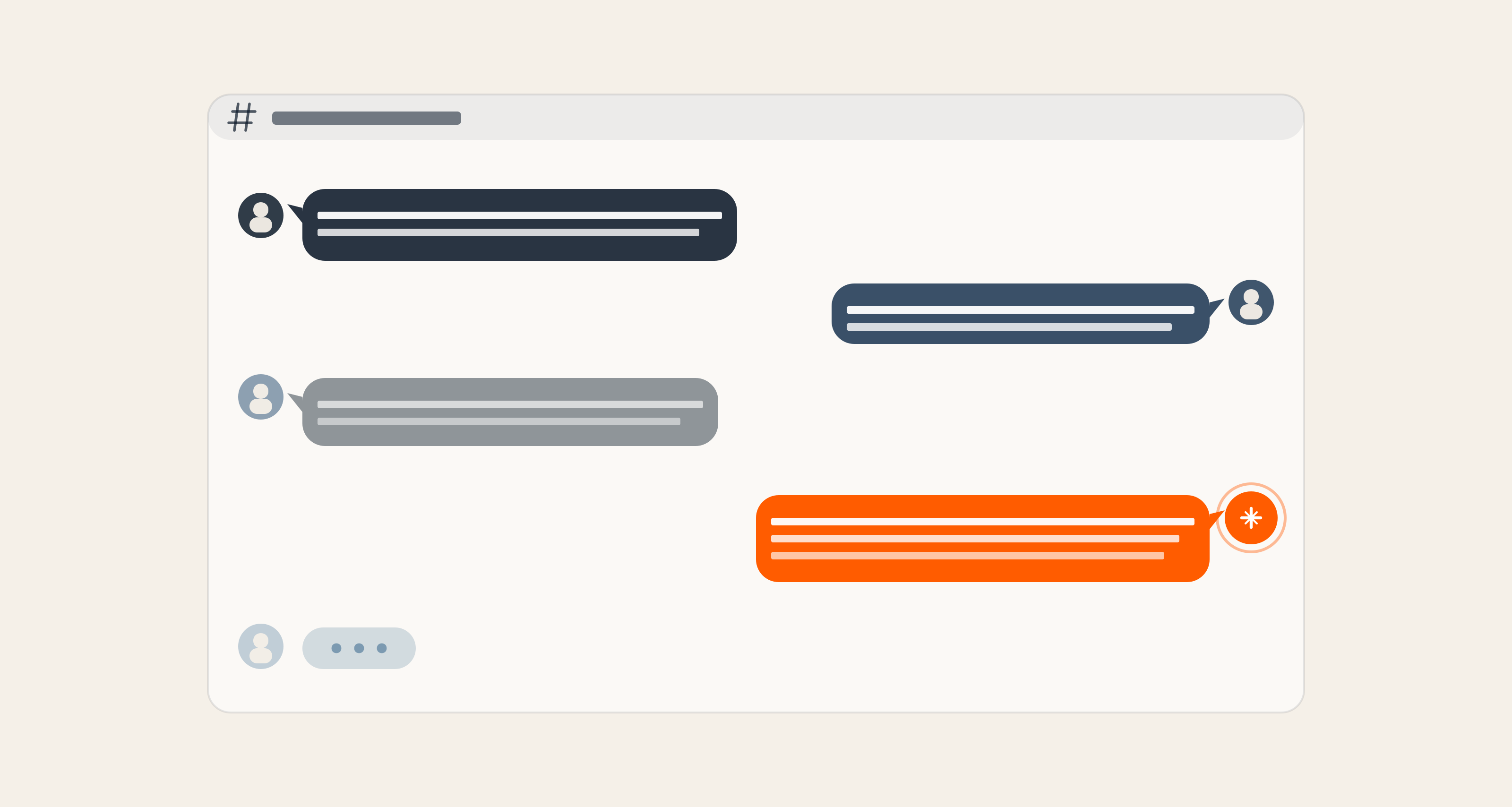

Rule reload (Kafka): how a new rule reaches every task

Flink’s Concepts Explained

This blog series relies on the following Flink/PyFlink concepts. If you are new to Flink, use the table below as a quick reference.

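| Concept | What it means |
|---|---|
| MapStateDescriptor | A descriptor that tells Flink how to create a key-value state: you give it a name and the key/value types (e.g. STRING, STRING). Flink uses it to create the actual state at runtime. For broadcast state, the same descriptor is used on every task so all tasks share the same logical map. |
| Broadcast state | State that is updated from a broadcast stream: every record from that stream is sent to every parallel task, and each task updates its local copy of the state the same way. The result is that all tasks hold the same logical data (e.g. the same set of rules). Ideal for low-volume “control” data (like rules) that must be visible everywhere. |
| BroadcastProcessFunction | A process function that handles two inputs: (1) a normal stream (e.g. events) and (2) a broadcast stream (e.g. rules). You implement process_element for the normal stream and process_broadcast_element for the broadcast stream. |
| get_broadcast_state(descriptor) | Method on the context (ctx) passed to your process_element and process_broadcast_element callbacks. It returns the broadcast state map for that descriptor: read-only in process_element, writable in process_broadcast_element. |
| broadcast(descriptor) | Called on a stream (e.g. the rules stream). Returns a BroadcastStream tied to that state descriptor. When you later connect another stream to it, every record from the broadcast stream is delivered to every parallel task. |
| connect(...) and .process(...) | events_stream.connect(broadcast_rules) pairs the events stream with the broadcast stream; .process(...) runs your BroadcastProcessFunction on the connected pair. |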

Threshold Pattern

Threshold rules are the simplest and most common form of detection logic, but getting them right at scale requires more than a condition check. Here is how the engine structures and evaluates them.

What Is A Rule? (Structure Common To All Rule Types)

Every rule in the engine shares a common shape:

- rule_id: Unique identifier (string).

- version: Version string; when rules are loaded from Kafka, bumping the version triggers hot reload so the job picks up the new definition.

- rule_type: One of threshold, velocity, or correlation; it determines how the engine evaluates the rule.

- source_topic: The Kafka topic this rule applies to (e.g., events.raw).

- Optional: name, description, enabled, tags.

The sink topic (where detections are written) is not set in the rule; it is set per job via --sink-topic (e.g., events.processed). Threshold rules add one more required block: conditions, a list of field conditions that must all be true for an event to match.

What Is A Threshold Rule?

A threshold rule is a set of conditions applied to event fields. If all conditions are satisfied for an event, the event matches, and we emit an enriched version to the sink. No history is kept; each event is evaluated independently (stateless).

Example rule: emit events where type == "purchase" and value >= 20. Supported operators: ==, !=, >, <, >=, <=. Conditions are combined with AND logic.
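A rule with that meaning could be written as the Python dictionary below and serialized to the JSON message that would be produced to rules.active. This is only a sketch: the condition keys (field, operator, value) follow the recap at the end of this post, while the rule_id, version, and name values are made up for illustration.

```python
import json

# Illustrative threshold rule; identifier, version, and name are hypothetical values.
EXAMPLE_RULE = {
    "rule_id": "purchase-over-20",
    "version": "1",
    "rule_type": "threshold",
    "source_topic": "events.raw",
    "name": "Purchases of 20 or more",
    "enabled": True,
    # Every condition must hold for an event to match (AND logic).
    "conditions": [
        {"field": "type", "operator": "==", "value": "purchase"},
        {"field": "value", "operator": ">=", "value": 20},
    ],
}

# Rules travel as JSON strings on the rules topic.
rule_message = json.dumps(EXAMPLE_RULE)
```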

An example of matching and non-matching events:

| Event | Match? | Why |
|---|---|---|
| | Yes | Both conditions true |
| | No | |
| | No | |

When an event matches, we emit an enriched JSON that includes the original payload plus processed, rule_id, rule_version, rule_type, and optional rule_name.
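The enrichment step can be sketched as a small helper. The function name enrich is hypothetical, and treating processed as a boolean flag is an assumption; the post only names the fields that are added.

```python
import json
from typing import Any, Dict


def enrich(event: Dict[str, Any], rule: Dict[str, Any]) -> str:
    """Return the event as JSON with rule metadata attached."""
    enriched = dict(event)  # copy so the original event is not mutated
    enriched["processed"] = True  # assumption: a simple boolean marker
    enriched["rule_id"] = rule["rule_id"]
    enriched["rule_version"] = rule["version"]
    enriched["rule_type"] = rule["rule_type"]
    if rule.get("name"):  # rule_name is optional
        enriched["rule_name"] = rule["name"]
    return json.dumps(enriched)
```

Copying the event before adding fields keeps the original payload untouched, which matters when one event is enriched once per matching rule.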

How We Built It: Flink’s Broadcast State And Our Rule Logic

We use Flink’s BroadcastProcessFunction so that rule updates and events are handled in one place: when a rule arrives, we store it in broadcast state via ctx.get_broadcast_state(descriptor); when an event arrives, we read that state and evaluate threshold rules. Flink’s MapStateDescriptor and broadcast() give us a shared map (rule_id → rule JSON) across all tasks; connect(broadcast_rules).process(...) wires the two streams to our function. Inside that function, we implement rule validation, condition evaluation, and enrichment.

Broadcast Function: Rule Storage And Event Evaluation

We extend BroadcastProcessFunction and use get_broadcast_state in both callbacks. When a rule arrives, we parse it, validate it with normalize_rule, and add it to or remove it from the state; when an event arrives, we iterate over the state and apply the threshold rules (velocity and correlation run in separate operators).
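A minimal sketch of such a function might look like the following. The constructor arguments are assumptions (the post only shows RulesBroadcastFunction(...)): the validator and matcher are injected as hypothetical hooks to keep the example short, invalid rules are simply skipped, and the emitted JSON carries only a reduced set of enrichment fields.

```python
import json

try:
    from pyflink.datastream.functions import BroadcastProcessFunction
except ImportError:  # lets the logic be read and unit-tested without PyFlink installed
    BroadcastProcessFunction = object


class RulesBroadcastFunction(BroadcastProcessFunction):
    """Stores rules in broadcast state and evaluates events against them."""

    def __init__(self, rules_state_desc, sink_topic, normalize_rule, rule_matches):
        self.rules_state_desc = rules_state_desc
        self.sink_topic = sink_topic
        self.normalize_rule = normalize_rule  # hypothetical hook: dict -> validated dict
        self.rule_matches = rule_matches      # hypothetical hook: (event, rule) -> bool

    def process_broadcast_element(self, value, ctx):
        # Rule side: runs on every parallel task for every rule record.
        try:
            rule = self.normalize_rule(json.loads(value))
        except (ValueError, KeyError):
            return  # assumption: malformed or invalid rules are skipped
        state = ctx.get_broadcast_state(self.rules_state_desc)
        if rule.get("enabled", True):
            state.put(rule["rule_id"], json.dumps(rule))
        else:
            state.remove(rule["rule_id"])

    def process_element(self, value, ctx):
        # Event side: a read-only view of the same rule map.
        event = json.loads(value)
        state = ctx.get_broadcast_state(self.rules_state_desc)
        for _rule_id, rule_json in state.items():
            rule = json.loads(rule_json)
            if rule.get("rule_type") != "threshold":
                continue  # velocity and correlation run in separate operators
            if self.rule_matches(event, rule):
                # Simplified enrichment: only rule_id is attached here.
                yield self.sink_topic, json.dumps({**event, "rule_id": rule["rule_id"]})
```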

Condition Evaluation And Rule Validation

We use get_field_value to read event fields (including nested paths like user.id) and evaluate_condition to compare against the rule’s expected value (with numeric coercion for >, <, etc.). When we receive a rule, we call normalize_rule, which enforces required fields and a non-empty conditions list before we place it in the broadcast state.
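The three helpers named above could be sketched as follows. The function names come from this post, but the bodies are assumptions: in particular, treating a missing field as a non-match, coercing both sides to float for ordering operators, and raising ValueError on invalid rules are illustrative choices, not confirmed engine behavior.

```python
import operator
from typing import Any, Dict

_MISSING = object()
_OPS = {
    "==": operator.eq, "!=": operator.ne,
    ">": operator.gt, "<": operator.lt,
    ">=": operator.ge, "<=": operator.le,
}
_ORDERING = {">", "<", ">=", "<="}
REQUIRED_FIELDS = ("rule_id", "version", "rule_type", "source_topic")


def get_field_value(event: Dict[str, Any], path: str) -> Any:
    """Follow a dotted path such as 'user.id'; return _MISSING if absent."""
    current: Any = event
    for part in path.split("."):
        if not isinstance(current, dict) or part not in current:
            return _MISSING
        current = current[part]
    return current


def evaluate_condition(event: Dict[str, Any], condition: Dict[str, Any]) -> bool:
    """Compare one event field with the expected value from the rule."""
    actual = get_field_value(event, condition["field"])
    if actual is _MISSING:
        return False  # assumption: a missing field never matches
    op, expected = condition["operator"], condition["value"]
    if op in _ORDERING:
        try:
            actual, expected = float(actual), float(expected)  # numeric coercion
        except (TypeError, ValueError):
            return False
    return _OPS[op](actual, expected)


def normalize_rule(rule: Dict[str, Any]) -> Dict[str, Any]:
    """Reject rules lacking required fields or, for thresholds, valid conditions."""
    missing = [name for name in REQUIRED_FIELDS if not rule.get(name)]
    if missing:
        raise ValueError(f"rule is missing required fields: {missing}")
    if rule["rule_type"] == "threshold":
        conditions = rule.get("conditions")
        if not isinstance(conditions, list) or not conditions:
            raise ValueError("threshold rule needs a non-empty conditions list")
        for cond in conditions:
            if cond.get("operator") not in _OPS:
                raise ValueError(f"unsupported operator: {cond.get('operator')!r}")
    return rule
```

With numeric coercion in place, an event whose value arrives as the string "25" still satisfies value >= 20, which is useful when upstream producers are inconsistent about types.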

Wiring: MapStateDescriptor, broadcast(), And connect().process()

We use Flink’s MapStateDescriptor to describe the shared map, rules_stream.broadcast(rules_state_desc) to turn the rules stream into the broadcast side, and events_stream.connect(broadcast_rules).process(RulesBroadcastFunction(...)) so that Flink delivers both rules and events to our function, where we read and write broadcast state.
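The wiring can be sketched as two small helpers. The helper names, the state name "rules", and the choice to pass the function and output type in as arguments are assumptions for illustration; the PyFlink calls themselves (MapStateDescriptor, broadcast, connect, process) are the ones named above.

```python
def make_rules_state_descriptor():
    """Describe the shared map: rule_id (STRING) -> rule JSON (STRING)."""
    # Imported lazily so this module also loads where PyFlink is not installed.
    from pyflink.common import Types
    from pyflink.datastream.state import MapStateDescriptor

    return MapStateDescriptor("rules", Types.STRING(), Types.STRING())


def wire_threshold_path(events_stream, rules_stream, rules_state_desc,
                        rules_function, output_type=None):
    """Connect events to broadcast rules and return the processed stream."""
    # Every rule record is now delivered to every parallel task.
    broadcast_rules = rules_stream.broadcast(rules_state_desc)
    # The function receives events via process_element and rules via
    # process_broadcast_element.
    return events_stream.connect(broadcast_rules).process(rules_function, output_type)
```

In a real job, the same descriptor instance would be passed both to wire_threshold_path and to the function's constructor, so that get_broadcast_state resolves to the map that broadcast registered.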

End-To-End Flow For Threshold

Putting it all together, here is how an event moves through the system from ingestion to enriched output.

- Events are read from Kafka (events.raw) and form events_stream.

- Rules are read from a Kafka topic (rules.active) and form rules_stream (unbounded).

- We create the broadcast state descriptor and the broadcast view of rules:

  broadcast_rules = rules_stream.broadcast(rules_state_desc)

  so that every rule record is sent to every parallel task and updates the same logical map (rule_id → rule JSON).

- We connect events and broadcast rules and process them with our function:

  threshold_processed = events_stream.connect(broadcast_rules).process(RulesBroadcastFunction(...), ...)

  For each event, process_element runs; it reads the broadcast state, loops over threshold rules, evaluates conditions via get_field_value and evaluate_condition, and yields (sink_topic, enriched_json) for each match.

- The output stream threshold_processed is later unioned with velocity and correlation results, mapped to a single JSON string (with _sink_topic set), and written to the Kafka sink.

So for threshold only: events stream + broadcast rules → connect → process (RulesBroadcastFunction) → threshold_processed → (later) union → map → sink.

Code Snippet: Condition Evaluation And Emit

Inside process_element, for each rule with rule_type == "threshold", we evaluate every condition against the event and, if all of them hold, emit the enriched record.

One event can match multiple threshold rules; we emit one (sink_topic, json) per matching rule.
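The per-rule loop can be sketched as a plain generator. This is a simplified stand-in, not the engine's code: the function name is hypothetical, it reads only top-level fields (no nested paths or numeric coercion), and it takes already-parsed rule dictionaries instead of the broadcast state.

```python
import json
import operator

OPS = {
    "==": operator.eq, "!=": operator.ne,
    ">": operator.gt, "<": operator.lt,
    ">=": operator.ge, "<=": operator.le,
}


def _holds(event, cond):
    """True if the event field satisfies one condition."""
    if cond["field"] not in event:
        return False
    try:
        return OPS[cond["operator"]](event[cond["field"]], cond["value"])
    except TypeError:  # e.g. ordering a string against a number
        return False


def match_threshold_rules(event, rules, sink_topic):
    """Yield (sink_topic, enriched_json) for every threshold rule the event satisfies."""
    for rule in rules:
        if rule.get("rule_type") != "threshold":
            continue  # other rule types are evaluated elsewhere
        conditions = rule.get("conditions") or []
        if conditions and all(_holds(event, c) for c in conditions):
            enriched = {
                **event,
                "processed": True,  # assumption: boolean marker
                "rule_id": rule["rule_id"],
                "rule_version": rule["version"],
                "rule_type": rule["rule_type"],
            }
            yield sink_topic, json.dumps(enriched)
```

Because the function yields inside the loop instead of returning on the first hit, an event that satisfies two rules produces two output records, each tagged with its own rule_id.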

Rule Reload

Hot reload is what separates a production-ready detection engine from a brittle one. Here is how rules flow from Kafka into every running task, without stopping the job.

How Do Rules Get Into The Job?

Rules are loaded from a Kafka topic (e.g. rules.active). The job subscribes to that topic; every message is a rule (JSON). New or updated rules are consumed as they are produced, so they hot-reload without a restart. The rest of the job sees a stream of rule records (JSON strings) that is broadcast and fed into the BroadcastProcessFunction.

Where Are The Rules Stored?

Rules are stored in the Flink broadcast state (refer to the concepts table above).

- We define it with a MapStateDescriptor: a named map with key type STRING (rule_id) and value type STRING (rule JSON). At runtime, Flink creates one such map per parallel instance of the operator; each instance lives in a TaskManager (state in memory or in the configured state backend).

- Because the rules stream is broadcast, every rule record is delivered to every instance. Each instance runs process_broadcast_element and does state.put(rule_id, json.dumps(rule)) (or state.remove(rule_id) if the rule is disabled). So every instance’s map is updated the same way, and they all hold the same logical set of rules.

So: rules are stored in the broadcast state map (rule_id → rule JSON) maintained by each task. There is no separate external “rules store”; the map is the rules store for the duration of the job.

How Does Reload Work Internally?

“Reload” here means “the next event should see the new rule.” There is no special reload API; we just have to update the same map.

- A new or updated rule is produced to rules.active (e.g. same rule_id, updated conditions).

- Flink’s Kafka source for the rules topic reads the record and emits one element on the rules stream.

- That stream is the broadcast side of the connected stream, so Flink sends that element to every parallel instance of the operator.

- Each instance runs process_broadcast_element(value, ctx): we parse the JSON, normalize the rule, get the broadcast state with ctx.get_broadcast_state(rules_state_desc), and then state.put(rule_id, json.dumps(rule)) (or state.remove(rule_id) if disabled).

- The next event that hits any instance triggers process_element; it calls state.items() and sees the updated rule. Evaluation uses the new definition immediately.

So internally: reload = new Kafka message → broadcast to all tasks → each task’s process_broadcast_element updates its broadcast state map → subsequent events see the new rules. No restart, no separate reload step.
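The reload sequence can be simulated without Flink to make the idea concrete. In this sketch, TaskRuleMap is a hypothetical stand-in for one task's broadcast state and broadcast() imitates Flink delivering one rule record to every parallel instance; none of these names come from the engine.

```python
import json


class TaskRuleMap:
    """Stand-in for one parallel task's broadcast state (rule_id -> rule JSON)."""

    def __init__(self):
        self._rules = {}

    def apply(self, rule_message: str) -> None:
        """Mirror process_broadcast_element: put enabled rules, remove disabled ones."""
        rule = json.loads(rule_message)
        if rule.get("enabled", True):
            self._rules[rule["rule_id"]] = json.dumps(rule)
        else:
            self._rules.pop(rule["rule_id"], None)

    def versions(self):
        """What the next event on this task would see: rule_id -> version."""
        return {rid: json.loads(raw)["version"] for rid, raw in self._rules.items()}


def broadcast(tasks, rule_message: str) -> None:
    """Deliver one rule record to every task, as the broadcast stream does."""
    for task in tasks:
        task.apply(rule_message)


tasks = [TaskRuleMap() for _ in range(3)]

broadcast(tasks, json.dumps({"rule_id": "r1", "version": "1"}))
after_v1 = [t.versions() for t in tasks]

# Same rule_id, new version: the map entry is overwritten on every task.
broadcast(tasks, json.dumps({"rule_id": "r1", "version": "2"}))
after_v2 = [t.versions() for t in tasks]

# Disabling the rule removes it everywhere.
broadcast(tasks, json.dumps({"rule_id": "r1", "version": "3", "enabled": False}))
after_disable = [t.versions() for t in tasks]
```

Each snapshot is identical across the three simulated tasks, which is the property that lets any task evaluate any event against the same rule set.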

Flink Concepts Used For Rule Reload

| What we achieve | Flink concept / API |
|---|---|
| Rules from Kafka (ongoing) | KafkaSource + from_source(...), unbounded stream |
| Same rules on every task | MapStateDescriptor + stream.broadcast(descriptor) + broadcast state in BroadcastProcessFunction |
| Connect events + rules, process both | events_stream.connect(broadcast_rules).process(RulesBroadcastFunction(...)) |
| Read/update the shared rules map | ctx.get_broadcast_state(descriptor) then state.put / state.remove / state.items() |

What This Pattern Gets You And Where It Goes Next

Here is a quick recap of what the threshold pattern and broadcast state give you, and a look at what the rest of this series will build on top of it.

- Threshold: Stateless filtering via a list of conditions (field, operator, value). Implemented inside a BroadcastProcessFunction that reads rules from broadcast state and uses get_field_value and evaluate_condition; Flink provides the broadcast state (via MapStateDescriptor and broadcast) and the two-input process function contract.

- Rule reload: Rules are stored only in broadcast state (a map per task, kept in sync by the broadcast stream). Rules are loaded from Kafka (KafkaSource / from_source); producing a new message to the rules topic updates the map on all tasks, and the next event sees the new rule, with no restart required.

In the next post, we’ll cover the velocity pattern (keyed state, windows, aggregations, and optional side output for correlation). The third post will cover the correlation pattern (primary + context streams, lookback, and metrics).

Building real-time detection systems and want to talk architecture? If you're exploring Flink, Kafka, or composable data pipelines for your platform, our team at Axelerant is happy to help.

Qais Qadri, Senior Software Engineer

Qais enjoys exploring places, going on long drives, and hanging out with close ones. He likes to read books about life and self-improvement, as well as blogs about technology. He also likes to play around with code. Qais lives by the values of Gratitude, Patience, and Positivity.
