Introduction

Most teams use AI to write code faster. We used it to make delivery more predictable, encoding that into two systems: an open-source Claude Code plugin that runs inside the engineer's loop, and an in-house Slack-native agent that runs across the engagement.

The interesting question for engineering leaders right now isn't whether AI can write code. The question is whether AI can deliver a project the way you'd want one delivered: spec before build, validation before commit, tests before PR, an audit trail a sponsor can read, and the engagement context preserved from the first kick-off call to the production rollout six months later.

Most "AI in engineering" pitches sell speed: write a function in seconds, generate a test with a click. For a single developer, that's real productivity. For a delivery organization running dozens of concurrent engagements, it's rarely the constraint.

The constraints that matter are consistency and the slow loss of context over an engagement's lifetime. Specs get skipped because the deadline is loud. Config gets hand-edited because the deploy is hot. Tests get postponed because the demo is tomorrow. Decisions made in a Slack thread on Tuesday can't be found on Friday. Senior engineers hold a project's patterns and a client's hidden constraints in their heads, and take both with them when they rotate.

At a steering committee, the questions are:

- How do we know the work was specified before it was built?

- How do we map a production change back to a business decision six months later?

- How do we maintain consistent delivery quality when the team rotates?

Speed rarely comes up. AI without governance amplifies the gaps, because where a human stops to ask questions mid-build, an AI agent confidently produces the wrong thing.

So we didn't start with autocomplete. We started with the delivery floor and with the engagement memory underneath it. The Drupal SDLC plugin runs inside the engineer's loop, from ticket to merged PR. Bott, our Slack-native engineering agent, runs across the engagement, from the first scoping call onward. Each is useful on its own, and together they cover the two layers of delivery that most tooling leaves uncovered.

The Drupal SDLC Plugin: Encoded Delivery, Inside The Engineer's Loop

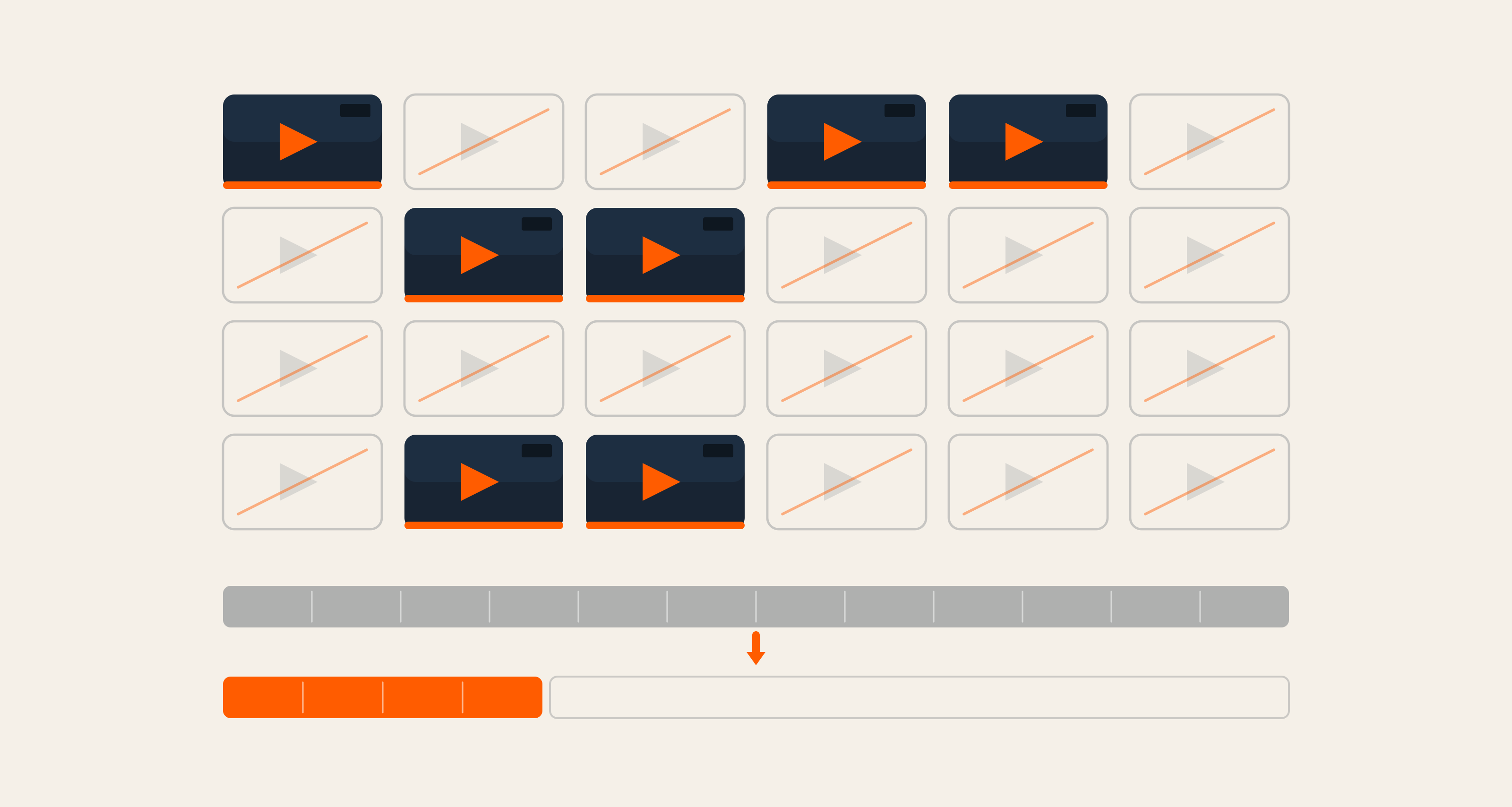

drupal-sdlc-plugin is our open-source Claude Code plugin that encodes how Axelerant delivers Drupal projects. It's on GitHub, free to install, and documented end-to-end. There are eleven skills across four layers: planning (spec writing), build (config generation, module scaffolding), quality (validation, test generation, lint repair), and delivery (PR creation, automated review, hotfix handling).

Any skill is usable on its own, and they also compose. One command, `work on Jira ticket KEY-X`, runs the full lifecycle: read the ticket, write a spec, wait for human approval, post the spec back to Jira, transition the ticket, create a feature branch, implement, validate, run every test, raise the PR with a risk assessment, run an automated review, and post the PR link back to Jira.

Two human checkpoints sit inside that loop on purpose: spec approval and PR merge. We could have built this to run end-to-end without intervention, but we deliberately chose otherwise. A spec that looks correct to an AI can still miss business context known only to the engineer on the engagement, and a PR that passes every check can still be the wrong architectural call. Removing those checkpoints would have produced a faster cycle and a worse delivery posture.
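As a rough illustration of that shape, here is a minimal Python sketch of a lifecycle that runs in order and halts at a human gate. It is not the plugin's code: the step identifiers, the `approve` callback, and the placement of `merge_pr` as the final step are assumptions made for the example.

```python
from typing import Callable

# Step names paraphrase the lifecycle described above; they are illustrative,
# not the plugin's real skill or command names.
STEPS = [
    "read_ticket",
    "write_spec",
    "approve_spec",       # human checkpoint
    "post_spec_to_jira",
    "transition_ticket",
    "create_branch",
    "implement",
    "validate",
    "run_tests",
    "raise_pr",
    "automated_review",
    "post_pr_link",
    "merge_pr",           # human checkpoint (assumed to come last)
]
HUMAN_GATES = {"approve_spec", "merge_pr"}


def run_lifecycle(ticket: str, approve: Callable[[str, str], bool]) -> list[str]:
    """Run steps in order and stop at the first human gate that is not approved."""
    completed: list[str] = []
    for step in STEPS:
        if step in HUMAN_GATES and not approve(ticket, step):
            break  # nothing after an unapproved gate is allowed to run
        completed.append(step)
    return completed


if __name__ == "__main__":
    # Spec approved, merge still pending: everything up to the PR link runs.
    done = run_lifecycle("KEY-X", lambda ticket, gate: gate == "approve_spec")
    print(done[-1])
```

The point of the sketch is that the gates are part of the step list itself, so there is no code path that reaches implementation without passing spec approval.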

What the plugin enforces is the discipline senior engineers carry in their heads:

- Specs come before code, with numbered acceptance criteria, test mapping, and named Drupal constructs.

- Best practices are enforced: dependency injection over `\Drupal::service()` in classes, defined route permissions, CSRF on forms, and input sanitization on user data. Security checks happen before a senior engineer opens the PR.

- Config discipline is strict. Every change goes through a clean round-trip (import, export, status check) before commit; UUIDs and `_core` keys are never hand-edited.

- Tests are written upfront. No PR ships with a failing test, and hotfix mode still runs validation.

- Protected files stay protected. `settings.php`, `*.install`, and `config_split` YAMLs are guarded by pre/post hooks that block unapproved writes and syntax-check every edit.

- Learnings stay captured. Project patterns worth keeping go back into the skill or a project-level override, so the next ticket and the next engineer inherit them rather than rediscover them.
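To make the protected-files rule concrete, the following Python sketch shows one way a pre-write hook could block unapproved edits. It covers only the blocking half, not the syntax check. The glob patterns (in particular `config_split.*.yml`), the function names, and the `approved` flag are assumptions for illustration and are not taken from the plugin.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath

# Hypothetical patterns for the protected files named above.
PROTECTED_PATTERNS = ["settings.php", "*.install", "config_split.*.yml"]


def is_protected(path: str) -> bool:
    """Match on the file name only, so the rule holds in any directory."""
    name = PurePosixPath(path).name
    return any(fnmatchcase(name, pattern) for pattern in PROTECTED_PATTERNS)


def pre_write_hook(path: str, approved: bool = False) -> bool:
    """Return True if the write may proceed, False if it must be blocked."""
    return approved or not is_protected(path)


if __name__ == "__main__":
    for candidate in [
        "web/sites/default/settings.php",
        "web/modules/custom/foo/src/Foo.php",
    ]:
        print(candidate, "allowed" if pre_write_hook(candidate) else "blocked")
```

Matching on the file name keeps the rule independent of where a project places its docroot or config directory.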

Every PR becomes a complete deliverable. It contains the Jira link, change summary, file-by-file rationale, test plan, automated test coverage, risk assessment, and a link back to the approved spec. Every numbered acceptance criterion is mapped to an implementation and a test. Six months later, when a sponsor asks why a particular change was made, the artifact answers the question.
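The criterion-to-test mapping can be pictured as a small traceability check. The sketch below is hypothetical: the `Criterion` fields, file paths, and test names are invented for the example, and the plugin may represent this mapping quite differently.

```python
from dataclasses import dataclass, field


@dataclass
class Criterion:
    """One numbered acceptance criterion from an approved spec."""
    number: int
    text: str
    implemented_in: list[str] = field(default_factory=list)  # files or classes
    tested_by: list[str] = field(default_factory=list)       # test identifiers


def unmapped(criteria: list[Criterion]) -> list[int]:
    """Return the numbers of criteria missing an implementation or a test."""
    return [c.number for c in criteria if not c.implemented_in or not c.tested_by]


if __name__ == "__main__":
    spec = [
        Criterion(1, "Editors can schedule a node",
                  ["src/Form/ScheduleForm.php"], ["ScheduleFormTest::testSubmit"]),
        Criterion(2, "Anonymous users get a 403",
                  ["example.routing.yml"], []),
    ]
    print(unmapped(spec))  # criterion 2 has no test yet
```

A check like this can run before the PR is raised, so a gap shows up as a failed step and not as a question from a sponsor months later.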

Three-quarters of what the plugin enforces has nothing to do with Drupal. A senior engineer ported it to Mautic and Laravel in under two hours. A site builder used config-builder on its own to generate Site Studio components as pure YAML. A delivery lead on one of our largest CDM engagements layered domain context on top and used the plugin as the foundation. The platform layer is thin, and the delivery logic is portable.

Access Axelerant's Open-Source Drupal SDLC Plugin

Bott: Engagement Memory, From The First Call To The Next PR

The plugin solves the engineer's loop, but it doesn't solve the engagement's loop, and that's where most context loss in delivery actually happens: decisions made in the kick-off, a constraint mentioned off-record on a discovery call, an architectural choice taken in a thread nobody re-reads, a Sentry issue everyone agreed to triage and nobody picked up, the tribal knowledge a senior engineer carries to the next account.

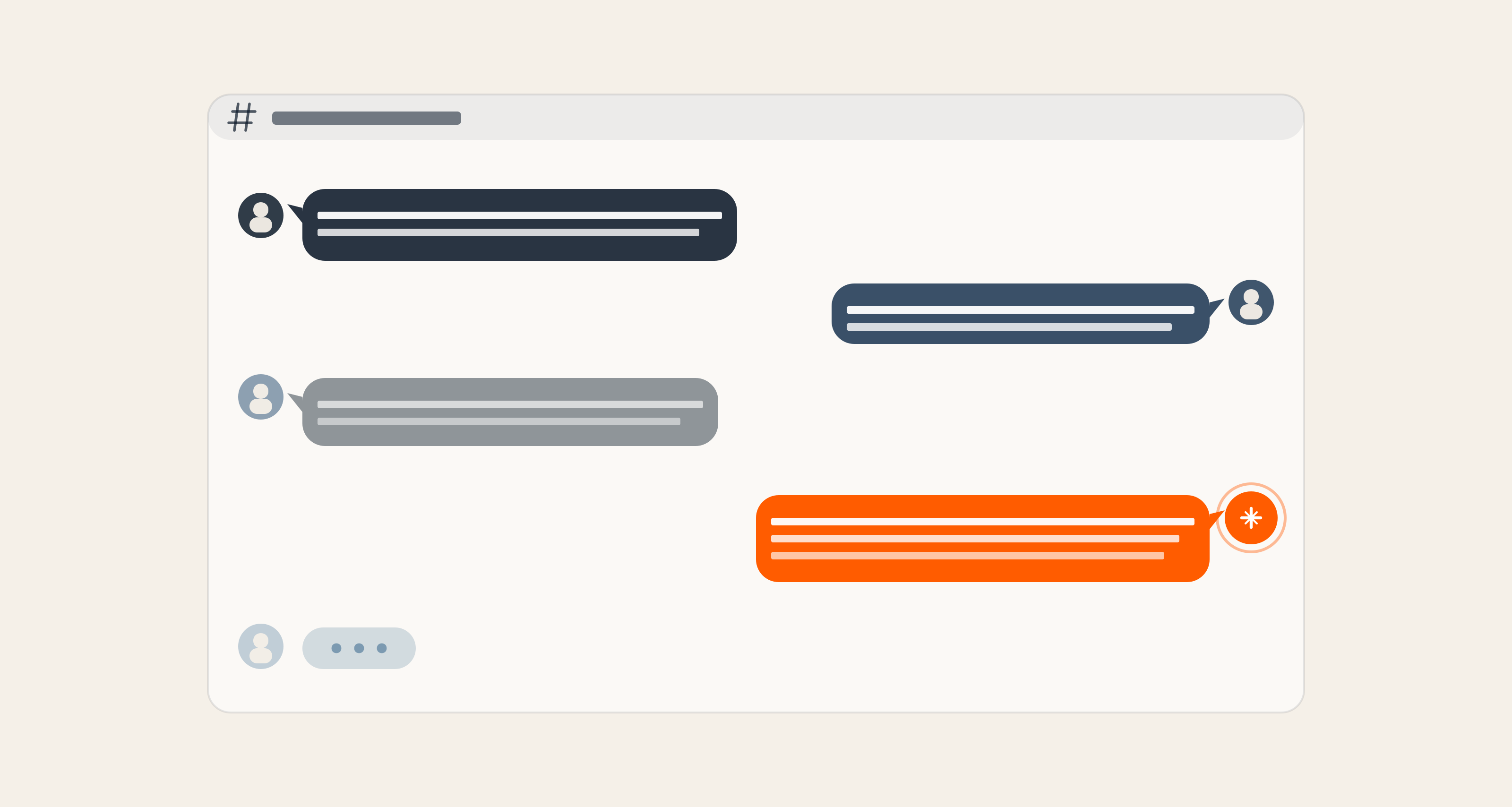

Bott is the layer for that.

Bott is our in-house Slack-native engineering agent, built and operated by Axelerant for the engagements we run, that joins the project on day one. You connect it to the project's Slack channel (the one where the actual conversation happens), and from that point forward it captures the work as it unfolds: discovery call notes, pre-read documents, architecture decisions made in thread, pain points raised by the client, and the reasons behind a "let's not do it that way" that would otherwise live only in someone's memory.

Bott is also connected to the codebase. With every iteration and every new PR, the codebase context stays current, and conversations and code are contextualized against each other. A discussion about a flaky workflow on Tuesday gets linked to the file it concerns. A decision recorded in a thread is linked to the resulting implementation. Bott becomes the single interface where the engagement, the codebase, and the project's institutional memory meet.

That foundation is what keeps the autonomous behavior useful instead of noisy. From inside the channel, Bott can:

- Run automated code reviews on PRs against the full engagement context, drawing on the decisions and constraints that shaped the change as well as the diff itself.

- Triage incoming Sentry issues against the codebase, surface likely causes, and propose fixes.

- Open pull requests directly from a Slack instruction. `@bott work PRM-456` turns a Jira ticket into a feature branch, an implementation, a PR, and a comment back on the ticket, so nobody has to drop into a local environment.

- Update Jira tickets and post the status back to the channel, keeping the audit trail up to date as work happens.

- Surface insights over time: technical debt that's accruing, areas that need a POC, and concerns the team has been circling around in conversation but hasn't yet acted on.

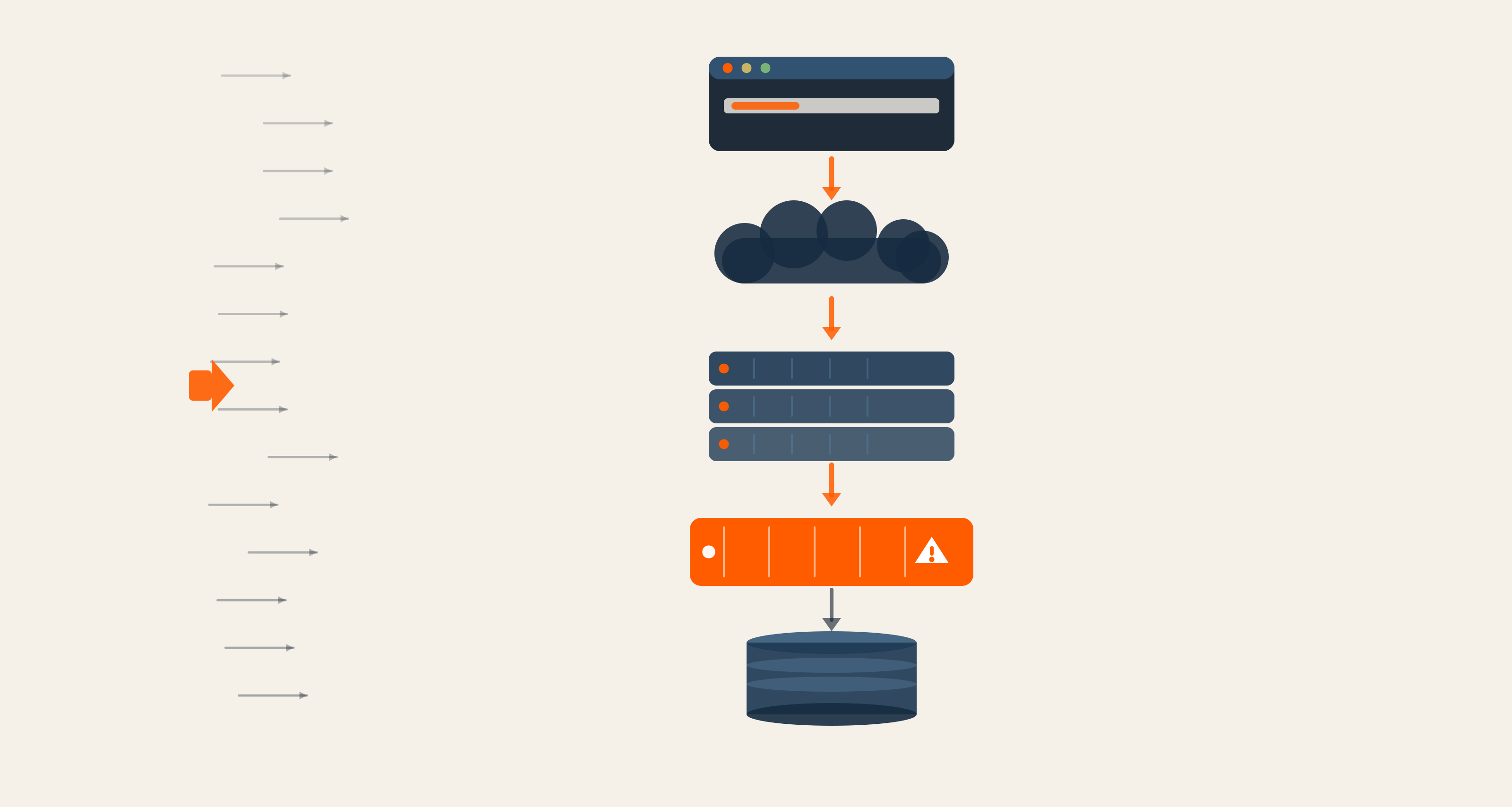

Because Bott aggregates across Slack, the codebase, Jira, and Sentry, it can also report at the level a steering committee uses: DORA metrics on delivery flow, AI adoption inside the team (including where it's working and where it isn't), and concrete suggestions for where the engagement should move next.
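For readers less familiar with DORA metrics, here is a small Python sketch of two of them, lead time for changes and deployment frequency, computed from commit and deploy timestamps. The data shape and function names are assumptions for illustration and say nothing about how Bott actually collects or computes these figures.

```python
from datetime import datetime, timedelta
from statistics import median

# Each change is (commit time, production deploy time); the shape is illustrative.
Change = tuple[datetime, datetime]


def median_lead_time_hours(changes: list[Change]) -> float:
    """Median hours from commit to production deploy."""
    return median(
        [(deployed - committed).total_seconds() / 3600 for committed, deployed in changes]
    )


def deploys_per_week(changes: list[Change], window: timedelta) -> float:
    """Distinct deploy timestamps divided by the window length in weeks."""
    deploys = {deployed for _, deployed in changes}
    return len(deploys) / (window / timedelta(weeks=1))


if __name__ == "__main__":
    sample = [
        (datetime(2024, 1, 1, 9), datetime(2024, 1, 1, 15)),
        (datetime(2024, 1, 2, 9), datetime(2024, 1, 3, 9)),
        (datetime(2024, 1, 8, 10), datetime(2024, 1, 8, 12)),
    ]
    print(median_lead_time_hours(sample))
    print(deploys_per_week(sample, timedelta(days=14)))
```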

Governance is built in: per-tenant tokens, per-tenant tools, and default-deny on every mutating action, with structured JSON audit logs of every attempt. Each agent is built with the tenant's exact tools and credentials, so there's no shared workspace and no global tool that gets filtered down.
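A default-deny policy with an audit trail can be sketched in a few lines of Python. Everything named here (`TenantPolicy`, `authorize`, the tool identifiers, and the log fields) is hypothetical and chosen for the example; it is not Bott's implementation.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class TenantPolicy:
    tenant: str
    tools: frozenset[str]                            # tools this tenant's agent is built with
    allowed_mutations: frozenset[str] = frozenset()  # empty set: every write is denied


def authorize(policy: TenantPolicy, tool: str, mutating: bool, audit_log: list[str]) -> bool:
    """Decide one tool call and record the attempt, allowed or not, as a JSON line."""
    if tool not in policy.tools:
        allowed, reason = False, "tool_not_provisioned"
    elif mutating and tool not in policy.allowed_mutations:
        allowed, reason = False, "mutation_not_allowed"
    else:
        allowed, reason = True, "ok"
    audit_log.append(json.dumps({
        "tenant": policy.tenant,
        "tool": tool,
        "mutating": mutating,
        "allowed": allowed,
        "reason": reason,
    }, sort_keys=True))
    return allowed


if __name__ == "__main__":
    log: list[str] = []
    policy = TenantPolicy(
        tenant="acme",
        tools=frozenset({"jira.read", "jira.comment", "github.open_pr"}),
        allowed_mutations=frozenset({"jira.comment"}),
    )
    authorize(policy, "jira.read", False, log)       # reads pass
    authorize(policy, "github.open_pr", True, log)   # write not yet allowed
    print("\n".join(log))
```

The denied attempt is logged as well as the allowed one, which is what makes the log usable as an audit trail and not just a success history.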

The shape that emerges is different from that of a typical AI assistant in a sidebar. Bott is the engagement's memory and its hands.

How The Two Systems Compose

The plugin enforces how a piece of work gets delivered. Bott carries the context that decides what should be delivered and why. When an engineer runs the plugin on a ticket, Bott contributes the engagement context against which the spec is being written. When Bott raises a PR autonomously from Slack, the plugin's discipline shapes the PR. The engineer's loop and the engagement's loop stop drifting apart.

This is the posture we wanted: AI-native delivery, in production, with your governance, across the line of code and across the engagement.

To make this concrete, the two of us walked through both systems together on a real Drupal project: reading a Jira ticket, generating specs, implementing features, validating tests, raising PRs, and running AI-assisted code reviews. The full conversation is below.

What This Means For Engineering Leaders

Three implications, whether or not you ever install either system.

AI-native delivery is now a question of predictability rather than acceleration. The teams that earn the trust of enterprise buyers over the next eighteen months will be the ones whose AI use is encoded, governed, and auditable across the whole engagement, including outside the IDE.

Delivery discipline is portable. Three-quarters of what the plugin enforces has nothing to do with Drupal, and the same architecture applies to any platform. We open-sourced the Drupal version, and the pattern is yours to adopt on whatever stack you run.

The most useful place for AI in delivery sits above the line of code, at the floor of the engagement, and in the memory across it. The plugin is the floor, the minimum delivery standard every engineer is held to, regardless of seniority. Bott is the memory, the institutional context that no rotation can take with it. Senior engineers add judgment on top of both.

Where To Take This From Here

The plugin is open-source and free for anyone to try. It lives on GitHub, ready to be cloned, installed, and run against your own delivery floor on whatever stack you build. Bott is the engagement-memory layer we operate within our own delivery; if you want to see what that looks like on a project with us, talk to us.

If the demo above raised questions specific to your stack or team, get in touch, and we'll set up a live walkthrough of a project shape closer to yours.


Bassam Ismail, Director of Digital Engineering

Away from work, he likes cooking with his wife, reading comic strips, or playing around with programming languages for fun.

Pulkit Tyagi, Software Engineer

Pulkit is a timewalker: biker at dawn, codeweaver by day, and sci-fi philosopher by night. From roadside chai to smart contracts, he blends curiosity and creativity to build tech that teaches and empowers.

